Recently I’ve had a couple of opportunities to think again about how a secure password reset function should operate, firstly whilst building this functionality into ASafaWeb and secondly when giving some direction for someone else doing a similar thing. In that second instance, I wanted to point them to a canonical resource on the ins and outs of securely implementing a reset function. Problem is though, there isn’t one, at least not covering everything I believe is important. So here it is.

You see, the world of forgotten passwords is actually a little murky. There are plenty of different perfectly legitimate angles and a bunch of pretty bad ones as well. Chances are you’ve experienced each many times as an end user so let me try and draw on some of these examples to see who’s doing it well, who’s not and what you need to focus on to get it right in your app.

Password storage: hashing, encrypting and (gasp!) plain text

We can’t talk about what to do with forgotten passwords until we talk about how they’re stored in the first place. We’ve got three primary ways in which passwords will usually be persisted in a database:

- Plain text. You have a password column and it sits there in the clear.

- Encrypted. Usually using symmetric encryption (the one key to both encrypt and decrypt), the encrypted password also sits there in a single column.

- Hashed. A one-way process (you can hash but not un-hash), the password is hopefully accompanied by a salt, each of which sits in its own column.

Let’s just get that first one out of the way quickly; never store passwords in plain text! Ever. One little injection vulnerability, one sloppy backup or any one of a dozen other simple little slipups and it’s game over, all your passwords – sorry – all your customers’ passwords are in the public domain. Which, of course, means a better than average chance that all their passwords for all their other accounts on totally independent systems are in the public domain. And it’s your fault.

Encryption is better, but still flawed. The problem with encryption is decryption; it’s possible to take those crazy looking ciphers and convert them back to plain text and once that happens, you’re back with readable passwords. How does this happen? A little flaw sneaks into the code which decrypts the password and makes it publicly accessible – that’s one way. The machine the encrypted data sits on gets owned – that’s another way. Another way again is that the database backup is obtained and someone also gets their hands on the encryption key, which is frequently pretty poorly managed.

Which leads us to hashing. The idea of hashing is that it only goes one way; the only way you can ever match a password from a user with its hashed partner is to hash the input and compare it. In order to prevent attacks from tools such as rainbow tables, we add randomness to the process by using a salt (check out my post on cryptographic storage for the full picture). The bottom line is that when done properly, we can have a high degree of confidence that hashed passwords should never again become plain text (I’ll save the respective merits of various hashing algorithms for a later post).
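To make the salt-and-hash idea concrete, here is a minimal sketch. The choice of PBKDF2 via Python's standard `hashlib`, the iteration count and the function names are all my assumptions for illustration rather than a recommendation from this post; the point is only that a random salt is generated per password and that verification works by hashing the input and comparing.

```python
import hashlib
import hmac
import os

# Assumed work factor for illustration only; tune it for your own hardware.
ITERATIONS = 200_000


def hash_password(password: str) -> tuple:
    """Return (salt, digest), intended to be stored in two separate columns."""
    salt = os.urandom(16)  # cryptographically random and unique per password
    digest = hashlib.pbkdf2_hmac(
        "sha256", password.encode("utf-8"), salt, ITERATIONS
    )
    return salt, digest


def verify_password(password: str, salt: bytes, stored: bytes) -> bool:
    """Hash the supplied password with the stored salt and compare the results."""
    candidate = hashlib.pbkdf2_hmac(
        "sha256", password.encode("utf-8"), salt, ITERATIONS
    )
    # Constant-time comparison avoids leaking how many bytes matched.
    return hmac.compare_digest(candidate, stored)
```

Nothing in this sketch can turn the stored digest back into the password, which is exactly why a "reminder" email becomes impossible once passwords are stored this way.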

A quick argument about hashing versus encrypting; the only reason you should ever need to encrypt and not hash is when you want to see the plain text password and you should never want to see this, at least not in a typical website scenario. If you do, you’re probably doing something else wrong!

Warning!

A little bit further down this page is a partial screen grab of the website AlotPorn. It’s been very carefully cropped and you won’t see anything you won’t see at the beach, but don’t scroll beyond here if that might still cause offence.

Always reset, never remind

Ever been asked to build a password reminder function? Take a step back and work through that request in reverse; why is a “reminder” needed? Because the user has forgotten their password. What are we really trying to do? Help them log back in again.

I get it – the word “reminder” is (often) used colloquially – but what we’re really setting out to do here is to securely help the user get back online. Because we want to be secure, there are two reasons why a reminder (i.e. actually sending them their password) won’t work:

- Email is an insecure channel. In the same way as we wouldn’t send anything sensitive over HTTP (we’d use HTTPS), the transport layer for email is not secure. Actually, it’s much worse than just sending info over an insecure transport protocol as your mail often persists in storage, is accessible by system admins, is readily forwarded and redistributed, is accessed by malware and so on and so forth. Unencrypted mail is an extremely insecure channel.

- You shouldn’t have access to the password anyway. Go back to that previous section about storage – all you should have is the password hash (with a nice strong salt), which means there’s no way you can pull the password back out and email it around anyway.

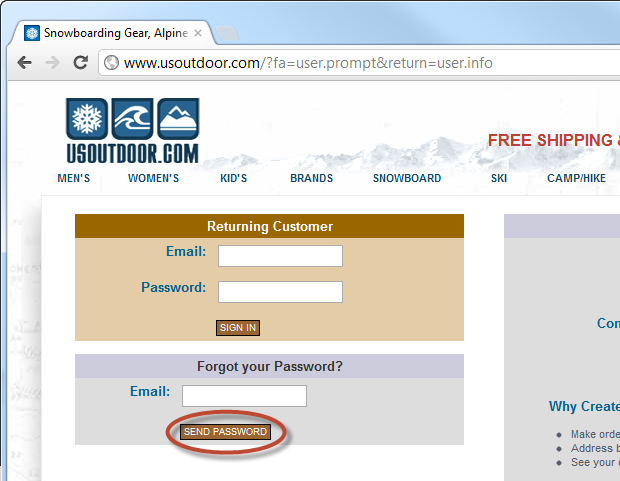

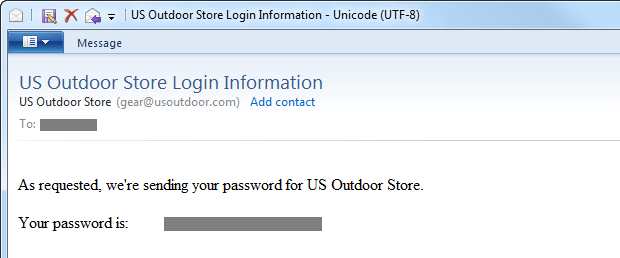

Let me demonstrate the problem courtesy of usoutdoor.com: Here’s a typical login page:

Clearly the first problem is that the logon page hasn’t been loaded over HTTPS, but then they’ve also gone and offered to “Send Password”. Now maybe that’s an example of the earlier mentioned colloquial use of the term, so let’s dig a bit further and see what happens:

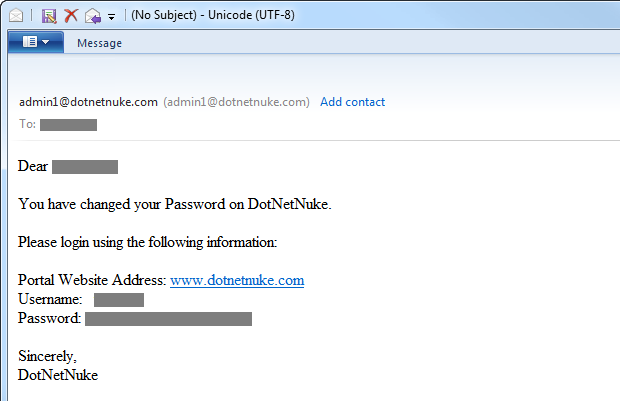

Not looking much better, unfortunately and the email confirms the problem:

So this tells us a couple of important things about usoutdoor.com:

- They’re not hashing the password. At best they’re encrypting it but they’re quite possibly just storing it in the clear; we have no evidence to the contrary.

- They’re sending a persistent password – one we can go back and keep using over and over – via an insecure channel.

Now that we’re clear on that, the trick becomes how we go about ensuring the reset process happens securely and the first step to doing that is to establish that the requestor is actually authorised to perform the reset. In other words, we need a bit of identity verification but before we do that, let’s look at what happens when identity is confirmed without first verifying the requestor is actually the owner of the account.

Username enumeration and the impact on anonymity

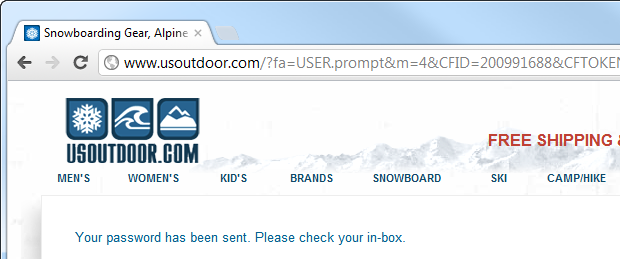

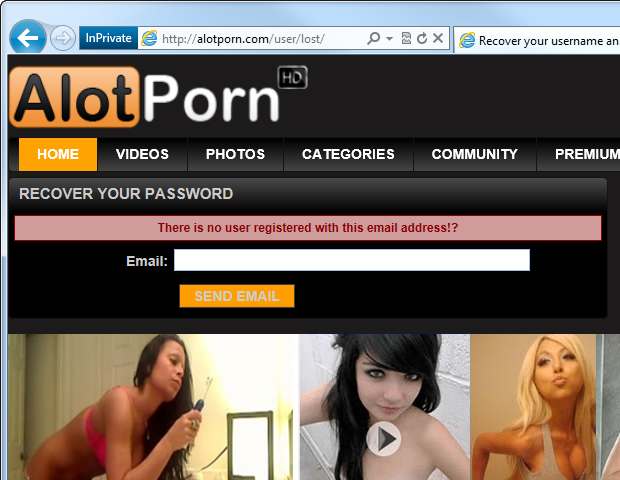

Here’s a problem best illustrated graphically. The problem is this:

You see that? Focus now – we’re looking at the message which says “There is no user registered with this email address”. The problem, of course, is when a site like this confirms there is a user registered with that email address. Bingo – you’ve just uncovered your husband’s / boss’s / neighbour’s porn fetish!

Of course porn is a bit of a canonical example of where privacy is important, but the risk of matching an individual to a particular website goes beyond a potentially embarrassing disclosure such as this. One risk that arises is one of social engineering; once an attacker can match a person to a service, they have a piece of information that they can begin leveraging. For example, they may contact the individual whilst posing as a representative of the website and ask for additional information in a spearphishing attack.

This practice also opens up the risk of “username enumeration” where an entire collection of usernames or email addresses can be validated for existence on the website simply by batching requests and looking at the responses. Got a list of everyone’s email address from the office and a few spare minutes to do some scripting? You can see the problem!

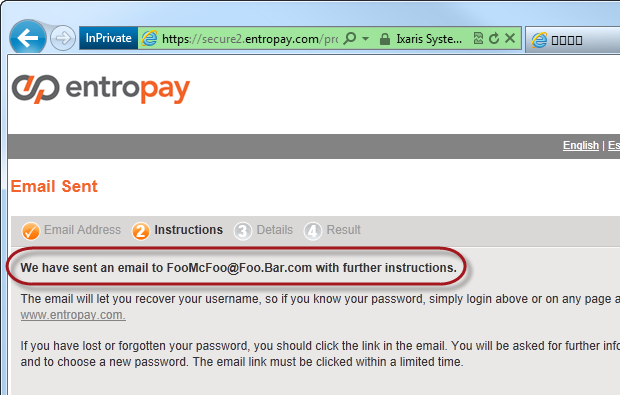

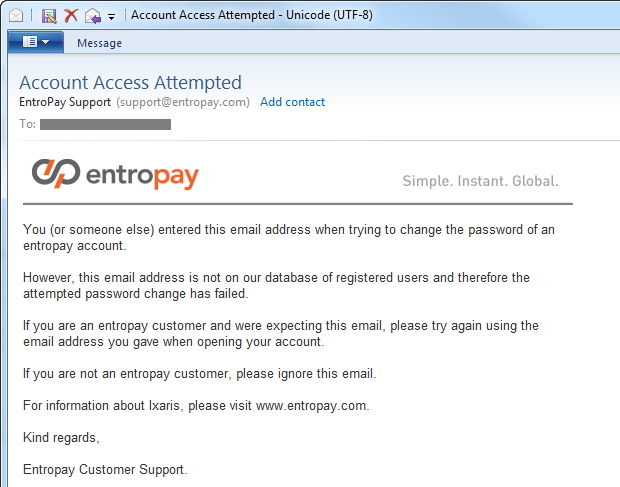

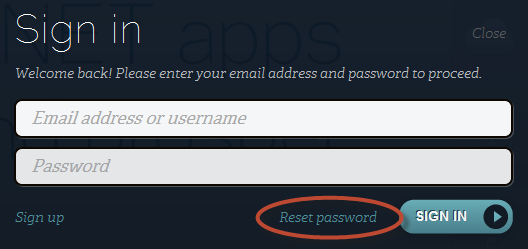

So what’s the alternative? Well it’s actually quite easy and Entropay executes it very well:

What Entropay have done here is disclosed absolutely nothing about the existence of the email address in their system to someone who doesn’t own that address. If you do own that address and it doesn’t exist in their system, you get a nice little email like this:

Of course there may be legitimate use cases where someone either thinks they registered at a website – but didn’t – or they did but with a different email address. The response above deals with both those scenarios very nicely. Obviously if the address was valid you’d get an email which would facilitate a password reset.

The thing about the approach taken by Entropay is that identity verification happens via email before any sort of online verification. One approach some sites take is to prompt the user with a secret question (more on this shortly) before the reset can begin but of course the problem with this is that you have to answer the question along with providing some form of identification (either email or username) which then makes it almost impossible to respond intuitively without disclosing the existence of the account to an anonymous user.

There is a slight usability tax to pay using this approach and it’s that there is no immediate feedback when an invalid account is attempted to be reset. Of course this is the whole reason why we’re sending an email in the first place but from a legitimate end user perspective, if they’ve entered an invalid address then the first they’re going to know about it is when the email arrives. This may cause some frustration on their behalf, but it’s a small trade-off for an infrequent process.
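The pattern described above can be sketched in a few lines. Everything named here (`Outbox`, `request_reset`, the message wording) is hypothetical and stands in for whatever mail and account lookup facilities your app already has; the only point being illustrated is that the web response is identical whether or not the account exists, while the email differs.

```python
from dataclasses import dataclass, field

# The same on-screen message is shown in every case.
GENERIC_RESPONSE = "If that address is valid, an email is on its way."


@dataclass
class Outbox:
    """Hypothetical stand-in for a mail sender; it just records messages."""
    sent: list = field(default_factory=list)

    def send(self, to: str, body: str) -> None:
        self.sent.append((to, body))


def request_reset(email: str, accounts: set, outbox: Outbox) -> str:
    address = email.strip().lower()
    if address in accounts:
        # A real implementation would embed a unique reset URL here.
        outbox.send(address, "Use this link to reset your password: <reset URL>")
    else:
        outbox.send(
            address,
            "Someone asked to reset a password for this address, "
            "but no account is registered with it.",
        )
    # Nothing about the account's existence is disclosed to the browser.
    return GENERIC_RESPONSE
```

Only the owner of the mailbox ever learns whether the address is registered.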

Just one more slightly tangential note on this while I’m here – log on facilities which disclose the validity of the username or email address have exactly the same problem. Always defer to the user with a “Your username and password combination is invalid” message as opposed to explicitly confirming the existence of an identity (i.e. your username was correct but your password was incorrect).

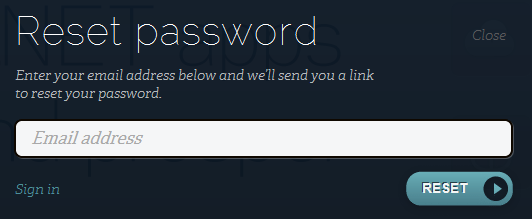

Sending a reset password versus sending a reset URL

The next concept we have to deal with relates to how the password is reset and there are two common approaches:

- Generate a new password on the server and email it

- Email a unique URL which will facilitate a reset process

Despite plenty of guidance to the contrary, the first point is really not where we want to be. The problem with doing this is that it means a persistent password – one you can go back with and use any time – has now been sent over an insecure channel and resides in your inbox. Chances are your inbox syncs to your mobile device(s) and possibly to your mail client plus it may reside online in your web-based mail service for who knows how long. The point is that your mailbox should not be considered a long term secure storage facility.

But there’s one more big problem with the first approach in that it makes the malicious lockout of an account dead simple. If I know the email address of someone who owns an account at a website then I can lock them out of it whenever I please simply by resetting their password; it’s a denial of service attack served up on a silver platter! This is why a reset is something that should only happen after successfully verifying the right of the requestor to do so.

When we talk about a reset URL, we’re talking about a website address which is unique to this specific instance of the reset process. Obviously it must be random and not something guessable nor should it contain any external references to the account for which it’s facilitating the reset. For example, a reset URL should not simply be a path such as “Reset/?username=JohnSmith”.

What we want to do is create a unique token which can be sent in an email as part of the reset URL then matched back to a record on the server alongside the user’s account thus confirming the email account owner is indeed the one attempting to reset the password. For example, the token may be “3ce7854015cd38c862cb9e14a1ae552b” and is stored in a table alongside the ID of the user performing the reset and the time at which the token was generated (more on that in a moment). When the email is sent out, it contains a URL such as “Reset/?id=3ce7854015cd38c862cb9e14a1ae552b” and when the user loads this, the page checks for the existence of the token and consequently confirms the identity of the user and allows the password to be changed.
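As a rough sketch of that token scheme, assuming Python's standard `secrets` module and an in-memory dictionary standing in for the database table (the function names and the `example.com` base URL are placeholders of mine):

```python
import secrets
import time

# Hypothetical stand-in for the reset table: token -> (user_id, created_at)
reset_tokens = {}


def create_reset_url(user_id: int, base_url: str = "https://example.com/Reset/") -> str:
    """Generate an unguessable token, record it and return the URL to email."""
    token = secrets.token_hex(16)  # 16 random bytes rendered as 32 hex characters
    reset_tokens[token] = (user_id, time.time())
    # The URL carries only the token, never the username or account ID.
    return f"{base_url}?id={token}"


def user_for_token(token: str):
    """Return the user ID the token was issued for, or None if it is unknown."""
    record = reset_tokens.get(token)
    return record[0] if record else None
```

The token comes from a cryptographically secure source rather than being derived from anything about the account, so knowing a username gives an attacker no help in guessing it.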

Now of course because the process above is going to (hopefully) give the user the ability to create a new password, we need to ensure that the URL is loaded over HTTPS. No, posting to HTTPS is not enough, that URL with the token must implement transport layer security so that the new password form cannot be MITM’d and the password the user creates is sent back over a secure connection.

The other thing we want to do with a reset URL is to time limit the token so that the reset process must be completed within a certain duration, say within an hour. This ensures that the window in which the reset can occur is kept to a minimum, so should anyone obtain the reset URL they can only action it within a very small window. Of course an attacker can always go and begin the reset process again but they’ll then need to obtain another unique reset URL.

Finally, we want to ensure that this is a one-time process. Once the reset process is complete, the token should be deleted so that the reset URL is no longer functional. As with the previous point, this is to ensure an attacker has a very limited window in which they can abuse the reset URL. Plus of course the token is no longer required if the reset process has completed successfully.
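Here is a minimal sketch of the expiry and one-time rules together, again using an in-memory dictionary as a hypothetical stand-in for the token table. The `now` parameter exists only so the behaviour can be demonstrated without waiting an hour, and `redeem_token` is assumed to be called at the point the new password is submitted.

```python
import secrets
import time
from typing import Optional

TOKEN_LIFETIME_SECONDS = 60 * 60  # the one-hour window suggested above

# Hypothetical stand-in for the token table: token -> (user_id, created_at)
tokens = {}


def issue_token(user_id: int, now: Optional[float] = None) -> str:
    token = secrets.token_hex(16)
    tokens[token] = (user_id, time.time() if now is None else now)
    return token


def redeem_token(token: str, now: Optional[float] = None) -> Optional[int]:
    """Return the user ID if the token is valid, deleting it either way."""
    record = tokens.pop(token, None)  # removal makes the reset URL single-use
    if record is None:
        return None
    user_id, created_at = record
    current = time.time() if now is None else now
    if current - created_at > TOKEN_LIFETIME_SECONDS:
        return None  # expired tokens are rejected and already removed
    return user_id
```

A second attempt with the same token fails simply because the record no longer exists, which is the behaviour we want from a one-time URL.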

Some of these steps may seem a little excessive, but they don’t detract at all from the usability of the feature and they do add to the security, albeit in circumstances we’d hope would be uncommon. In 99% of cases, the user is going to action the reset within a very short period and they’re not going to reset the password again in the immediate future.

The role of CAPTCHA

Ah CAPTCHA, the security measure we all love to hate! In fact CAPTCHA isn’t so much a security measure as it is an identification measure – are you a human or are you a robot (or an automated script, as it may be). The intention is to avoid the automated submission of forms which of course could be used as an attempt to breach security. In a password reset context, a CAPTCHA means the reset feature can’t be brute-forced either to spam an individual or to attempt to identify the existence of accounts (which of course won’t be possible if you’ve followed the guidance in the identity verification section earlier on).

Of course CAPTCHA itself is not perfect; there are numerous precedents of “breaking” it programmatically and achieving reasonable success rates in the range of 60-70%. Then you have the approach I demonstrated in my post about Breaking CAPTCHA with automated humans where you could pay humans a fraction of a cent to solve each CAPTCHA and get a 94% success rate. So it has faults, but it does (slightly) raise the barrier to entry.

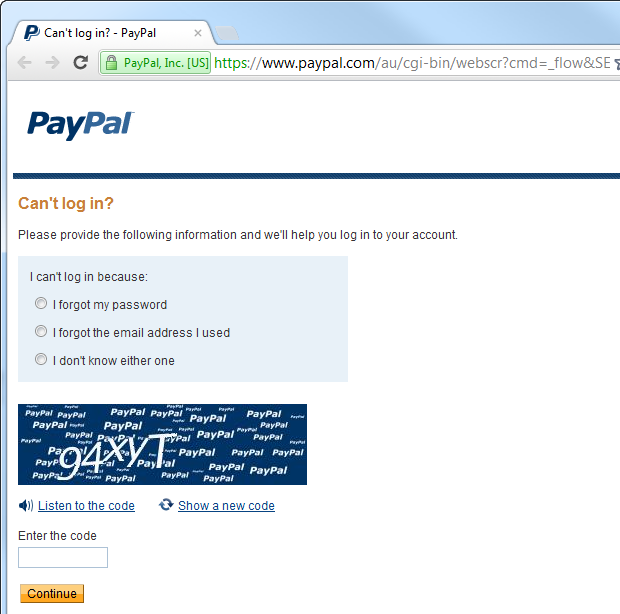

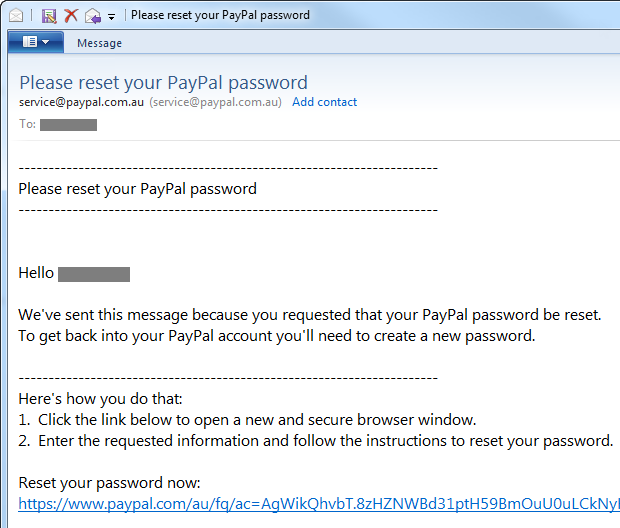

Let’s take a look at PayPal’s approach:

In this case, the reset process simply can’t begin until the CAPTCHA is solved so in theory, you can’t automate the process. In theory.

For most web applications though, this is going to be overkill and will definitely pose a usability overhead – people simply don’t like CAPTCHAs! A CAPTCHA is also the sort of thing you can retrofit later on if it’s required. If the service begins to get abused (this is where logging is important – more on that soon), dropping in a CAPTCHA is a piece of cake.

Secret questions and answers

With what we’ve looked at so far, we’ve been able to reset the password simply by having control of the email account. I say “simply”, but of course illegally gaining access to someone’s email account should be a hard thing. But it isn’t always.

Actually, that link above is about Sarah Palin having her Yahoo! account hacked and it serves a couple of purposes; firstly, it illustrates how easily (some) email accounts can be breached and secondly, it shows how poor secret questions can be abused – but we’ll come back to that one.

The problem with password resets which are 100% dependent on email is that the account integrity of the site you’re trying to reset the password on then becomes 100% dependent on the email account integrity. Whoever has access to your email now has access to any account that can be reset purely by receiving an email. For these accounts, your email is truly the skeleton key to your online life.

One way of mitigating this risk is to implement a secret question and answer pattern. You’ve no doubt seen this before; choose a question for which only you should know the answer then you may be prompted for this before you’re able to perform a password reset. It gives that bit of additional assurance that the person attempting to perform the reset is indeed the owner of the account.

Getting back to Sarah Palin, what went wrong here is that the answers to her secret question(s) were easily discoverable. Particularly once you have a highly public profile, information such as mother’s maiden name, education history or where someone might have lived in the past really isn’t that secret. In fact much of this can easily be discovered for almost anyone. And so it was with Sarah:

The hacker, David Kernell, had obtained access to Palin's account by looking up biographical details such as her high school and birthdate and using Yahoo!'s account recovery for forgotten passwords.

This is primarily a design flaw on Yahoo!’s part; by providing or allowing such basic questions they fundamentally undermined the value of the secret question and indeed undermined the security of their system. Of course password resets of an email account are always going to be trickier because you may well not be able to validate ownership by sending the account holder an email (short of having a secondary address on file), but fortunately there aren’t a lot of use-cases these days for building such a system.

Getting back to secret questions, one option is to allow the user to self-construct their own questions. The problem with this though is that you end up with either painfully obvious questions:

What colour is the sky?

Questions which can put people in an uncomfortable position when a human uses the secret question for verification (such as in a call centre):

Who did I sleep with at the Christmas party?

Or frankly stupid questions:

How do you spell “password”?

When it comes to secret questions, people need to be saved from themselves! In other words, the site itself should define the secret question, or rather define a series of secret questions from which the user can choose. And not just choose one either; ideally, the user should define two or more secret questions at the time of account registration which can then be used as a second channel of identity verification. Having multiple questions adds a higher degree of confidence to the verification process plus gives you opportunity to add randomness (not always show the same question) plus provides a bit of redundancy should someone legitimate forget an answer.

So what makes a good secret question? There are a few different factors:

- It should be concise – the question is to the point and unambiguous

- The answer is specific – you don’t want a question which could be answered in different ways by the same person

- The possible answers must be diverse – a question about someone’s favourite colour would result in a small subset of possible answers

- Answer discovery should be hard – if you can readily find the answer for anyone (think high-profile people) then it’s no good

- The answer must be constant over time – asking for someone’s favourite movie may result in a different answer a year from now

As it happens, there’s a website dedicated to good security questions which, unsurprisingly, is at GoodSecurityQuestions.com. Some of these seem quite good, others fail some of the tests above, particularly the “discovery” test.

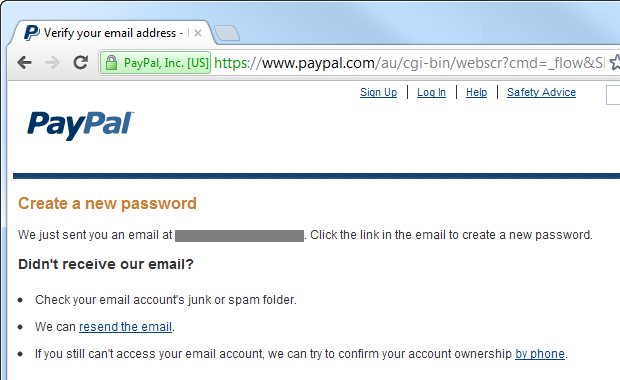

Let me walk you through how PayPal implements their secret questions and in particular, the extent they go to in order to verify identities. Earlier on we saw the page to begin the process (the one with the CAPTCHA), here’s what happens once you drop in an email address and solve the CAPTCHA:

Which results in an email like this:

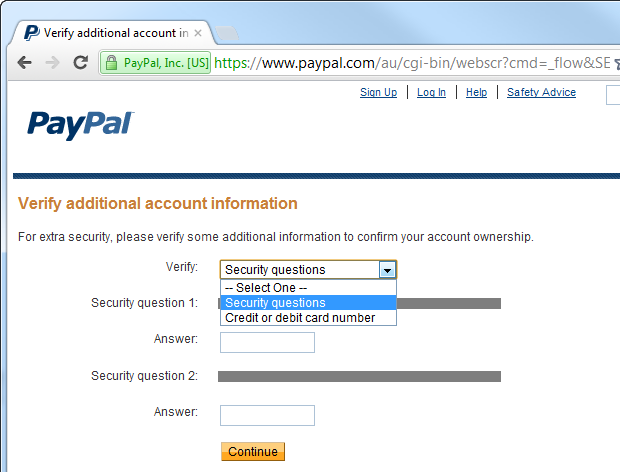

So far this is all very normal, but here’s what’s behind that reset URL:

Right, so now the secret questions come into play. Actually, PayPal also allows password reset by verifying a credit card number so there’s an additional channel there which many sites won’t have access to. I simply cannot change my password without answering both secret questions (or knowing the card number). Even if someone takes over my email account, they cannot reset the PayPal account unless they know some intimate information about me. What sort of information? Here are the secret question options PayPal gives you:

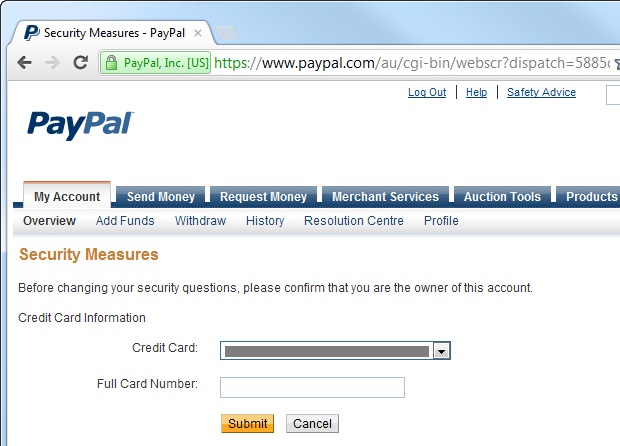

The question about the school and the hospital might be a bit dubious on the “discoverability” test but the others aren’t too bad. But to add to the security, PayPal requires further verification of identity to change secret question answers:

PayPal is a pretty utopian example of a secure password reset: they implement CAPTCHA to mitigate against brute force, require two secret questions and then require another form of identity verification altogether just to change the answers – and that’s after you’re already logged in. Of course we’d expect this from PayPal; they’re a financial institution and they handle lots of money. This doesn’t mean every password reset process should follow these steps – that’s overkill in most cases – but it’s a good reference point for when security is serious business.

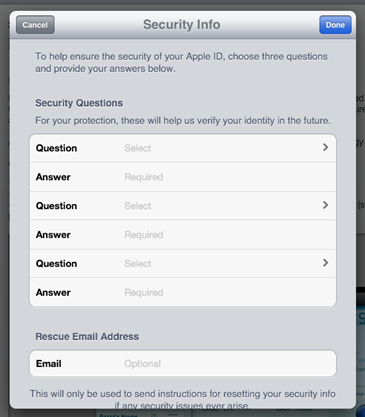

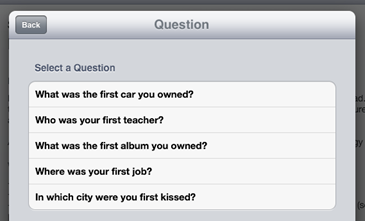

One nice thing about the secret question approach is that if you haven’t implemented it from day one, it can be a later addition if the risk profile of the asset being protected demands it. A good case in point is Apple who just recently rolled out this mechanism. When I went to update an app on iPad the other day, I was prompted with the following:

This then presented me with a screen to define several secret question and answer pairs and a rescue email:

As with PayPal, the questions are pre-determined and some of them are actually pretty good:

Each of the three question and answer pairs presents a different set of possible questions so there are quite a number of different ways an account can be configured.

The other thing to consider with the answer component of the secret question is storage. Sitting it in the DB in plain text poses similar risks to doing the same with the password, namely that a database disclosure will immediately reveal the value and not only put the app at risk but quite possibly other totally unrelated apps which depend on the same secret questions (it’s the Acai berry conundrum all over again). Secure hashing (a strong algorithm and cryptographically random salt) is an option, however unlike most password scenarios, there may be a legitimate reason to make the answer visible in plain text. A typical scenario is when a human operator is verifying an identity over the telephone. Now of course hashing is still feasible (the operator can simply enter the answer the customer provides), but at worst, the secret answer should have some level of cryptographic storage, even if it’s just symmetric encryption. Bottom line: treat secret answers as secret!
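If you go the hashing route for secret answers, a sketch might look like the following. The normalisation step (lower-casing and collapsing whitespace) is my own assumption, added because free-text answers are rarely typed the same way twice; PBKDF2 and the iteration count are likewise illustrative choices.

```python
import hashlib
import hmac
import os

ITERATIONS = 200_000  # assumed work factor, for illustration only


def normalise(answer: str) -> str:
    """Lower-case and collapse whitespace so trivial typing differences still match."""
    return " ".join(answer.lower().split())


def hash_answer(answer: str) -> tuple:
    """Return (salt, digest) for a secret answer."""
    salt = os.urandom(16)
    digest = hashlib.pbkdf2_hmac(
        "sha256", normalise(answer).encode("utf-8"), salt, ITERATIONS
    )
    return salt, digest


def check_answer(submitted: str, salt: bytes, stored: bytes) -> bool:
    """Hash what the customer (or the operator on their behalf) typed and compare."""
    candidate = hashlib.pbkdf2_hmac(
        "sha256", normalise(submitted).encode("utf-8"), salt, ITERATIONS
    )
    return hmac.compare_digest(candidate, stored)
```

In the call centre scenario the operator never sees the stored answer; they key in what the caller says and the system reports only whether it matched.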

Just one more thing on secret questions and answers – they’re more vulnerable to social engineering. Attempting to directly elicit an account’s password out of someone is one thing, striking up a conversation with them about their education history (a common secret question) is quite another. In fact you can quite legitimately have a discussion with someone about many aspects of their life which could constitute the secret question and not arouse suspicion. Of course the very intention of a secret question is that it relates to someone’s life experiences so that it is memorable and therein lies the problem – people like to talk about their life experiences! There’s not a lot you can do about that other than to ensure that the available secret questions are less likely to be the kind that could be socially engineered out of someone.

Two factor authentication

Everything you’ve read up until now has involved verifying an identity based on things the requestor knows. They know their email address, they know how to access their email (i.e. they know their email address password) and they know the answers to some secret questions. “Knowledge” – or something you know – is considered to be one factor of authentication; the other two common factors are something you have, such as a physical device, and something you are, such as your fingerprints or retina.

In most scenarios it’s a bit infeasible to perform biometric validation, particularly when we’re talking about web application security, so it’s usually the second attribute – something you have – which is used in two factor authentication (2FA). One common approach to this second factor is to use a physical token such as an RSA SecurID:

Common uses for a physical token include authenticating to corporate VPNs and financial services. The premise involves authenticating to a service using both a password and the code on the token (which rotates frequently) combined with a PIN. In theory, an attacker must know the password, have the token and also know the token PIN in order to identify themself. In a password reset scenario the password is obviously not known, but possession of the token can be used to verify the legitimacy of the account claim. Of course like any security implementation, it’s not fool proof, but certainly it raises the bar to entry.

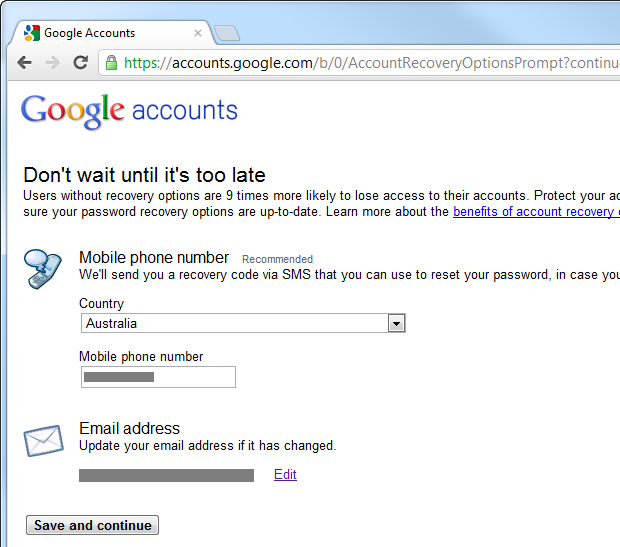

One of the main problems with this approach is the cost and logistics of implementation; we’re talking distributing physical devices to every customer and educating them about a new process. Then of course they actually need to have the device on them when they need it which isn’t always the case with a physical token. Another option is to implement the second factor of authentication using SMS which in a 2FA scenario can be used as validation that the person instrumenting the reset process actually has the mobile phone of the account holder. Here’s what Google does:

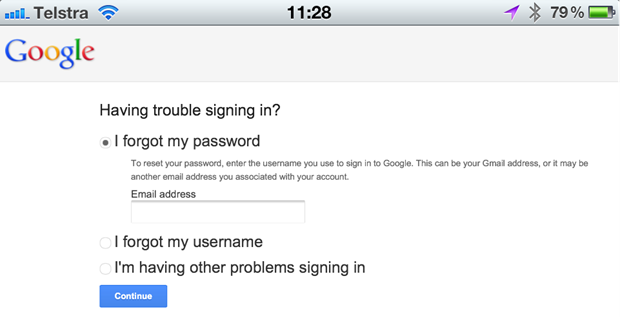

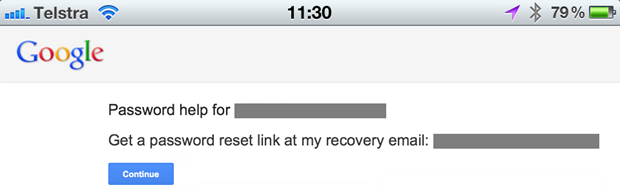

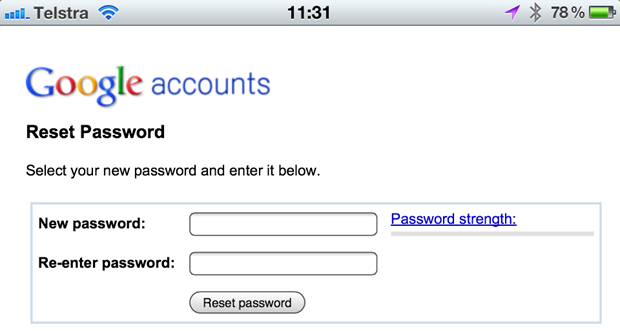

Now you also need to have 2-step verification enabled, but what this means is that the next time you need to reset your password, your mobile phone can become your second factor of authentication. Let me demonstrate how to initiate this via my iPhone, for reasons which will soon become apparent:

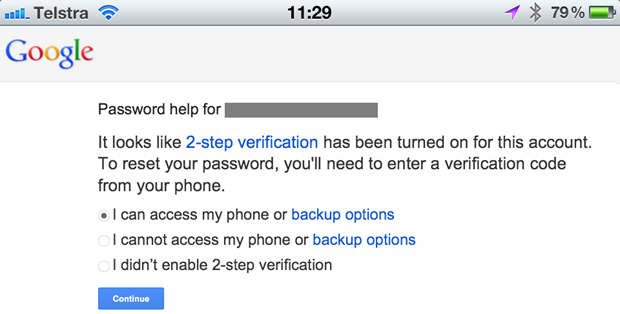

After identifying the email address of the account, Google recognises that 2FA has been enabled and we’re able to reset the account via verification that can be SMS’d to the account holder’s mobile phone:

We now need to elect to begin the reset process:

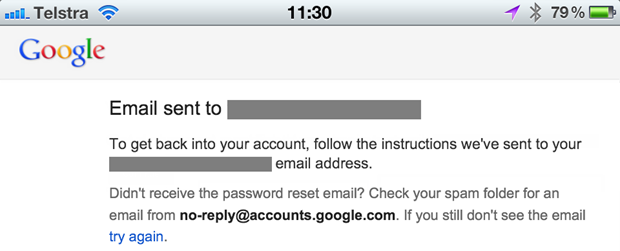

This sends an email off to the registered address:

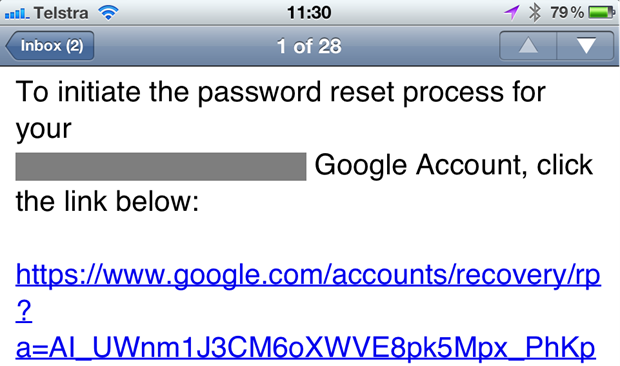

The email then contains a reset URL:

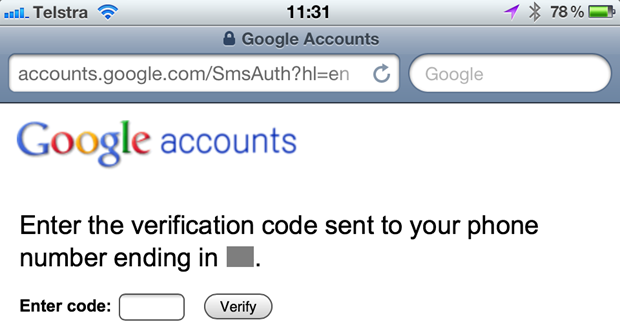

When the reset URL is accessed, the SMS is sent and the website prompts for it to be entered:

Here’s that SMS:

After it’s entered into the browser, we’re back into classic password reset territory:

This might seem a little verbose – and it is (I think that 3rd iPhone screen could go) – but it does validate that the person conducting the reset has access to both the email address and the account holder’s mobile phone. This could well be 9 times more secure than an email only channel for password resets, but there’s a problem…

The problem has to do with smart phones. The device below can verify only one factor of authentication – it can receive an SMS but not an email:

However this device can receive an SMS and receive a reset email:

The problem is that when we view email as the first factor of authentication and then view either SMS (or even an app generating tokens) as the second, these days that’s all bundled up into the one device. Of course what this means is that if someone gets their hands on your smartphone then all that convenience suddenly means you’re back to one channel; that second factor of “something you have” means you also have the first factor. And all of that’s behind a single 4 digit PIN – if the phone even has a PIN in the first place and has been locked.

Yes, 2FA as Google has implemented it certainly provides additional security, but it’s not fool proof and it’s certainly not dependent on two entirely autonomous channels.
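For what it's worth, the SMS step of a flow like this can be sketched as a short-lived numeric code. This is not how Google implements it; the six-digit length, the ten-minute lifetime, the single permitted attempt and the function names are all assumptions of mine, and a real system would hand the code to an SMS gateway rather than return it.

```python
import hmac
import secrets
import time
from typing import Optional

CODE_LIFETIME_SECONDS = 10 * 60  # assumed validity window

# Hypothetical in-memory store: phone number -> (code, issued_at)
pending_codes = {}


def issue_sms_code(phone: str, now: Optional[float] = None) -> str:
    code = f"{secrets.randbelow(10**6):06d}"  # six digits, zero padded
    pending_codes[phone] = (code, time.time() if now is None else now)
    return code  # returned here only so the sketch can be exercised


def verify_sms_code(phone: str, submitted: str, now: Optional[float] = None) -> bool:
    record = pending_codes.pop(phone, None)  # one attempt per issued code
    if record is None:
        return False
    code, issued_at = record
    current = time.time() if now is None else now
    if current - issued_at > CODE_LIFETIME_SECONDS:
        return False
    return hmac.compare_digest(code.encode("utf-8"), submitted.encode("utf-8"))
```

Note that none of this addresses the smartphone problem above: the code still arrives on the same device that receives the reset email.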

Resetting via username versus resetting via email address

Should you allow a reset only via email address? Or should you be able to reset via username too? The problem with resetting via username is that there’s no way to notify the user if the username was invalid that doesn’t disclose the fact that someone else may have an account with that name. In the previous section, a reset via email ensured the legitimate owner of that email could always receive feedback without disclosing its existence in the system publicly. You can’t do that with just a username.

So the short answer is: email only. If you’re trying to do it with username then you’re going to have cases where the user is left wondering what’s going on or you’re disclosing the existence of accounts. Yes, it’s only a username and not an email address and yes, anybody can choose any (available) username they’d like but there’s still a good chance you’re going to implicitly disclose account holders due to the propensity of username reuse.

So what happens if someone forgets their username? Assuming the username isn’t already the email address (which is often the case), then the process is similar to how a password reset begins – enter the email address then send a message to that address without disclosing its existence. The only difference is that this time around, the message simply contains the username rather than a password reset URL. Either that or the email explains that there is no account on file for that address.

Identity verification and email address accuracy

A key aspect of password resets – arguably the key aspect – is verifying the identity of the person attempting to perform the reset. Is this indeed the legitimate owner of the account? Or someone attempting to either break into it or inconvenience the owner?

Email is clearly the most convenient, most ubiquitous channel for verifying an identity. It’s not fool proof and there are many cases where simply being able to receive emails at the account holder’s address is not sufficient if a high degree of identity confidence is required (hence the use of 2FA), but it’s almost always the starting point of a reset process.

One thing that’s critical if email is going to play a role is confidence that the email address is actually correct to begin with. If someone has a character wrong then clearly resets aren’t going to get through. An email verification process at the point of registration is a sure way of ensuring the address is correct. We’ve all seen this in practice; you register, an email is sent to you with a unique URL you need to click through to which therefore verifies you are indeed the holder of that email account. Not being able to log on until this process is complete ensures there is motivation to validate the email address.
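The registration-time verification described above follows the same token pattern as the reset URL. A minimal sketch, with in-memory dictionaries and a placeholder `example.com` URL standing in for real storage and routing (all names here are hypothetical):

```python
import secrets

accounts = {}       # email -> {"verified": bool}
verify_tokens = {}  # token -> email


def register(email: str) -> str:
    """Create an unverified account and return the URL to email to the user."""
    accounts[email] = {"verified": False}
    token = secrets.token_hex(16)
    verify_tokens[token] = email
    return f"https://example.com/Verify/?id={token}"


def confirm(token: str) -> bool:
    """Mark the account as verified when the emailed URL is loaded."""
    email = verify_tokens.pop(token, None)  # each verification URL works once
    if email is None:
        return False
    accounts[email]["verified"] = True
    return True


def can_log_on(email: str) -> bool:
    """Block log on until the address has been proven to receive mail."""
    account = accounts.get(email)
    return bool(account and account["verified"])
```

Because log on is refused until `confirm` succeeds, a mistyped address is discovered at registration rather than months later when a reset email fails to arrive.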

As with many aspects of security, this model imposes a usability overhead in exchange for giving us a greater degree of security in terms of confidence in the user’s identity. This might be fine for a site where the user places a high value on being able to successfully register and is happy to add one more step to the process (paid services, banking, etc.) but it’s the sort of thing they may well just walk away from if they perceive the account as being a “throwaway” such as simply a means of commenting on a post.

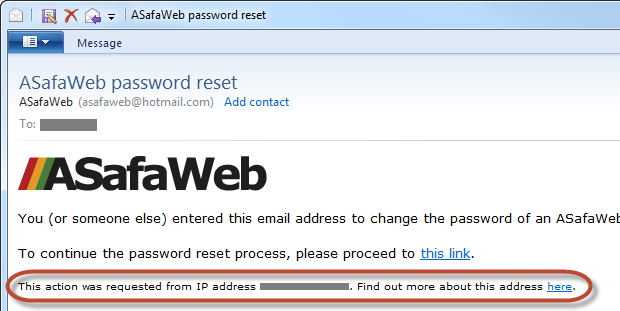

Identifying who initiated the reset process

Clearly there is scope for abusing the password reset feature and evildoers can do so in a number of different ways. One very easy trick we can use to help verify the source of the request – one which usually works – is to attach the IP address of the requestor to the reset email. What this does is equip the recipient with some information to identify the source of the request.

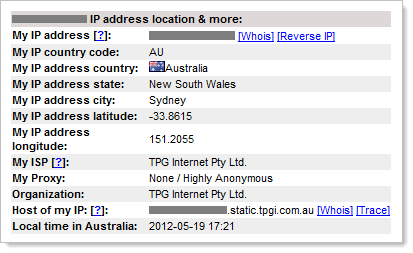

Here’s an example from the reset feature I’m presently building into ASafaWeb:

That “find out more” link takes you off to ip-adress.com which will give you things like the location and organisation of the requestor:

Now of course anyone wanting to hide their identity has numerous ways of obfuscating their real IP address, but this is a neat little way to put some form of identity to the requestor and in most cases, it will give you a good idea of who was behind the reset request.
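As a rough illustration of attaching the requestor's IP address to the reset email, here is a small Python sketch. The function name, the wording of the email and the `ip-lookup.example` lookup URL are all placeholders of mine, not what ASafaWeb actually sends.

```python
import ipaddress


def build_reset_email(reset_url, requester_ip):
    """Build the body of a reset email that names the requestor's IP address."""
    # ip_address() raises ValueError for anything that isn't a well-formed
    # IPv4 or IPv6 address, so junk can't end up in the email body.
    ip = ipaddress.ip_address(requester_ip)

    # Placeholder lookup service; swap in whichever one you link to.
    lookup_url = f"https://ip-lookup.example/{ip}"

    return (
        "A password reset was requested for your account.\n"
        f"Reset link: {reset_url}\n"
        f"The request came from IP address {ip}.\n"
        f"Find out more about this address: {lookup_url}\n"
    )
```

Validating the address before it goes into the message is a design choice of this sketch: where the IP comes from (connection versus a proxy header) depends on your hosting setup, so it's worth treating it as untrusted input.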

Notifying a change via email

One theme which has pervaded this post is communication; tell the account holder as much as possible about what is going on at each step in the process without disclosing anything which could be used for nefarious purposes. It’s the same thing once the password has actually been changed – let the owner know!

A change of password can come from one of two different sources:

- Changing the password while already logged on because the owner wants something different

- Resetting the password while logged off because the owner has forgotten it

Whilst this is a post primarily about resets, a notification in the first example above mitigates the risk of someone else changing the password without the legitimate owner’s knowledge. How could this happen? A very common scenario is that someone else has obtained the legitimate owner’s password (reused one breached from another location, key logged, easily guessable, etc.) and has decided to change it and lock them out. Without an email notification, the real owner has no idea of the change.

Now of course in the reset scenario the owner must have already initiated the process (or defeated the various identity verification measures outlined above) so the change shouldn’t come as a surprise to them, but email notification is positive feedback and additional verification. Besides, it makes for a consistent experience in both of the scenarios above.
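A notification covering both scenarios might look something like the following Python sketch. The function name, the `SOURCES` wording and the assumption that timestamps are in UTC are mine. Note the function has no password parameter at all, so there is nothing to leak into the email.

```python
from datetime import datetime, timezone

# The two sources of a password change described above.
SOURCES = {
    "change": "changed while logged on",
    "reset": "reset via the forgotten password process",
}


def build_change_notification(username, source, when=None):
    """Build the email body telling the owner their password has changed."""
    if source not in SOURCES:
        raise ValueError(f"unknown source: {source!r}")
    # Assumes `when`, if supplied, is already a UTC datetime.
    when = when or datetime.now(timezone.utc)
    return (
        f"Hi {username},\n"
        f"The password on your account was {SOURCES[source]} "
        f"at {when:%Y-%m-%d %H:%M} UTC.\n"
        "If this wasn't you, please get in touch straight away."
    )
```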

Oh, and in case it’s not already obvious, don’t email them the new password! Some of you may laugh, but it happens:

Log, log and then log some more

The thing about a password reset feature is that it’s ripe for abuse, either by an attacker wanting to gain access to an account or by someone just wanting to cause mischief and inconvenience for the account holder or system owner. Many of the practices discussed above will help mitigate abuse, but they won’t stop it and they certainly won’t stop people from attempting to misuse the feature.

One practice that can be absolutely invaluable for detecting malicious behaviour is logging and I mean really extensive logging. Log failed log on attempts, log password resets, log password changes (i.e. while the user is already logged on) and basically log anything you can that will help you identify what’s going on should you really need it in the future. Even log individual parts of the process, for example a good reset feature will involve initiating the reset via the website (log the request and log attempts to reset with an invalid username or email), log the visit to the website with the reset URL (including attempts to use an invalid token) then log the success or failure of the secret question’s answer.

Now when I say logging, you don’t just want a record of the fact the page was loaded, you want to collect as much info as you can so long as it’s not sensitive. People, please don’t log the password! What you do want to log is the identity of the authenticated user (they’ll be authenticated if they’re changing an existing password or if they’re attempting to reset someone else’s while logged in), any attempted usernames or email addresses plus any reset tokens they attempted to use. But you also want to log things like IP address and if possible, even request headers. This allows you to reconstruct not just what the person (or attacker) was attempting to do, but who they were.
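Here is one way the kind of audit record described above could be put together in Python using the standard `logging` and `json` modules. The event names, the `audit_record` helper and the lists of fields and headers to drop are assumptions for the sake of the example; adjust them to whatever your app treats as sensitive.

```python
import json
import logging

logger = logging.getLogger("account.audit")

# Assumed names of fields that must never reach the log.
SENSITIVE_KEYS = {"password", "new_password", "secret_answer"}
# Headers that carry credentials are dropped as well (an extra precaution).
SENSITIVE_HEADERS = {"cookie", "authorization"}


def audit_record(event, ip, headers, user=None, **details):
    """Log one account-related event and return the record that was written."""
    safe_details = {
        k: v for k, v in details.items() if k.lower() not in SENSITIVE_KEYS
    }
    safe_headers = {
        k: v for k, v in headers.items() if k.lower() not in SENSITIVE_HEADERS
    }
    record = {
        "event": event,           # e.g. "reset_requested", "invalid_token"
        "user": user,             # authenticated user, if there is one
        "ip": ip,
        "headers": safe_headers,
        "details": safe_details,  # attempted usernames, emails, tokens
    }
    logger.info(json.dumps(record, sort_keys=True))
    return record
```

A call such as `audit_record("reset_requested", ip, headers, attempted_email=email)` at each step of the process gives you something to reconstruct events from later.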

Delegating responsibility to other providers

If this all just seems like a lot of hard work, you’re not alone in your thinking. The reality is that building a secure account management facility isn’t simple. It’s not that it’s technically hard, it’s just that there are a lot of nuts and bolts involved. It’s not just resets, there’s the whole registration process, secure password storage, handling multiple invalid login attempts and so on and so forth. Whilst I advocate using pre-built functionality such as the ASP.NET membership provider, there’s still a lot of work to be done.

These days there are numerous third-party providers who are happy to take away the pain of writing all this yourself and abstract it into a managed service. The options include OpenID, OAuth and even Facebook, among others. Some people swear by this model (indeed OpenID has proven to be very successful on Stack Overflow), but then others literally find it a nightmare.

Undoubtedly, a service like OpenID takes a number of problems away from the developer but also undoubtedly, it introduces all new ones. Does it have a role to play? Yes, but clearly we’re not seeing authentication providers adopted en masse. Banks, airlines, even shopping – I can’t think of a single one which doesn’t implement their own authentication mechanism and there are clearly some very good reasons for that.

Malicious resets

One thing about each of the examples above is that the old password is only rendered useless after the account owner’s identity has been verified. This is very important because if the account could be reset before verifying identity, the door would be open to all sorts of malicious activity.

Here’s an example: someone is bidding at an auction site and towards the end of the bidding process they lock out competing bidders by initiating the reset process thus removing their competition. Clearly there can be major adverse results if a poorly designed reset feature can be abused. Mind you, account lockouts caused by invalid login attempts are a similar story, but that’s one for another post.

As I mentioned earlier, allowing anonymous users the ability to reset anyone’s account simply by knowing their email address is a denial of service attack just waiting to happen. It may not be a DoS in the way we often think of it, but there’s no faster way to lock someone out of their account than through a poorly designed password reset feature.
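The rule that the old password stays valid until identity has been verified can be shown with a small Python sketch. The `Account` class, its method names and the PBKDF2 parameters are illustrative assumptions, not a recommendation for how to store passwords in production.

```python
import hashlib
import secrets


class Account:
    """Toy account showing that requesting a reset changes nothing by itself."""

    def __init__(self, password):
        self.salt = secrets.token_bytes(16)
        self.password_hash = self._hash(password)
        self.reset_token = None

    def _hash(self, password):
        return hashlib.pbkdf2_hmac("sha256", password.encode(), self.salt, 100_000)

    def check_password(self, password):
        return secrets.compare_digest(self.password_hash, self._hash(password))

    def request_reset(self):
        # Anyone can trigger this, so it only issues a token.
        # The existing password is left untouched.
        self.reset_token = secrets.token_urlsafe(32)
        return self.reset_token

    def complete_reset(self, token, new_password):
        # Possession of the emailed token stands in for identity verification.
        if self.reset_token is None or not secrets.compare_digest(
            self.reset_token.encode(), token.encode()
        ):
            return False
        self.password_hash = self._hash(new_password)
        self.reset_token = None  # single use
        return True
```

In the auction example, a rival calling `request_reset` on a competing bidder's account achieves nothing: the bidder can still log on with their existing password and the unused token can simply be left to expire.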

The weakest link

All of what you’ve read above is fantastic in terms of securing a single account, but one thing you need to remain conscious of is the ecosystem around the account you’re securing. Let me give you an example:

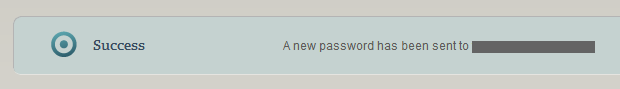

ASafaWeb is hosted on the very excellent service provided by AppHarbor. The reset process for their hosting account goes like this:

Step 1:

Step 2:

Step 3:

Step 4:

After reading all the earlier info in this post it’s easy to see there are a few areas which, in a perfect world, we’d approach a bit differently. The point I want to make here though is that if I publish a site such as ASafaWeb onto the AppHarbor service then implement some great secret questions and answers, throw in a second factor of authentication and do everything else by the book, none of this will change the fact that the weakest link in the process can trump all of this. After all, if someone can successfully authenticate to AppHarbor using my credentials then they can go and reset every single ASafaWeb account to whatever password they like anyway!

The point is that the strength of the security implementation needs to be looked at holistically; you need to threat model each and every entry point in the system, even if it’s just a cursory process such as what I did above with AppHarbor. This is enough to give me a good indication of how much effort I should be investing in the ASafaWeb password reset process.

Tying it all together

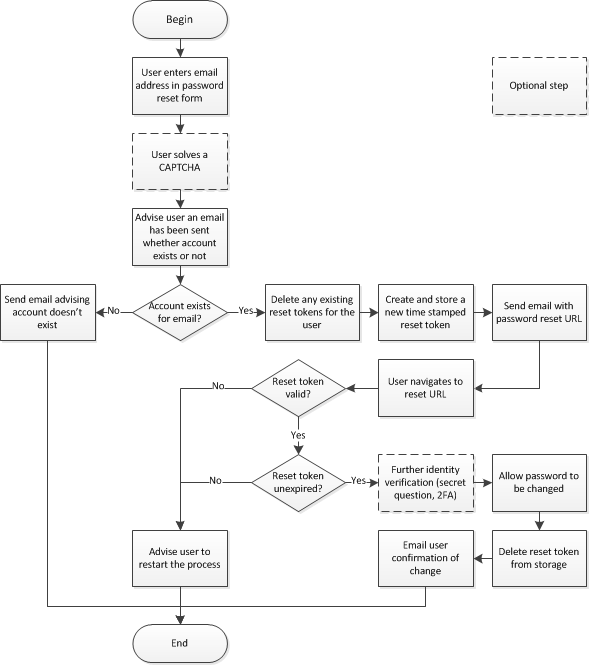

This post contains a lot of information to absorb so let me distil it down to a simple visual representation:

Keep in mind also that you want to be logging the activity at as many of these points as possible. And that’s it – easy!

Summary

If this seems like a comprehensive post, consider that there’s plenty of additional material I could have included but elected not to for the sake of brevity; the role of a rescue email address, what happens if you lose access to the email on the account (e.g. you change jobs) and so on and so forth. As I said earlier, it’s not that resets are difficult, it’s just there are a lot of angles to them.

Even though resets aren’t difficult, they’re often implemented poorly. We saw a couple of examples above where the implementation could lead to problems and there are many more precedents where resets gone wrong did cause problems. Just the other day it seems that a reset was abused to steal $87k worth of Bitcoins. That’s a serious adverse result!

So take care with your resets, threat model the various touch points and keep your black hat on while building the feature because if you don’t, there’s a good chance that someone else will!