As we begin to look at the final few entries in the Top 10, we’re getting into the less prevalent web application security risks, but in no way does that diminish the potential impact that can be had. In fact what makes this particular risk so dangerous is that not only can it be used to very, very easily exploit an application, it can be done so by someone with no application security competency – it’s simply about accessing a URL they shouldn’t be.

On the positive side, this is also a fundamentally easy exploit to defend against. ASP.NET provides both simple and efficient mechanisms to authenticate users and authorise access to content. In fact the framework wraps this up very neatly within the provider model which makes securing applications an absolute breeze.

Still, this particular risk remains prevalent enough to warrant inclusion in the Top 10 and certainly I see it in the wild frequently enough to be concerned about it. The emergence of resources beyond typical webpages in particular (RESTful services are a good example) adds a whole new dynamic to this risk altogether. Fortunately it’s not a hard risk to prevent; it just needs a little forethought.

Defining failure to restrict URL access

This risk is really just as simple as it sounds; someone is able to access a resource they shouldn’t because the appropriate access controls don’t exist. The resource is often an administrative component of the application but it could just as easily be any other resource which should be secured – but isn’t.

OWASP summarises the risk quite simply:

Many web applications check URL access rights before rendering protected links and buttons. However, applications need to perform similar access control checks each time these pages are accessed, or attackers will be able to forge URLs to access these hidden pages anyway.

They focus on entry points such as links and buttons being secured at the exclusion of proper access controls on the target resources, but it can be even simpler than that. Take a look at the vulnerability and impact and you start to get an idea of how basic this really is:

| Threat Agents | Attack Vectors | Security Weakness | Technical Impacts | Business Impact |
| --- | --- | --- | --- | --- |
| | Exploitability: EASY | Prevalence: UNCOMMON; Detectability: AVERAGE | Impact: MODERATE | |
| Anyone with network access can send your application a request. Could anonymous users access a private page or regular users a privileged page? | Attacker, who is an authorised system user, simply changes the URL to a privileged page. Is access granted? Anonymous users could access private pages that aren’t protected. | Applications are not always protecting page requests properly. Sometimes, URL protection is managed via configuration, and the system is misconfigured. Sometimes, developers must include the proper code checks, and they forget. Detecting such flaws is easy. The hardest part is identifying which pages (URLs) exist to attack. | Such flaws allow attackers to access unauthorised functionality. Administrative functions are key targets for this type of attack. | Consider the business value of the exposed functions and the data they process. |

So if all this is so basic, what’s the problem? Well, it’s also easy to get wrong either by oversight, neglect or some more obscure implementations which don’t consider all the possible attack vectors. Let’s take a look at unrestricted URLs in action.

Anatomy of an unrestricted URL attack

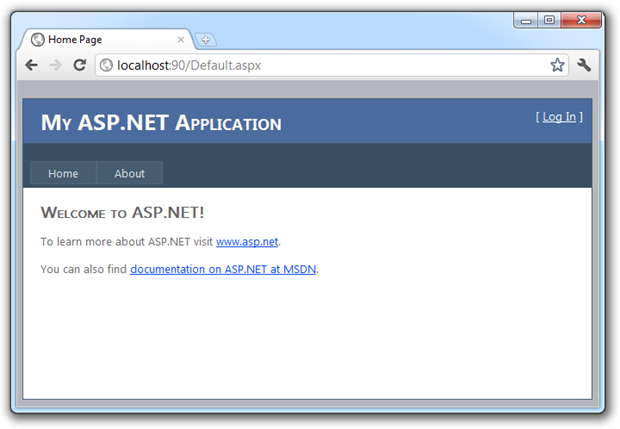

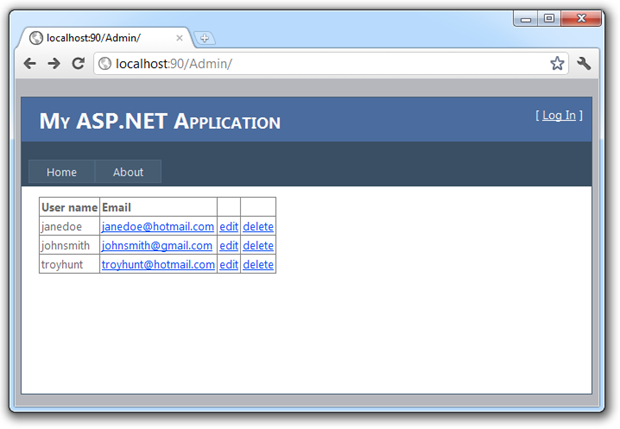

Let’s take a very typical scenario: I have an application that has an administrative component which allows authorised parties to manage the users of the site, which in this example means editing and deleting their records. When I browse to the website I see a typical ASP.NET Web Application:

I’m not logged in at this stage so I get the “[ Log In ]” prompt in the top right of the screen. You’ll also see I’ve got “Home” and “About” links in the navigation and nothing more at this stage. Let’s now log in:

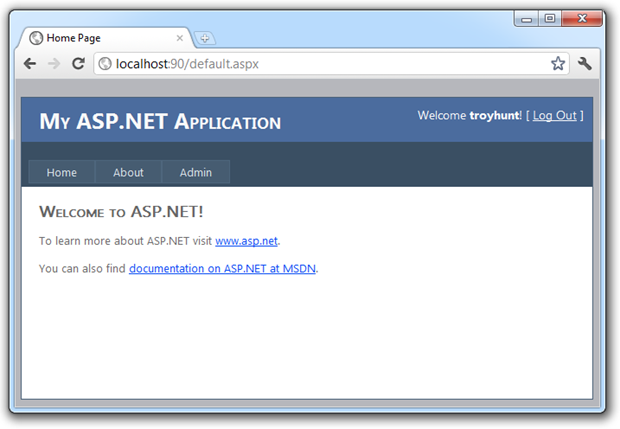

Right, so now my username – troyhunt – appears in the top right and you’ll notice I have an “Admin” link in the navigation. Let’s take a look at the page behind this:

All of this is very typical and from an end user perspective, it behaves as expected. From the code angle, it’s a very simple little bit of syntax in the master page:

    if (Page.User.Identity.Name == "troyhunt")
    {
        NavigationMenu.Items.Add(new MenuItem
        {
            Text = "Admin",
            NavigateUrl = "~/Admin"
        });
    }

The most important part in the context of this example is that I couldn’t access the link to the admin page until I’d successfully authenticated. Now let’s log out:

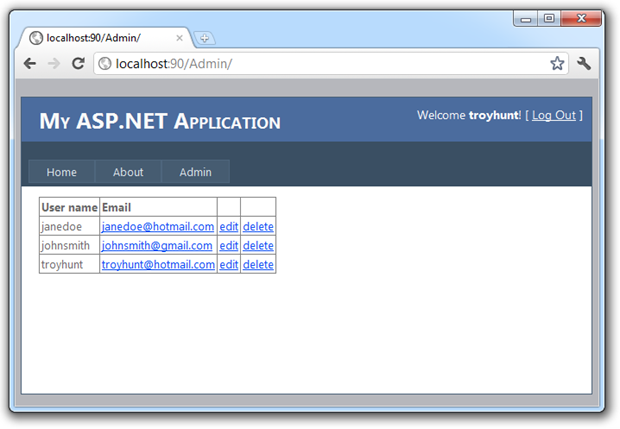

Here’s the sting in the tail – let’s now return to the URL of the admin page by typing it into the address bar:

Now what we see is that firstly, I’m not logged in because we’re back to the “[ Log In ]” text in the top right. We’ve also lost the “Admin” link in the navigation bar. But of course the real problem is that we’ve still been able to load up the admin page complete with user accounts and activities we certainly wouldn’t want to expose to unauthorised users.

Bingo. Unrestricted URL successfully accessed.

What made this possible?

It’s probably quite obvious now, but the admin page itself simply wasn’t restricted. Yes, the link was hidden when I wasn’t authenticated – and this in and of itself is fine – but there were no access controls wrapped around the admin page and this is where the heart of the vulnerability lies.

In this example, the presence of an “/Admin” path is quite predictable and there are countless numbers of websites out there that will return a result based on this pattern. But it doesn’t really matter what the URL pattern is – if it’s not meant to be an open URL then it needs access controls. The practice of not securing an individual URL because of an unusual or unpredictable pattern is often referred to as security through obscurity and is most definitely considered a security anti-pattern.

Employing authorisation and security trimming with the membership provider

Back in the previous Top 10 risk about insecure cryptographic storage, I talked about the ability of the ASP.NET membership provider to implement proper hashing and salting as well as playing nicely with a number of webform controls. Another thing the membership provider does is make it really, really easy to implement proper access controls.

Right out of the box, a brand new ASP.NET Web Application is already configured to work with the membership provider, it just needs a database to connect to and an appropriate connection string (the former is easily configured by running “aspnet_regsql” from the Visual Studio command prompt). Once we have this we can start using authorisation permissions configured directly in the <configuration> node of the Web.config. For example:

    <location path="Admin">
      <system.web>
        <authorization>
          <allow users="troyhunt" />
          <deny users="*" />
        </authorization>
      </system.web>
    </location>

So without a line of actual code (we’ll classify the above as “configuration” rather than code), we’ve now secured the admin directory to me and me alone. But this now means we’ve got two definitions of securing the admin directory to my identity: the one we created just now and the earlier one intended to show the navigation link. This is where ASP.NET site-map security trimming comes into play.

For this to work we need a Web.sitemap file in the project which defines the site structure. What we’ll do is move over the menu items currently defined in the master page and drop each one into the sitemap so it looks as follows:

    <?xml version="1.0" encoding="utf-8" ?>
    <siteMap xmlns="http://schemas.microsoft.com/AspNet/SiteMap-File-1.0" >
      <siteMapNode roles="*">
        <siteMapNode url="~/Default.aspx" title="Home" />
        <siteMapNode url="~/About.aspx" title="About" />
        <siteMapNode url="~/Admin/Default.aspx" title="Admin" />
      </siteMapNode>
    </siteMap>

After this we’ll also need a site-map entry in the Web.config under system.web which will enable security trimming:

    <siteMap enabled="true">
      <providers>
        <clear/>
        <add siteMapFile="Web.sitemap" name="AspNetXmlSiteMapProvider"
             type="System.Web.XmlSiteMapProvider" securityTrimmingEnabled="true"/>
      </providers>
    </siteMap>

Finally, we configure the master page to populate the menu from the Web.sitemap file using a sitemap data source:

    <asp:Menu ID="NavigationMenu" runat="server" CssClass="menu"
              EnableViewState="false" IncludeStyleBlock="false" Orientation="Horizontal"
              DataSourceID="MenuDataSource" />
    <asp:SiteMapDataSource ID="MenuDataSource" runat="server"
                           ShowStartingNode="false" />

What this all means is that the navigation will inherit the authorisation settings in the Web.config and trim the menu items accordingly. Because this mechanism also secures the individual resources from any direct requests, we’ve just locked everything down tightly without a line of code and it’s all defined in one central location. Nice!

Leverage roles in preference to individual user permissions

One thing OWASP talks about in this particular risk is the use of role based authorisation. Whilst technically the approach we implemented above is sound, it can be a bit clunky to work with, particularly as additional users are added. What we really want to do is manage permissions at the role level, define this within our configuration where it can remain fairly stable and then manage the role membership in a more dynamic location such as the database. It’s the same sort of thing your system administrators do in an Active Directory environment with groups.

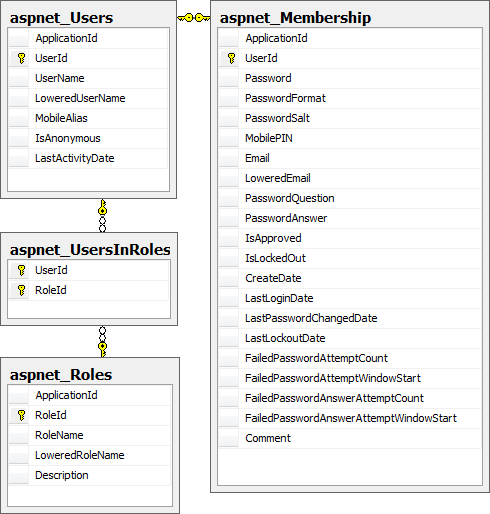

Fortunately this is very straightforward with the membership provider. Let’s take a look at the underlying data structure:

All we need to do to take advantage of this is to enable the role manager which is already in our project:

<roleManager enabled="true">

Now, we could easily just insert the new role into the aspnet_Roles table then add a mapping entry against my account into aspnet_UsersInRoles with some simple INSERT scripts, but the membership provider actually gives you stored procedures to take care of this:

    EXEC dbo.aspnet_Roles_CreateRole '/', 'Admin'
    GO
    DECLARE @CurrentDate DATETIME = GETUTCDATE()
    EXEC dbo.aspnet_UsersInRoles_AddUsersToRoles '/', 'troyhunt', 'Admin', @CurrentDate
    GO

Even better still, because we’ve enabled the role manager we can do this directly from the app via the role management API which will in turn call the stored procedures above:

    Roles.CreateRole("Admin");
    Roles.AddUserToRole("troyhunt", "Admin");

The great thing about this approach is that it makes it really, really easy to hook into from a simple UI. In particular, managing users in roles is something you’d normally expose through a user interface, and the methods above allow you to avoid writing all the data access plumbing and just leverage the native functionality. Take a look through the Roles class and you’ll quickly see the power behind this.

The last step is to replace the original authorisation setting using my username with a role based assignment instead:

    <location path="Admin">
      <system.web>
        <authorization>
          <allow roles="Admin" />
          <deny users="*" />
        </authorization>
      </system.web>
    </location>

And that’s it! What I really like about this approach is that it’s using all the good work that already exists in the framework – we’re not reinventing the wheel. It also means that by leveraging all the bits that Microsoft has already given us, it’s easy to stand up an app with robust authentication and flexible, configurable authorisation in literally minutes. In fact I can get an entire website up and running with a full security model in less time than it takes me to go and grab a coffee. Nice!
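Where a check does have to live in code (the hand-written menu test from earlier, say), the same role can be queried through the principal rather than by comparing usernames. The following is a minimal sketch only, assuming a console harness in which `GenericPrincipal` stands in for the principal ASP.NET would normally supply via `Page.User`; the `NavigationSketch` class, the `ShouldShowAdminLink` helper and the "someoneelse" account are hypothetical names used for illustration:

```csharp
using System;
using System.Security.Principal;

public static class NavigationSketch
{
    // Hypothetical helper: decide whether to render the "Admin" link by
    // asking the principal about its roles, not by comparing usernames.
    public static bool ShouldShowAdminLink(IPrincipal user)
    {
        return user != null
            && user.Identity.IsAuthenticated
            && user.IsInRole("Admin");
    }

    public static void Main()
    {
        // Stand-ins for the principal ASP.NET would place on Page.User.
        IPrincipal admin = new GenericPrincipal(
            new GenericIdentity("troyhunt"), new[] { "Admin" });
        IPrincipal member = new GenericPrincipal(
            new GenericIdentity("someoneelse"), new string[0]);

        Console.WriteLine(ShouldShowAdminLink(admin));
        Console.WriteLine(ShouldShowAdminLink(member));
    }
}
```

A check like this only decides what gets displayed; it’s still the `<location>` rule in the Web.config that actually protects the page.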

Apply principal permissions

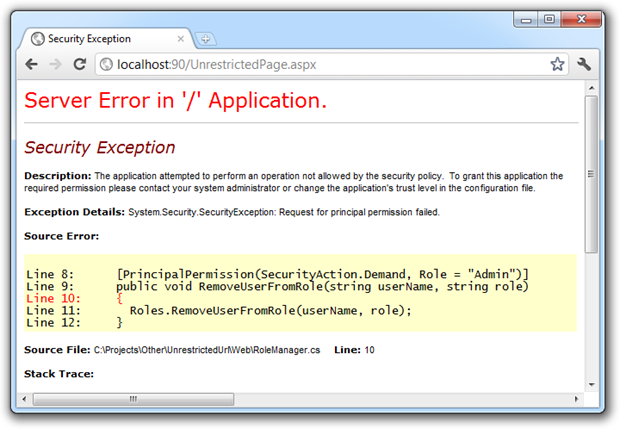

An additional sanity check that can be added is to apply principal permissions to classes and methods. Let’s take an example: because we’re conscientious developers we separate our concerns and place the method to remove a user from a role into a separate class to the UI. Let’s call that method “RemoveUserFromRole”.

Now, we’ve protected the admin directory from being accessed unless someone is authenticated and exists in the “Admin” role, but what would happen if a less-conscientious developer referenced the “RemoveUserFromRole” method from another location? They could easily call it and entirely circumvent the good work we’ve done to date simply because it’s invoked from another URL which isn’t restricted.

What we’ll do is decorate the “RemoveUserFromRole” method with a principal permission which demands the user be a member of the “Admin” role before allowing it to be invoked:

    [PrincipalPermission(SecurityAction.Demand, Role = "Admin")]
    public void RemoveUserFromRole(string userName, string role)
    {
        Roles.RemoveUserFromRole(userName, role);
    }

Now let’s create a new page in the root of the application and we’ll call it “UnrestrictedPage.aspx”. Because the page isn’t in the admin folder it won’t inherit the authorisation setting we configured earlier. Let’s now invoke the “RemoveUserFromRole” method which we’ve just protected with the principal permission and see how it goes:

Perfect, we’ve just been handed a System.Security.SecurityException which means everything stops dead in its tracks. Even though we didn’t explicitly lock down this page like we did the admin directory, it still can’t execute a fairly critical application function because we’ve locked it down at the declaration.

You can also employ this at the class level:

    [PrincipalPermission(SecurityAction.Demand, Role = "Admin")]
    public class RoleManager
    {
        public void RemoveUserFromRole(string userName, string role)
        {
            Roles.RemoveUserFromRole(userName, role);
        }

        public void AddUserToRole(string userName, string role)
        {
            Roles.AddUserToRole(userName, role);
        }
    }

Think of this as a safety net; it shouldn’t be required if individual pages (or folders) are appropriately secured but it’s a very nice backup plan!
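To see the safety net behave outside of a web page, here’s a rough console sketch. It assumes the .NET Framework, where declarative principal permissions are enforced; newer runtimes don’t enforce this attribute. The `RoleAdminSketch` and `PrincipalPermissionDemo` classes and the "someuser" account are hypothetical, and the protected method just returns a string rather than calling the real `Roles` API so that no membership database is needed:

```csharp
using System;
using System.Security;
using System.Security.Permissions;
using System.Security.Principal;
using System.Threading;

public class RoleAdminSketch
{
    // Same attribute as in the post; the body is a placeholder standing in
    // for Roles.RemoveUserFromRole so no provider needs to be configured.
    [PrincipalPermission(SecurityAction.Demand, Role = "Admin")]
    public string RemoveUserFromRole(string userName, string role)
    {
        return "removed " + userName + " from " + role;
    }
}

public static class PrincipalPermissionDemo
{
    // Runs the protected method as the given caller and reports the outcome.
    public static string TryRemove(IPrincipal caller)
    {
        IPrincipal previous = Thread.CurrentPrincipal;
        // ASP.NET sets the thread principal for each request; here we set it by hand.
        Thread.CurrentPrincipal = caller;
        try
        {
            return new RoleAdminSketch().RemoveUserFromRole("someuser", "Admin");
        }
        catch (SecurityException)
        {
            // The demand failed before the method body ran.
            return "denied";
        }
        finally
        {
            Thread.CurrentPrincipal = previous;
        }
    }

    public static void Main()
    {
        IPrincipal admin = new GenericPrincipal(
            new GenericIdentity("troyhunt"), new[] { "Admin" });
        IPrincipal other = new GenericPrincipal(
            new GenericIdentity("someoneelse"), new string[0]);

        Console.WriteLine(TryRemove(admin));
        Console.WriteLine(TryRemove(other));
    }
}
```

The point of catching the exception here is only to make the outcome visible; in the application itself you’d normally let the `SecurityException` surface so the request stops dead.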

Remember to protect web services and asynchronous calls

One thing we’re seeing a lot more of these days is lightweight HTTP endpoints used particularly in AJAX implementations and for native mobile device clients to interface to a backend server. These are great ways of communicating without the bulk of HTML and particularly the likes of JSON and REST are enabling some fantastic apps out there.

All the principles discussed above are still essential even where there’s no direct web UI. Because these services aren’t directly visible, it’s much easier for them to slip through without the same access controls placed on them. Of course these services can still perform critical data functions and need the same protection as a full user interface on a webpage. This is again where native features like the membership provider come into their own because they can play nice with WCF.

One way of really easily identifying these vulnerabilities is to use Fiddler to monitor the traffic. Pick some of the requests and try executing them again through the request builder without the authentication cookie and see if they still run. While you’re there, try manipulating the POST and GET parameters and see if you can find any insecure direct object references :)
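The same replay-without-the-cookie check can be scripted once you know which requests to test. This is a minimal sketch rather than a scanner, assuming .NET 4.5 or later for `HttpClient`; the `AnonymousProbe` class, its method names and the default URL are hypothetical, and it should only ever be pointed at an application you own:

```csharp
using System;
using System.Net;
using System.Net.Http;
using System.Threading.Tasks;

public static class AnonymousProbe
{
    // A client that sends no cookies and does not follow redirects, so a
    // bounce to the login page shows up as a 3xx rather than a 200.
    public static HttpClient CreateAnonymousClient()
    {
        var handler = new HttpClientHandler
        {
            UseCookies = false,
            AllowAutoRedirect = false
        };
        return new HttpClient(handler);
    }

    // Treats any 2xx answer to a credential-free request as worth a closer look.
    public static bool LooksUnprotected(HttpStatusCode status)
    {
        int code = (int)status;
        return code >= 200 && code < 300;
    }

    public static async Task<bool> AnswersWithoutCredentials(HttpClient client, string url)
    {
        using (HttpResponseMessage response = await client.GetAsync(url))
        {
            return LooksUnprotected(response.StatusCode);
        }
    }

    public static async Task Main(string[] args)
    {
        // Hypothetical default; pass the endpoint under test as an argument.
        string url = args.Length > 0 ? args[0] : "http://localhost/Admin/Default.aspx";
        using (HttpClient client = CreateAnonymousClient())
        {
            bool open = await AnswersWithoutCredentials(client, url);
            Console.WriteLine(url + (open
                ? " answered without credentials"
                : " did not answer without credentials"));
        }
    }
}
```

A 2xx response isn’t proof of a hole (the app might render a login form or an error page with a 200), so treat a hit as a prompt to go and look at the response in Fiddler rather than as a verdict.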

Leveraging the IIS 7 Integrated Pipeline

One really neat feature we got in IIS 7 is what’s referred to as the integrated pipeline. What this means is that all requests to the web server – not just requests for .NET assets like .aspx pages – can be routed through the same request authorisation channel.

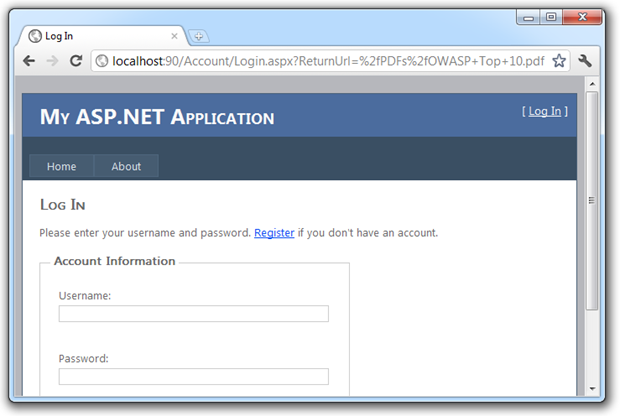

Let’s take a typical example where we want to protect a collection of PDF files so that only members of the “Admin” role can access them. All the PDFs will be placed in a “PDFs” folder and we protect them in just the same way as we did the “Admin” folder earlier on:

    <location path="PDFs">
      <system.web>
        <authorization>
          <allow roles="Admin" />
          <deny users="*" />
        </authorization>
      </system.web>
    </location>

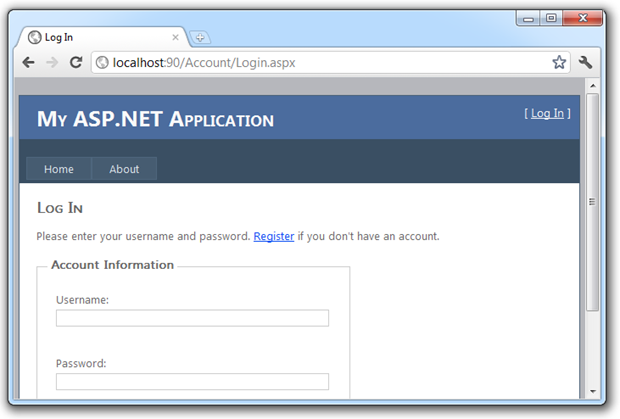

If I now try to access a document in this path without being authenticated, here’s what happens:

We can see via the “ReturnUrl” parameter in the URL bar that I’ve attempted to access a .pdf file and have instead been redirected over to the login page. This is great as it brings the same authorisation model we used to protect our web pages right into the realm of files which previously would have been processed in their own pipeline outside of any .NET-centric security model.
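For the `<location>` rule to apply to static files like these, the managed authentication and authorisation modules have to run for those requests as well. One common way of arranging that, assuming the application pool is running in integrated mode, is the setting sketched below. Some project templates already include it, so it’s worth checking your own Web.config before adding anything:

```xml
<system.webServer>
  <!-- Assumption: IIS 7 or later in integrated mode. This asks IIS to run
       managed modules (forms authentication, URL authorisation) for every
       request, including static files such as .pdf documents. -->
  <modules runAllManagedModulesForAllRequests="true" />
</system.webServer>
```

Running every module on every request has a cost, so it’s a trade-off to weigh up on busy sites rather than a setting to flick on blindly.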

Don’t roll your own security model

One of the things OWASP talks about in numerous places across the Top 10 is not “rolling your own”. Frameworks such as .NET have become very well proven, tried and tested products used by hundreds of thousands of developers over many years. Concepts like the membership provider have been proven very robust and chances are you’re not going to be able to build a better mousetrap for an individual project. The fact that it’s extensible via the provider model means that even when it doesn’t quite fit your needs, you can still jump in and override the behaviours.

I was reminded of the importance of this recently when answering some security questions on Stack Overflow. I saw quite a number of instances of people implementing their own authentication and authorisation schemes which were fundamentally flawed and had a very high probability of being breached in next to no time, whilst also being entirely redundant with the native functionality.

Let me demonstrate: here we have a question about “How can I redirect to login page when user click on back button after logout?” The context seemed a little odd so, as you’ll see from the post, I probed a little to understand why you would want to effectively disable the back button after logging out. And so it unfolded that precisely the scenario used to illustrate unrestricted URLs at the start of this post was at play. The actual functions performed by an administrator were still accessible when logged off and, because a custom authorisation scheme had been rolled, none of the quick fixes we’ve looked at in this post were available.

Beyond the risk of implementing things badly, there’s the simple fact that not using the membership provider closes the door on many of the built in methods and controls within the framework. All those methods in the “Roles” class are gone, Web.config authorisation rules go out the window and your webforms can’t take advantage of things like security trimming, login controls or password reset features.

Common URL access misconceptions

Here’s a good example of just how vulnerable this sort of practice can leave you: a popular means of locating vulnerable URLs is to search for Googledorks, which are simply URLs discoverable by well-crafted Google searches. Googledork search queries get passed around in the same way known vulnerabilities might be and often include webcam endpoint searches, directory listings or even locating passwords. If it’s publicly accessible, chances are there’s a Google search that can locate it.

And while we’re here, all this goes for websites stood up purely on an IP address too. A little while back I had someone emphatically refer to the fact that the URL in question was “safe” because Google wouldn’t index content on an IP address alone. This is clearly not the case and is simply more security through obscurity.

Other resources vulnerable to this sort of attack include files the application may depend on internally but that IIS will happily serve up if requested. For example, XML files are a popular means of lightweight data persistence. Often these can contain information which you don’t want leaked so they also need to have the appropriate access controls applied.

Summary

This is really a basic security risk which doesn’t take much to get your head around. Still, we see it in the wild frequently (check out those Googledorks), and its inclusion in the Top 10 shows that it’s both a prevalent and serious security risk.

The ability to easily protect against this with the membership and role providers coupled with the IIS 7 integrated pipeline should make this a non-event for .NET applications – we just shouldn’t see it happening. However, as the Stack Overflow discussion shows, there are still many instances of developers rolling their own authentication and authorisation schemes when they simply don’t need to.

So save yourself the headache and leverage the native functionality, override it where needed, watch your AJAX calls and it’s not a hard risk to avoid.

Resources

- How To: Use Membership in ASP.NET 2.0

- How To: Use Role Manager in ASP.NET 2.0

- ASP.NET Site-Map Security Trimming
