I've just been reading Kingpin by Kevin Poulsen, which sheds some really interesting light on criminal credit card fraud in the mid-2000s. Towards the end of the book, there's a reference to a 1997 case in which the government persuaded the sentencing judge to permanently seal the court transcripts for fear that disclosure would impact the targeted company as follows:

loss of business due to the perception of others that computer systems may be vulnerable

There were 80,000 people impacted in that incident and they were never told that their personal information had been obtained by criminals, all for fear that the very organisation that lost the data in the first place would be adversely impacted. Fortunately, cover-ups like this can no longer happen in many parts of the world. Most US states have had mandatory data breach disclosure laws since 2002, Australia has a draft bill that will go to parliament this year and the EU's General Data Protection Regulation is making it mandatory across the board there too. It's always been ethically dubious not to disclose a data breach to those who have been impacted by it, but it's also illegal in many places if not now, then very soon.

Yet somehow, well over a decade after we started seeing mandatory disclosure laws come into effect, some organisations not only ignore the push for public transparency, but even justify non-disclosure by saying it's in the victims' best interests to keep it quiet. I saw a security "strategy" this week in the wake of a major data breach which was alarming, to say the least. I want to capture the details of it here and frankly, tear it to shreds because we should never see an organisation playing fast and loose with people's data in this way. Hopefully if this strategy is ever considered by others in future they'll stumble across this post and think better of it.

This relates to the Lifeboat data breach from earlier this week. Well actually, the breach itself was many months ago but the disclosure was only this week and therein lies the problem.

When approached by the reporter in the above story about this incident, Lifeboat stated:

When this happened [in] early January we figured the best thing for our players was to quietly force a password reset without letting the hackers know they had limited time to act

I was stunned when I read this - you mean they knew about the incident and decided to cover it up?! I'm used to seeing organisations genuinely have no idea they've been hacked but to see one that actually knew about it - a 7 million record breach at that - and then consciously silence the incident without telling anyone left me speechless. This is 7 million records which contain passwords stored as MD5 hashes too which means that you can take the hash then simply Google it and like magic, here's the plain text value. Or you do it en masse using hashcat as I recently showed for salted MD5 hashes (hashes with no salt such as Lifeboat's are significantly easier to crack). A large portion of those passwords would be reverted to plain text in a very short time.
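To make that weakness concrete, here's a minimal sketch of a dictionary attack against unsalted MD5 hashes. The word list and the "leaked" hashes are made up purely for illustration (they're not from the Lifeboat data), and real tooling like hashcat is vastly faster than a few lines of Python:

```python
import hashlib


def md5_hex(password: str) -> str:
    """Return the unsalted MD5 hash of a password as a hex string."""
    return hashlib.md5(password.encode("utf-8")).hexdigest()


def crack_unsalted(leaked_hashes, wordlist):
    """Map each leaked hash to its plain text if it appears in the word list.

    With no salt, each candidate password is hashed exactly once and that
    single hash can be checked against every account in the dump.
    """
    lookup = {md5_hex(word): word for word in wordlist}
    return {h: lookup[h] for h in leaked_hashes if h in lookup}


# Hypothetical data for illustration only
wordlist = ["123456", "password", "minecraft", "letmein"]
leaked = [md5_hex("password"), md5_hex("minecraft"), md5_hex("Tr0ub4dor&3")]

cracked = crack_unsalted(leaked, wordlist)
print(f"Recovered {len(cracked)} of {len(leaked)} passwords")
```

The point is the lookup table: one pass over a word list covers every user in the breach at once, which is why common passwords fall almost immediately when there's no salt.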

As much as that comment shocked me, the discussion I then saw on Twitter from someone who works for Lifeboat made it even worse. I'm not going to link directly to the thread in order to save the individual embarrassment because in all likelihood they'll later realise the serious implications of what they've said. I'm sure we've all evolved our thinking over time and would be embarrassed to look back on some of the views we held once upon a time and I suspect that will be the case for this bloke as well. But with that said, let's get to the meat of the issue.

It started out with a discussion on Twitter which used the same justification for concealing the breach:

If they alerted people about passwords being reset they would've basically been telling the hackers to hurry up and ALL data would've been stolen.

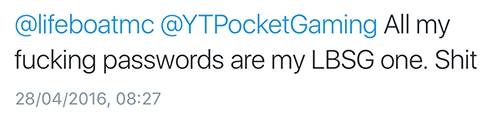

So let's start there. Presumably, this statement and the earlier one about not letting the attackers know they have limited time relate to the window of opportunity in which an account can be exploited. As an attacker, you have someone's email address and their password and you want to use that to compromise other accounts because password reuse remains the norm rather than the exception. For example, just read through some of the responses to this tweet:

⚠ If you used your Lifeboat account password for any other services, please change them now ⚠ pic.twitter.com/H4302cOWwl

— Lifeboat (@lifeboatmc) April 27, 2016

Responses like this one:

One thing's for sure: if hackers are looking to exploit people then yes, they'd need to hurry up as that window of opportunity is now way smaller since the incident went public. Once people know their data has been leaked then there's a much higher likelihood that they'll change that password not just on the service that lost it, but in other locations too.

The Twitter discussion continued:

Lifeboat took an approach that protected some users whilst not alerting the hackers, pretty smart imo.

This is completely nonsensical. In no way whatsoever did covering up the incident protect anything other than their own reputation. Consider the tweet above - "all my fucking passwords are my Lifeboat one" - how on earth is it protecting the guy if they know malicious actors have his credentials and they don't tell him?! Notifying customers would have significantly reduced the window of risk; instead of many months' worth of opportunity to access victims' other accounts, attackers would have had a much shorter period.

The database was compromised but hasn't been made publicly available (to our knowledge)

I'm certain this statement is correct, but it also proves the old adage of "absence of evidence is not evidence of absence". Perhaps it's simply an issue of how they interpret the term "publicly available" so let me clarify: I was actually approached by two separate individuals who offered the Lifeboat data. I have no idea whether they travel in the same circles or not, but one of them also had this to say:

also WAS being sold on RealDeal/Hell

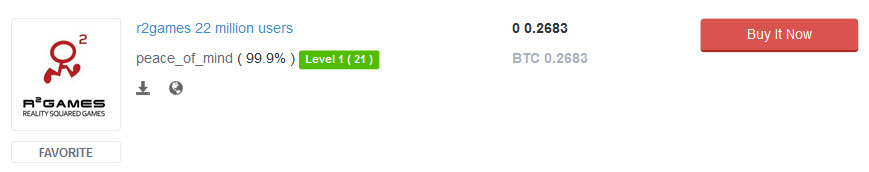

These sites are both accessible via Tor and they're notorious for trading in data breaches. For example, here's R2 games being sold right now:

R2 was actually a very similar deal to Lifeboat; someone approached me with the data some time ago after trading it within their community and I loaded it into Have I been pwned. Also like Lifeboat, R2 remains in denial about the spread of the data. In fact, someone forwarded me this message after contacting R2 about the breach:

We have also received numerous reports about this which is very alarming, however you must not worry because this news spreading was not true, R2Games is safe and secured and far from being hacked

To make absolutely certain there was no ambiguity about the incident, I verified a number of the individual's data attributes from the breach and he confirmed that it "is very legit". Another person had a similar discussion with R2 and they told him this:

We have asked our operations department regarding this issue of breach and they deny all the allegations this website is saying

I don't want to go too far off on an R2 tangent here; the point I want to make is that the realm of data breaches is often foreign to organisations that don't travel in those circles. It's an unfamiliar environment to them and I'm not at all surprised that Lifeboat was unable to locate their data. Sites like Real Deal and Hell tend to be transient both in terms of their existence (Hell has disappeared and reappeared numerous times) and in terms of the individual data sets they're trading. Many other people have the Lifeboat data and frankly, none of us know exactly what they've done with it.

The Twitter discussion went on:

Just because it's been sold or traded doesn't mean those people are smart enough or evil enough to use the emails and passwords for bad.

Precisely why do they think people actually pay for this data?! Whilst I'm sure there are some individuals that simply like to hoard it, there are many others that purchase it because there's an ROI. They spend money on the data because they can get a return on exploiting it, particularly when you have a dump as large as 7 million records and passwords stored in an easily reversible fashion. We know this very well and we've seen it happen time and time again, even all the way back to 2010 when Gawker was hacked (Del Harvey is the Head of Twitter Trust & Safety):

Got a Gawker acct that shares a PW w/your Twitter acct? Change your Twitter PW. A current attack appears to be due to the Gawker compromise.

— Del Harvey (@delbius) December 13, 2010

By barely protecting credentials in storage and then letting them float around the web for months without telling anyone, they put people at huge risk. Lifeboat eventually issued an update once the incident got press (which includes the requisite defence of we-now-take-security-seriously-even-though-we-didn't-before). On the one hand it's heartening to see them apologise, but on the other hand they then downplay the significance of the incident:

We do not know of anyone who has actually had their email or other service hacked

Let's just consider this for a moment; they're saying that they don't know of anyone who had their email compromised as a result of their credentials being taken from Lifeboat months ago. Now what does it look like when this happens, I mean when someone logs into your email account using stolen credentials? Well for one thing nobody would have turned around and blamed Lifeboat because they didn't know Lifeboat lost their credentials! Lifeboat is 100% correct in saying they don't know if anyone had other things hacked - how would they?! It's a Chewbacca defence that has nothing to do with whether other accounts were actually compromised or not.

There's also a school of thought that says "hey, this is just a game, why does security even matter?" In fact, Lifeboat specifically downplays the value of its members' credentials in its getting started guide:

This is not online banking

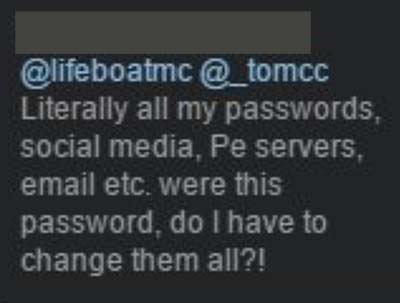

No, Lifeboat isn't online banking but you know what many of those passwords will get you access to? Online banking. And email. And ecommerce accounts and all the other places that people reused their credentials. We all know damn well that password reuse is the norm, not the exception and when you hold someone's password - and it very frequently is "their password" that they use everywhere - you have a responsibility that goes well beyond your own system. For example:

The person who originally posted this subsequently deleted it so I've obfuscated her identity here, but she's merely repeated what is true for a huge proportion of the Lifeboat users. It's a statistical certainty that there's a seven-figure number of people in the Lifeboat data breach who reused their passwords.

By attempting to suppress the incident, Lifeboat left millions of people completely exposed. This was unequivocally the wrong thing to do which is why even more so than the breach itself, their decision to cover it up is what's making headlines. It's simply indefensible.

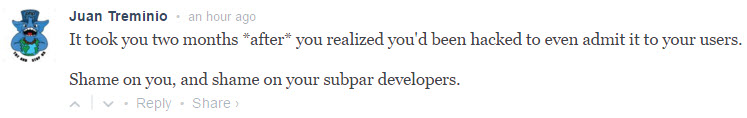

People are not happy, as is evidenced in the comments on their announcement that eventually came after the public exposure:

The Twitters are likewise angry:

@lifeboatmc @SurvivalHiveDE No, that wasn't the real problem. The real problem is you guys not caring enough to tell your users about it.

— hcherndon @ Hypixel (@hcherndon) April 27, 2016

@DavidJBrockway @DALTONTASTIC @TheLoneFireWolf @lifeboatmc Agreed. They should have let people know about this when it actually happened.

— HotshotHD = Kareem (@Hotshot_9930) April 27, 2016

@lifeboatmc You stayed quiet to cover your own asses & most of us didn't find out until yesterday. That is 3 months unaccounted for.

— Dalton Edwards (@DALTONTASTIC) April 27, 2016

@Cajun_MCPE @ZahneDoesTweets @shanjilahmed @Nykyrian_Q192 @lifeboatmc The fact that they didnt tell us when they found out makes me angry.

— Cajun (@Cajun_MCPE) April 27, 2016

Fortunately, in their announcement they do say that since the hack "The password that you chose is encrypted using much stronger algorithms" which is good news... assuming they mean "hashed" and not "encrypted", that is.
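For anyone unclear on the distinction, password storage calls for a salted, deliberately slow, one-way hash rather than reversible encryption. Here's a minimal sketch using PBKDF2 from Python's standard library. This isn't a claim about what Lifeboat actually moved to; the function names and the iteration count are assumptions chosen purely for illustration:

```python
import hashlib
import hmac
import os
from typing import Tuple

# Assumed work factor for illustration; tune it for your own hardware
ITERATIONS = 200_000


def hash_password(password: str) -> Tuple[bytes, bytes]:
    """Return (salt, derived_key) using PBKDF2-HMAC-SHA256 and a random per-user salt."""
    salt = os.urandom(16)
    key = hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, ITERATIONS)
    return salt, key


def verify_password(password: str, salt: bytes, expected_key: bytes) -> bool:
    """Re-derive the key from the candidate password and compare in constant time."""
    key = hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, ITERATIONS)
    return hmac.compare_digest(key, expected_key)


# Two users picking the same password end up with different stored values
salt_a, key_a = hash_password("minecraft")
salt_b, key_b = hash_password("minecraft")

print(verify_password("minecraft", salt_a, key_a))
print(key_a != key_b)
```

There's no decrypt step anywhere in that code, which is the whole point: the only way back to the password is to guess it, and the per-user salt plus the iteration count make each guess expensive and useless against any other account.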

Breaches happen; it's an inevitability of modern-day online life. How the organisation deals with it is the key. Compare Lifeboat's approach to that of TruckersMP which I wrote about only 5 days ago. They "owned" the incident; they got on top of it early, admitted their shortcomings and were transparent in the way they dealt with it. The first thing that should be on any company's mind after an incident like this is "how do we minimise the damage to our users?"

I honestly don't know if the Lifeboat folks made this decision genuinely thinking it would help their users rather than jeopardise them or if they were just trying to cover their own arses. I genuinely hope it was the former and that with the benefit of hindsight they'd approach things differently next time. At the very least, I hope that any organisation considering a similar strategy observes the fallout from this one and thinks better of it in the future.