Some days the news is dominated by a single security story, and not just in the tech news either; today the consumer news is all about Apple’s message to their customers. I’ve been getting a heap of media requests and seeing some really interesting things said about the story, so let me distil all the noise into the genuinely interesting things that are worth knowing. There are way more angles to this than initially meet the eye, and it’s a truly significant point in time for online privacy.

The background story

In December last year, 14 people were killed and many more injured in a mass shooting in San Bernardino, California. The FBI have said that the couple responsible had been radicalised by Islamic extremists, and President Obama consequently described the shooting as an act of terrorism. Both shooters were subsequently killed in a firefight with police.

The relevance to Apple is that in the aftermath of the event, the FBI recovered one of the shooters’ iPhones but, due to the effectiveness of Apple’s encryption, were unable to gain access to the information stored on it. Since iOS 8, Apple has stated that it "would be impossible" for them to decrypt a device since they don’t hold the encryption keys, nor do they have any backdoors that would provide them with access. Consequently, the contents of the San Bernardino shooter’s iPhone have not been recoverable by the FBI.
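To illustrate why not holding the keys matters, here's a minimal sketch under a simplified model: the key protecting the data is derived from both the user's passcode and a secret unique to the device, so neither one alone is enough. The name `DEVICE_UID_KEY` and the use of PBKDF2 are hypothetical stand-ins chosen for illustration; Apple's actual design performs this entanglement in hardware and isn't reproduced here.

```python
import hashlib
import os

# Hypothetical stand-in for a secret fused into the phone's hardware at manufacture.
# In this model it never leaves the device, and the vendor keeps no copy.
DEVICE_UID_KEY = os.urandom(32)

def derive_data_key(passcode: str, device_key: bytes, iterations: int = 100_000) -> bytes:
    """Entangle the passcode with the device secret (PBKDF2 is a stand-in, not Apple's scheme)."""
    return hashlib.pbkdf2_hmac("sha256", passcode.encode("utf-8"), device_key, iterations)

key_on_device = derive_data_key("1234", DEVICE_UID_KEY)

# Same passcode, but a different device secret: the result is an unrelated key,
# so knowing the passcode alone (or copying the encrypted data elsewhere) isn't enough.
key_elsewhere = derive_data_key("1234", os.urandom(32))
print(key_on_device == key_elsewhere)  # False
```

In this model, passcode guesses have to be run on the device itself, because the device secret never leaves it.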

The catalyst for Apple’s customer letter

This week, a judge in California ordered Apple to assist the FBI in accessing the contents of the San Bernardino shooter’s iPhone. Whilst it hasn’t been said exactly what information the FBI is seeking from the device, you could reasonably assume they’ll be particularly interested in communication the perpetrators had with other parties, especially given that “radicalised” implies they were coerced by others into holding extremist beliefs.

The privacy ramifications aside, there’s no doubting that there is information on the device that’s extremely valuable for legitimate law enforcement purposes. We want the FBI to track down not just anyone involved in this particular case, but also anyone responsible for influencing others to carry out similar attacks. The point of contention now is whether the court’s demand is in the public’s best interest or not.

Privacy as a feature

One of the really interesting things we’ve seen as a result of the Ed Snowden leaks in 2013 is a visible shift in the positioning of privacy as a feature. To be fair, we were organically moving towards more encryption more of the time regardless of the NSA revelations, but inevitably that accelerated the shift and highlighted the need for both data encryption during transport over the web and at rest on devices.

It’s been interesting to watch Apple in particular highlight this. Take, for example, their position on end-to-end encryption of iMessage and FaceTime, which they call out in their iOS 9 feature list:

Conversations over iMessage and FaceTime are encrypted, including predictive text — so no one but you and the person you’re talking to can see or read what’s being said.

What’s intriguing about this approach is that when it works well, encryption is transparent. This is not the kind of feature you’d see showcased at an Apple product launch the way Live Photos or even Touch ID are. Consumers don’t “see” the encryption of iMessage, nor do they see that without unlocking the device, the data can’t even be read whilst at rest. In fact, you could even argue that so far this court order has been very good publicity for Apple; you can just picture Tim Cook saying “Your privacy is so important that we’re not even going to back down in the face of a legal demand from the US government”.

Apple has been quite smart in the way they’ve gone about their encryption insofar as they’ve effectively tied their own hands. By not holding the keys, they simply can’t bow to court orders to hand over data, which is what makes the present situation so unusual. In this case, the court has had to ask for something quite different to the data itself…

What’s actually being requested and how would Apple do it?

There’s a bit of playing with words going on here and each side is clearly going to pick the ones that best serve their purposes. In Tim’s customer letter, he says the following:

The FBI may use different words to describe this tool, but make no mistake: Building a version of iOS that bypasses security in this way would undeniably create a backdoor.

To which the White House says this:

BREAKING: White House says Department of Justice is not asking Apple to create a new backdoor, asking for access to one device.

— Reuters Top News (@Reuters) February 17, 2016

But it also raises the question: what exactly are the mechanics of gaining access to the data on a phone that’s meant to be encrypted? Here’s the relevant part of the court order, specifically the assistance expected from Apple:

Apple's reasonable technical assistance may include, but is not limited to: providing the FBI with a signed iPhone Software file, recovery bundle, or other Software Image File ("SIF") that can be loaded onto the SUBJECT DEVICE. The SIF will load and run from Random Access Memory and will not modify the iOS on the actual phone, the user data partition or system partition on the device's flash memory. The SIF will be coded by Apple with a unique identifier of the phone so that the SIF would only load and execute on the SUBJECT DEVICE. The SIF will be loaded via Device Firmware Upgrade ("DFU") mode, recovery mode, or other applicable mode available to the FBI. Once active on the SUBJECT DEVICE, the SIF will accomplish the three functions specified in paragraph 2. The SIF will be loaded on the SUBJECT DEVICE at either a government facility, or alternatively, at an Apple facility; if the latter, Apple shall provide the government with remote access to the SUBJECT DEVICE through a computer allowing the government to conduct passcode recovery analysis.

Assuming there genuinely aren’t any backdoors on the device at present, the approach seems to be to modify iOS (Apple would need to digitally sign the software, because the phone will only load software signed by Apple, which is why the feds can’t do it themselves) so that it explicitly does have a weakness built into it, namely the ability to keep retrying passcodes or, in other words, to brute force the locked device.
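To put the brute-force point in perspective, here's a back-of-the-envelope sketch of how long it takes to try every numeric passcode once retry limits are out of the way. It assumes each guess costs about 80 milliseconds of deliberately slow on-device key derivation, a figure Apple has cited in its iOS security documentation; treat it as illustrative rather than a measured number for this particular phone.

```python
# Assumption: ~80 ms per guess, the on-device key derivation cost cited by Apple.
SECONDS_PER_GUESS = 0.08

def worst_case_seconds(digits: int, seconds_per_guess: float = SECONDS_PER_GUESS) -> float:
    """Time to try every numeric passcode of the given length, with no retry delays or auto-erase."""
    keyspace = 10 ** digits  # e.g. 10,000 combinations for a 4-digit PIN
    return keyspace * seconds_per_guess

for digits in (4, 6):
    seconds = worst_case_seconds(digits)
    print(f"{digits}-digit passcode: {seconds / 60:.0f} minutes ({seconds / 3600:.1f} hours)")
```

Under that assumption a four-digit passcode falls in about 13 minutes and a six-digit one in under a day, which is why the ability to keep retrying is the crux of the request.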

This weakness would rightly be classified as a backdoor, even if only a backdoor on a single phone, given the request limits it to the “subject device”. But this is really the heart of the issue: Apple’s argument is that once this intentionally vulnerable version of the operating system exists, it will be requested again. The genie will be out of the bottle and this will almost certainly be the first of many requests.
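The court order's idea of limiting the software to one phone can be sketched like this: the signed image is tied to a device identifier, and the phone refuses to run it if that identifier doesn't match its own. This is a minimal sketch under simplifying assumptions. The key, the IDs and the function names are hypothetical, and HMAC stands in for the public-key signatures real firmware signing uses (a real phone holds only a public key, so it can verify a signature but never create one).

```python
import hashlib
import hmac

# Hypothetical stand-in for the vendor's signing key. Real firmware signing uses
# public-key signatures; HMAC is used here only to keep the sketch stdlib-only.
SIGNING_KEY = b"hypothetical-vendor-signing-key"

def sign_image(image: bytes, device_id: str) -> bytes:
    """Bind the software image to one device by signing the ID and image together."""
    # A NUL separator keeps the ID and image boundaries unambiguous.
    return hmac.new(SIGNING_KEY, device_id.encode() + b"\x00" + image, hashlib.sha256).digest()

def device_will_load(image: bytes, signature: bytes, my_device_id: str) -> bool:
    """The phone checks the signature against its own ID; any mismatch means it won't run."""
    expected = hmac.new(SIGNING_KEY, my_device_id.encode() + b"\x00" + image, hashlib.sha256).digest()
    return hmac.compare_digest(expected, signature)

image = b"hypothetical SIF contents"
sig = sign_image(image, "SUBJECT-DEVICE-ID")
print(device_will_load(image, sig, "SUBJECT-DEVICE-ID"))  # True
print(device_will_load(image, sig, "SOME-OTHER-PHONE"))   # False
```

In this model the restriction to a single phone comes entirely from the signing step: producing the same image for a different device ID is just a matter of signing it again, which only the key holder can do.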

Is the iPhone really impenetrable by the FBI alone?

That’s what this case implies – that they can’t get into the device without Apple’s help – and of course it suits Apple just fine for that to be the status quo because, per the previous point, privacy is a very important marketing feature for them. There’s no doubt that US intelligence services are looking for weaknesses in the devices and naturally other nation states are doing just the same. In security terms, we tend to speak about “degrees of difficulty” when it comes to compromising a system rather than an absolute state of secure versus insecure. When it comes to iOS, it’s highly likely that the bar has been set very high, even for an adversary as well-equipped as the US government. The recent million-dollar bounty Zerodium put on a remotely exploitable iOS 9 jailbreak goes some way towards demonstrating just how difficult it is to compromise the latest iOS version. (Note that the bounty was paid, but a remote jailbreak is a far cry from decrypting a locked device.)

One theory is that even if the device is accessible to the FBI without Apple’s help, they’d prefer to see this case play out in court:

if the gov hacks the iPhone themselves, they don't get the legal precedent they are so desperate to establish in this case.

— Christopher Soghoian (@csoghoian) February 17, 2016

It’s an interesting point Chris makes: if the FBI really did want to establish precedent more than they actually wanted to access the data, this would be the case to do it with. San Bernardino is the embodiment of what the government has tried so hard to convince the US population they need to be fearful of – terrorist acts on home soil. This case would garner more sympathy from the general populace than a one-off shooting or even a child abuse case because it’s precisely the thing that most people are so fearful of.

I suspect the most likely scenario is that, firstly, Apple have genuinely not built any intentional backdoors into the device. Doing so and then being discovered (and there are certainly many precedents of researchers identifying backdoors in other products) would be absolutely catastrophic for the brand when they’re pushing privacy so hard. Secondly, there are certainly various exploits available, both developed by nation states and via the likes of Zerodium, but it’s entirely possible that none of them include the ability to decrypt a locked iOS 9 device. All speculation aside though, we just don’t know for sure, but to Chris’ point, the outcome would be a very valuable precedent for either party to set.

$200 billion in the bank goes a long way

Apple is presently the most valuable company in the world at $544 billion. They also have a cash stockpile of more than $200 billion, so it would be fair to say they’re well-equipped for legal challenges. Consider also how high the stakes are; I talked earlier about privacy as a feature and how much of their reputation they’re now staking on being a privacy-focused company. If they were forced to build the backdoor Tim talks about in his letter, there’d be enormous damage done to their brand, perhaps irreparable damage, and for Apple in particular, image is everything.

This is a case that Apple is both well-equipped to fight and needs to fight. Not only that, but they’ve got a lot of public support from informed people who grasp the ramifications of what the court is asking them to do. They’ll throw serious resources at this and, at the very least, it will likely be a protracted exercise regardless of the outcome. That in itself is significant because, assuming the DoJ wants access to the device in order to establish connections and communications with other parties, time is important. How much value does the data hold if legal proceedings play out for years? What’s the half-life of this class of data? And even if it’s short-lived, how likely is the government to back off given the value of setting a precedent, as I mentioned earlier?

Everyone wants to backdoor everyone

Think back to 2013 – early 2013 – and the years leading up to it. Think specifically about Huawei, the Chinese manufacturer that builds a heap of networking products sold around the world. Well, they were sold around the world until the US government banned them from selling to certain government departments over concerns about state-sponsored Chinese backdoors. This prompted Huawei to completely withdraw from the US market due to “geopolitical reasons”.

That was in April, just one month before Ed Snowden headed off to Hong Kong to release the troves of data he exfiltrated from the NSA, including information on their “Tailored Access Operations” program.

Among the leaked material were photos of “supply chain interdiction” or, in other words, intercepting routers en route to customers so that backdoors could be installed. Cisco were none too happy about this and swore black and blue it was news to them, but it also sheds some light on why the US would be so opposed to Huawei doing business locally; they want easier access to the supply chain so that they can compromise the devices themselves.

It goes both ways too: only a couple of years ago China was doing the same thing with Windows 8, and going back even further, there were bans on Windows 2000 and Intel products for the same reason. The point is that the politics of the home state can have a huge impact on global organisations attempting to do business on the world stage, because we’ve consistently seen someone backdooring someone else. The bans on the products mentioned here were due to suspicions that other nation states were using them for espionage purposes (although I suspect that behind closed doors these suspicions were proven on multiple occasions). Where does it leave the likes of Apple if they’re forced to bow to a highly publicised court order to backdoor their devices? Let’s look at what this means for the industry in the US.

The impact on US tech companies

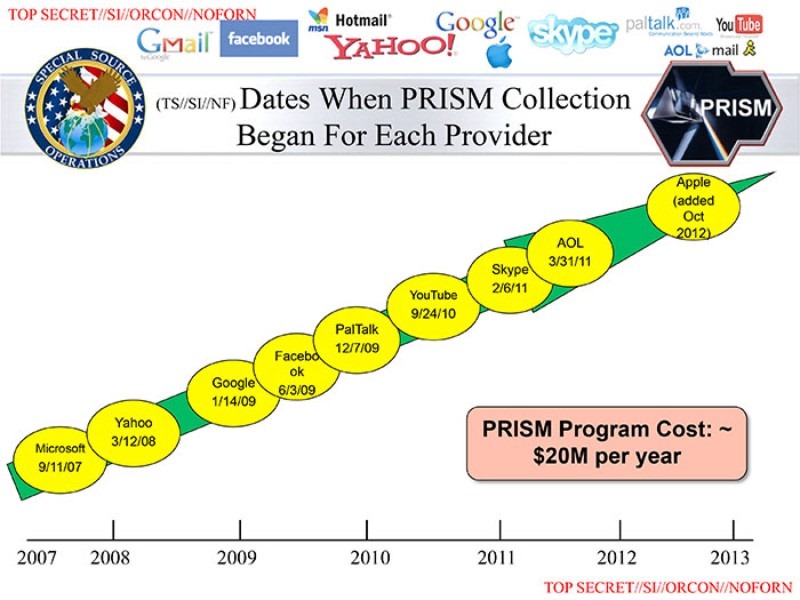

There’s no escaping the impact that programs like the NSA’s PRISM have had on US tech companies:

The debate about how complicit these organisations were in PRISM aside, the very fact that data from US companies was being retrieved directly by US intelligence services has had a major impact on the US tech industry. Indeed, this is undoubtedly one of the things that drove Apple to implement such strong encryption in the first place, but the impact is far broader than just Apple.

For example, in increasingly privacy-conscious Europe, we’re now seeing Microsoft build out the Azure cloud platform in Germany with the local telco Deutsche Telekom acting as the “data trustee”. In other words, all access to data in that location is now controlled by German law and, as one headline proclaimed, the US government “can’t touch it”. It’s precisely because of programs like PRISM that a service of this nature is even in demand: quite simply, companies outside the US have lost trust in those within the US because of US government intervention.

The issue of data sovereignty is becoming particularly important, as the example above highlights. Equally though, US companies are now going to great lengths to demonstrate that customers in other countries have a right to privacy and protection from US government overreach. The Microsoft case in Ireland is a perfect example: a warrant from a US court is seeking data stored in Microsoft’s Irish data centre. Microsoft is making a point of saying that “a U.S. judge has no authority to issue a warrant for information stored abroad” and that’s an enormously important position for them to take. Imagine the damage the US government could do to Microsoft if it transpires that they’re required to hand over that data; the loss of trust among international customers would have far-reaching consequences.

The point as it relates to Apple and the FBI is that this case has the potential to do enormous damage not just to Apple’s international sales, but to other US-based tech companies too. Think about the impact in markets like China and India: here are two countries with more internet-connected citizens than the next twelve countries put together, yet well under half their populations are even online. China in particular is the short- to medium-term growth market for Apple; given the friction already mentioned between the two nations when it comes to trusting each other’s tech, what would be the impact of Apple granting the FBI access to the data?

Which nation states can access the backdoor

One of the issues we always come up against when talking about backdoors is who gets access to them. Obviously the FBI wants to be able to force Apple into providing access to the data, but what if it’s the Australian Federal Police? OK, we’re in bed together anyway courtesy of the Five Eyes arrangement, but what about the Russian FSB? Should they be able to access this hypothetical backdoor if Apple builds it? What about China’s MSS?

If the US government dictating iPhone encryption design sounds ok to you, ask yourself how you'll feel when China demands the same.

— Matthew Green (@matthew_d_green) February 17, 2016

The problem is that you’re talking about a multinational organisation attempting to run a global business that then has to get picky about who can exploit weaknesses deliberately designed into its product. It’s a very slippery slope once there is a backdoor. Without one, life is much simpler – nobody gets in – and clearly this is where Apple wants to be.

I’ll leave you with this tweet from Ed Snowden which is important in the context of the geopolitical ramifications of backdoors:

The New York Times: @FBI's war on #Apple will aid China. https://t.co/URWamc702q pic.twitter.com/KnHDsWIENY

— Edward Snowden (@Snowden) February 17, 2016

Remember, it was only a year ago that Obama was very vocal about China’s desire to have backdoors in products yet here we are, almost bang on 12 months later and we’re seeing the very same demand that so worried him about China issued directly from the US government.

Where’s Google in all this?

One of the most insightful things I’ve read on the issue was this tweet by my friend Jeremiah Grossman:

Today would be the perfect day for Sundar Pichai (Google, CEO) to back up Tim Cook (Apple, CEO).

— Jeremiah Grossman (@jeremiahg) February 17, 2016

This is enormously pertinent and it points to the need for a unified stance by the tech industry. When it’s Apple alone taking this stance (and obviously they’re the subject of the court order so you’d expect them to be the most vocal), it becomes “The FBI v. Apple” when what we’d really like to see is Apple, Google, Microsoft and any other big players standing up and making this a tech industry issue, not an Apple issue. As I said earlier, the entire US tech industry has already been tarnished by the government; surely now is the time for a unified industry-wide stance?

As it turned out, shortly after Jeremiah’s tweet, Sundar Pichai did indeed speak up:

1/5 Important post by @tim_cook. Forcing companies to enable hacking could compromise users’ privacy

— sundarpichai (@sundarpichai) February 17, 2016

2/5 We know that law enforcement and intelligence agencies face significant challenges in protecting the public against crime and terrorism

— sundarpichai (@sundarpichai) February 17, 2016

3/5 We build secure products to keep your information safe and we give law enforcement access to data based on valid legal orders

— sundarpichai (@sundarpichai) February 17, 2016

4/5 But that’s wholly different than requiring companies to enable hacking of customer devices & data. Could be a troubling precedent

— sundarpichai (@sundarpichai) February 17, 2016

5/5 Looking forward to a thoughtful and open discussion on this important issue

— sundarpichai (@sundarpichai) February 17, 2016

The first tweet is refreshing; seeing the CEO of one of the world’s largest companies refer favourably to the position of one of their fiercest competitors is precisely what we want to see on such an important topic. (Keep in mind that Tim Cook has been critical of Google’s approach to privacy in the past too.) The subsequent tweets water it down a bit though, and I get the sense that Sundar’s position is far more fluid than Tim’s, which, as I mentioned earlier, has been staunchly in favour of strong encryption for some time now. Keep in mind also that due to the very long tail of old Android devices and low-spec hardware, most Android devices still don’t use full disk encryption, so the court order is a less pressing issue for Google than it is for Apple. (Incidentally, I looked for stats on Android disk encryption rates but couldn’t find anything authoritative; leave a comment with a link if you can point me at a resource.)

This is all possible due to an old iPhone

There’s a really good post over on the Trail of Bits blog which talks about the mechanics of how Apple can actually remove the brute force protection on the device and ultimately help the authorities gain access to the data. They make a very important observation that’s not often been represented elsewhere in the press and it’s this:

At this point it is very important to mention that the recovered iPhone is a 5C. The 5C model iPhone lacks TouchID and, therefore, lacks the single most important security feature produced by Apple: the Secure Enclave.

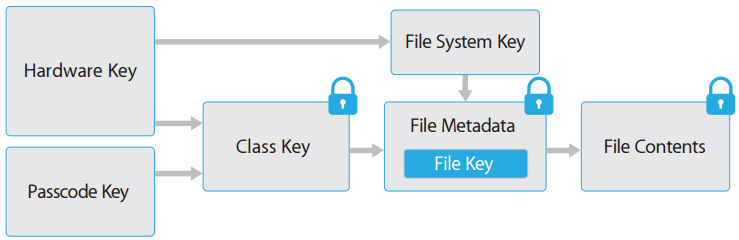

They explain how the Secure Enclave is a separate coprocessor inside the iPhone that brokers access to the encryption keys. It leverages an additional embedded key, so both this key and the passcode entered by the user are required:

It keeps track of failed login attempts and independently degrades the retry rate after multiple failed passcodes are entered.

The significance, in terms of Apple being able to weaken the security, is how much harder that would be on a device with a Secure Enclave:

If the San Bernardino gunmen had used an iPhone with the Secure Enclave, then there is little to nothing that Apple or the FBI could have done to guess the passcode
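The idea of degrading the retry rate after failed passcodes can be sketched as a simple schedule. The delays below roughly follow the schedule Apple has published for iOS (nothing for the first four failures, then 1, 5, 15, 15 and 60 minutes); the one-hour cap from the ninth failure onwards is an assumption, and `erase_after=10` models the optional wipe after ten failures. The function names are hypothetical; this illustrates the idea and is not Apple's code.

```python
from typing import Optional

def delay_after_failure(n: int) -> int:
    """Enforced wait in seconds after the nth consecutive wrong passcode (illustrative schedule)."""
    if n <= 4:
        return 0
    if n == 5:
        return 60
    if n == 6:
        return 5 * 60
    if n <= 8:
        return 15 * 60
    return 60 * 60  # assumption: one hour for every failure from the 9th onwards

def enforced_wait_seconds(failures: int, erase_after: Optional[int] = 10) -> Optional[int]:
    """Total enforced delay across `failures` wrong guesses, or None if the device wipes first."""
    total = 0
    for n in range(1, failures + 1):
        if erase_after is not None and n >= erase_after:
            return None  # keys discarded: no further guessing can recover the data
        total += delay_after_failure(n)
    return total

# Worst case for a 4-digit PIN is 9,999 wrong guesses before the right one.
print(enforced_wait_seconds(9999))  # None: wiped on the 10th failure
print(f"{enforced_wait_seconds(9999, erase_after=None) / 86400:.1f} days")  # 416.3 days
```

In this model the delays alone stretch an exhaustive four-digit search from minutes to over a year, and the wipe ends it after ten failures, which is why removing these limits is so central to the request.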

All iPhones with Touch ID or an A7 processor or newer (in other words, everything since the iPhone 5S) are equipped with a Secure Enclave. While the article doesn’t quite go as far as claiming it’s impossible to breach (and to my earlier point, that’s just not a statement we like to make in security circles), it clearly raises the bar significantly in terms of what’s required to circumvent the security controls. In other words, if you can afford a newish, relatively high-end phone, you’re much better protected. This raises another issue altogether:

it's great that the phone used by the rich protects them from the FBI. What about the phones used by everyone else? https://t.co/fFxFBNnA1T

— Christopher Soghoian (@csoghoian) February 17, 2016

The “digital security divide” is an issue for another story, but it’s a pertinent observation when we’re talking about who’s at risk of surveillance. Equally pertinent: if you were hell-bent on wreaking havoc, ready to go out in a hail of gunfire and didn’t want your device accessed by the feds, you’d simply go out and buy a current-generation iPhone.

Edit, 19 Feb 2016: A day later, Trail of Bits modified some of their guidance, most notably by adding an update to say: "Reframed “The Devil is in the Details” section and noted that Apple can equally subvert the security measures of the iPhone 5C and later devices that include the Secure Enclave via software updates". The commentary in the day since this post went up does indeed indicate that newer devices are still at risk, which also helps explain Apple's emphatic stand against the court order.

Let us not misconstrue the story

We know that the media has a way of putting a spin on things that frequently misrepresents the underlying issue and it’s no surprise that we’re seeing a lot of this at present. Bruce Schneier summarised the problem quite succinctly:

Today I walked by a television showing CNN. The sound was off, but I saw an aerial scene which I presume was from San Bernardino, and the words "Apple privacy vs. national security." If that's the framing, we lose. I would have preferred to see "National security vs. FBI access."

This is enormously important because if the hearts and minds of people get misdirected into believing that Apple’s approach is at odds with the best interests of the nation, there won’t be the groundswell of support that’s required to keep irrational court orders like this at bay.

Summary

This issue is much bigger than just Apple providing access to a single device; it’s much bigger than the encryption debate and it’s much bigger than just the US. There are angles to this we haven’t thought about yet, and it’ll continue to be sensationalised by the press, misrepresented by the government and rebutted by Apple.

The ramifications of Apple actually complying with this court order would likely spread well beyond compromising a single device in the physical possession of law enforcement. A precedent of a company like Apple being forced to weaken consumer protections would very likely then be applied to other channels; what would it mean for iMessage when the authorities identify targets actively communicating but are unable to gain physical access to the device? It sets an alarming precedent, and all the same arguments mounted here by the FBI could just as easily be applied to end-to-end encryption.

But let me finish on a lighter note: this also has the potential to result in greater consumer privacy for everyone. In part because if Apple successfully defends their stance, they’ll have the precedent the next time the issue is raised. In part also because this incident may well prompt them to tie their own hands even further, and indeed this appears to be the case with the newer generation of devices. And finally, because the world is watching how this plays out and it will influence the position of other governments and tech companies outside the US. If sanity prevails, we may well all be better off for having gone through this.