We have a data breach problem. Breaches are constant news headlines, they're impacting all of us and frankly, things aren't getting any better. Quite the opposite, in fact - things are going downhill in a hurry.

Last month, I went to Washington DC, sat in front of Congress and told them about the problem. My full written testimony is in that link and it talks about many of the issues we face today and the impact data breaches have on identity verification. That was really our mandate - understanding the impact on how we verify ourselves - but I want to go back a step and focus on how we tackle data breaches themselves.

Before I left DC, I promised the folks there that I'd come back with recommendations on how we can address the root causes of data breaches. I'm going to do that in a five-part, public blog series over the course of this week. This isn't to try and fit things into a nicely boxed calendar week of drip-fed content, but rather that there are genuinely 5 logical areas I want to focus on and they're each discrete solutions I want to be able to link to specifically for years to come.

Let's get started with one I raised multiple times whilst sitting in front of Congress - education.

Data Breaches Occur Due to Human Error

You know the old "prevention is better than cure" idiom? Nowhere is it truer than with data breaches and it's the most logical place to start this series. The next 4 parts of "Fixing Data Breaches" are all about dealing with an incident once things go badly wrong, but let's start by trying to stop that from happening in the first place.

When I look at the data breaches we see day in and day out, every single one of them can be traced back to a mistake made by humans somewhere along the line. Often multiple mistakes. For example:

I've written before about vBulletin being plagued by SQL injection flaws over the years. This is due to mistakes in the code (usually non-parameterised SQL queries) and to this day, injection remains the number one risk in the OWASP Top 10. The problem is then exacerbated by sites running on the platform not applying patched versions. This is due to a mistake by the administrator and "Using Components with Known Vulnerabilities" is also in the OWASP Top 10.

Particularly since last year, we've seen a huge number of very serious incidents due to leaving data publicly exposed. The Red Cross Blood Service breach gave us our largest ever incident down here in Australia (and it included data on both my wife and me). CloudPets left their MongoDB exposed which subsequently exposed data collected from connected teddy bears (yes, they're really a thing). Pretty much the entire population of South Africa had their data exposed when someone published a database backup to a publicly facing web server (it was accessible by anyone for up to 2 and a half years). Every single one of these incidents was an access control mistake.

Back to another classic vulnerability - direct object references. OWASP had this as a discrete item in their 2013 Top 10 and have now rolled it into "Broken Access Controls". This coding mistake meant that anyone could remotely access trip history and battery statuses of Nissan LEAFs plus control their heating and cooling systems. The same coding mistake in the Kids Pass website enabled personal data to be exfiltrated so long as you knew how to count (then they blocked anyone trying to report the issue to them).

This barely scratches the surface - I've only picked a small sample of incidents I've been directly involved in - but the point is that these are all attributable to people making mistakes.
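To make the direct object reference mistake from the examples above a little more concrete, here's a minimal sketch in Python. Everything in it is hypothetical and for illustration only: the `Trip` record, the `TRIPS` store, the user IDs and the function names are assumptions of mine, not code from any of the incidents mentioned. The unsafe version hands back whatever record matches the ID supplied, which is why "knowing how to count" is all an attacker needs. The safe version adds the one check that was missing: does this record belong to the caller?

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Trip:
    trip_id: int
    owner_id: int
    summary: str


# Hypothetical in-memory store; sequential IDs make records easy to enumerate.
TRIPS = {
    1: Trip(1, owner_id=100, summary="Home to office"),
    2: Trip(2, owner_id=200, summary="Office to airport"),
}


class Forbidden(Exception):
    """Raised when the caller is not allowed to see the requested record."""


def get_trip_unsafe(trip_id: int) -> Trip:
    # VULNERABLE: the record is returned to whoever supplies its ID.
    return TRIPS[trip_id]


def get_trip_safe(trip_id: int, current_user_id: int) -> Trip:
    # The server checks that the authenticated caller owns the record
    # before returning it; a missing record and someone else's record
    # are rejected in the same way.
    trip = TRIPS.get(trip_id)
    if trip is None or trip.owner_id != current_user_id:
        raise Forbidden(f"user {current_user_id} cannot read trip {trip_id}")
    return trip
```

In a real application, `current_user_id` would come from the authenticated session on the server and never from a value the client can simply change in the request.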

People Don't Know What They Don't Know

Security tends to be viewed as a discrete discipline within information technology as opposed to just natively baked into everything. It shouldn't be this way and indeed that's where many of our problems stem from. But part of the challenge here is that people simply don't know that there's a big part of their knowledge missing. They're at the "unconscious incompetence" stage in the hierarchy of competence:

In other words:

The individual does not understand or know how to do something and does not necessarily recognise the deficit. They may deny the usefulness of the skill.

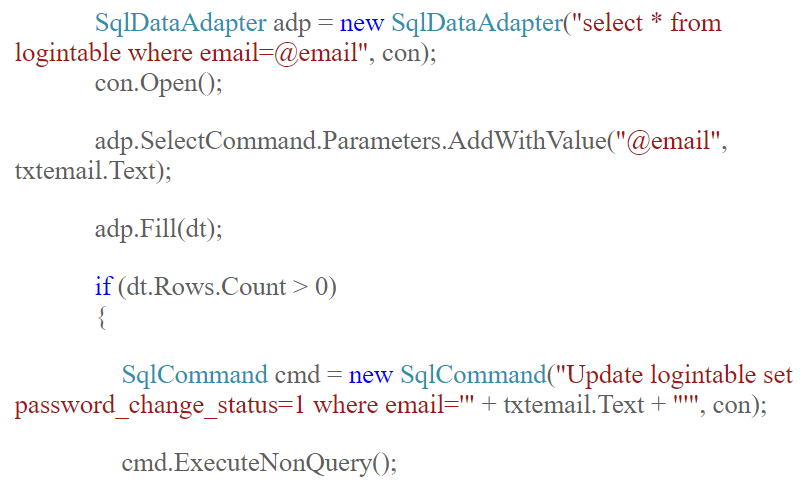

Let me demonstrate precisely the problem: have a look at this code from a blog post about how to build a password reset feature (incidentally, read the comment from me and you'll understand why I'm happy sharing this here):

There are two SQL statements here: the first one is resilient to SQL injection. The second one will lead to your database being pwned to the worst possible extent. In this case, "worst" is seriously bad news because the blog post also shows how to connect to the database with the sa account (i.e. "god rights"). Oh - and it uses a password of 12345678.
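For anyone who hasn't seen the difference between those two styles of query side by side, here's a rough sketch of the pattern. It is not the code from that blog post: I'm using Python with the standard library's `sqlite3` module as a stand-in, and the `users` table, its columns and the function names are all hypothetical. The first function concatenates user input into the SQL text, the second passes it as a parameter.

```python
import sqlite3


def build_demo_db() -> sqlite3.Connection:
    """Create an in-memory database with two hypothetical user accounts."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (email TEXT PRIMARY KEY, reset_token TEXT)")
    conn.executemany(
        "INSERT INTO users (email, reset_token) VALUES (?, ?)",
        [("alice@example.com", "token-a"), ("bob@example.com", "token-b")],
    )
    conn.commit()
    return conn


def find_user_unsafe(conn: sqlite3.Connection, email: str) -> list:
    # VULNERABLE: user input is concatenated straight into the SQL text,
    # so the input can change the structure of the query itself.
    query = "SELECT email FROM users WHERE email = '" + email + "'"
    return conn.execute(query).fetchall()


def find_user_safe(conn: sqlite3.Connection, email: str) -> list:
    # Parameterised: the value is passed separately from the SQL text,
    # so it is only ever treated as data.
    return conn.execute(
        "SELECT email FROM users WHERE email = ?", (email,)
    ).fetchall()


if __name__ == "__main__":
    conn = build_demo_db()
    payload = "nobody@example.com' OR '1'='1"
    print("unsafe:", find_user_unsafe(conn, payload))
    print("safe:  ", find_user_safe(conn, payload))
```

With that payload, the concatenated query matches every row in the table while the parameterised one matches none, because no account has that literal string as its email address. Note that the safe version is no harder to write than the unsafe one.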

The point I'm making here is that there are plenty of folks out there who may well have the best of intentions, but they have no idea that they have a massive deficiency in their competency. Until they're exposed to that - until they're given the opportunity to learn - they remain in that first stage of competency.

Education is the Best ROI on Security Spend. Ever.

There are a lot of security companies that will sell you a lot of security things for a lot of money. Some of them are very good too, but they can be significant outlays for products that often perform very limited duties.

There are 3 aspects of education I want to focus on here in terms of ROI:

Firstly, on the investment side, it's cheap. Whether it's Pluralsight for less than a dollar a day, a workshop like my upcoming 2-day one in London or any of a multitude of other resources, the dollar outlay is a fraction of many of the products the organisational security budget goes on.

Secondly, on the return side, not writing vulnerable code has enormous upside. The cost of a data breach can be a hard thing to quantify, but one precedent we have is from TalkTalk who claimed their incident cost them £42m. Now keep in mind that this was a basic SQL injection attack mounted by a child (literally - he was 16 years old) and the code he exploited would have been very similar to that in the image above from the password reset article.

Finally, education is so cost effective because you leverage it over and over again. Penetration tests are awesome but you're $20k in the hole and you've tested one version of one app. Web application firewalls can be great and they sit there and (usually) protect one asset. Both of these are enormously valuable and they should be a part of any software delivery process, but education is the spend that keeps on paying dividends over and over again.

But there's another thing about investing in education that stretches that dollar even further, and it all has to do with stages of the app lifecycle.

Education Smashes Bugs While They're Cheap

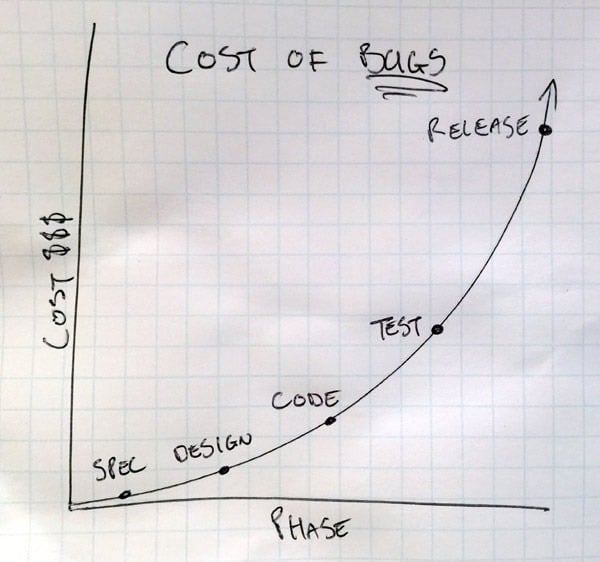

Think about this in the context of maximising the benefit each dollar spent brings an organisation and consider this question: where is the best possible time in the project lifecycle to fix security bugs? The answer is the same as it for bugs of any kind and it's perfectly illustrated by the cost of bugs over time:

Consider SQL injection because it's come up a few times here already: thinking back to the image above where we saw both good and bad code right next to each other, writing it correctly in the first place is free - there's zero additional cost at development time over and above writing it poorly.

But what if you only realise there's a problem during QA? Now you need to rewrite the code so there's effort involved there, but you also need to re-test it and ensure you've not broken anything else. (Yes, automated testing makes that a lot easier but let's face it, the types of companies writing the sort of code we saw earlier aren't exactly TDD'ing their way to software development enlightenment!) The effort of fixing that bad code is now an order of magnitude higher than not screwing it up in the first place.

Take it a step further - what if the software is already live and then you find the bug? Now we take all the effort from the previous point and add a deployment dependency as well. (And again, yes, deployments should be fully automated low-friction processes you can repeat many times a day without disruption, but refer to the previous comments on the types of companies most at risk of writing bad code.) Whichever way you cut it, there's more effort, more risk and more cost involved as you progress through the app lifecycle.

And then there's the worst-case scenario: it's the previous point plus you add someone finding the vulnerable code then exploiting the application. What does that now cost? Well if you're TalkTalk it's apparently £42m! One line of bad code that could have been easily avoided early on in the lifecycle made the difference between business as usual and a major security incident. The difference is education.

Summary

I'm conscious I have a vested interest in pushing education given what I do with Pluralsight and workshops, but I have that interest because it's just such a fundamentally sensible idea to focus on it. Everything else that follows in the next 4 parts of this series only makes sense when we get this first part wrong.

So that's education. More on the next part of the series coming tomorrow!