Apparently the average number of apps someone has on their smartphone is 41. It sounds like a lot but do the maths on how long you’ve had the phone (or a predecessor) and you realise it’s a pretty low frequency of taking something new from the app store. A significant proportion of these apps allow you to share sensitive personal information with them: your home address, phone number, email and password, for example. Or they provide features that result in cash changing hands such as online shopping. But how do you know which apps are securely handling this information? How, for example, do you know which of these employs a satisfactory security level?

When you visit a website in the browser on your PC, there are tell-tale security indicators such as the presence of a padlock in the address bar. Find yourself on a site with any number of potential security issues and the browser will actually step in and either block the risk or very overtly tell you that this probably isn’t somewhere you want to be doing business. Not so in mobile apps though and as it turns out, each of the ones in the image above has serious security flaws that would immediately turn many people off if they saw them in the desktop world.

The reality is that a huge percentage of mobile apps of all sorts of persuasions have serious security issues for a variety of reasons. I decided to take a closer look at just a handful of local Aussie ones to see where things are going wrong down under.

Entirely missing connection encryption

Wireless internet connections have become ubiquitous and for very good reason. The prevalence of mobile devices and online services coupled with high mobile data usage costs mean consumers are naturally going to seek out wireless hotspots wherever possible. The thing is though, once you’re connected to someone else’s Wi-Fi then all your unprotected traffic is essentially at their disposal; they can read – or change – any of it.

There are obvious examples of where consumers readily and consciously connect to public hotspots: cafes, restaurants and shopping centres all frequently offer this free service. But there are less obvious examples of connecting to wireless hotspots too, for example an attacker may stand up a wireless hotspot called “Free WiFi” and whilst they might indeed provide free internet, they’re also harvesting everything victims send through there. Oh – and all they need is a consumer laptop, hardly specialised gear.

Or they might step it up a notch and invest in something like a Wi-Fi Pineapple for $100. This little piece of equipment will cause many wireless devices to automatically connect to it without the user even taking their phone out of their pocket. Once connected, it’s the same deal – all the traffic can be read or manipulated by an attacker.

Then of course there’s the fact that even without wireless, unencrypted network traffic remains at risk. ISPs, for example, have the ability to obtain unfettered access to the data flowing through their services and certainly there are precedents of them abusing that access. This can also happen with your desktop computer connected via ethernet directly to your ADSL modem.

This is why we have encryption of the transport layer which we’ll often refer to as SSL or see represented as an address that starts with https. When implemented properly, SSL gives us confidence in who we’re connecting to and that the data hasn’t been modified or read during transit between the device and the server. This is why you’ll see all your banking sites on HTTPS addresses when you login via the browser, but it’s a bit harder to see what mobile apps are doing. What SSL means is that even when the connection has been compromised (and that’s a premise that we always need to work on), the traffic remains secure.

Mobile apps talking across the internet (which a significant portion of them do), require the same level of protection that browsers on a PC do. In other words, any transmissions of a sensitive nature such as sending passwords, retrieving personal information or performing transactions, must occur across an encrypted connection. But many don’t.

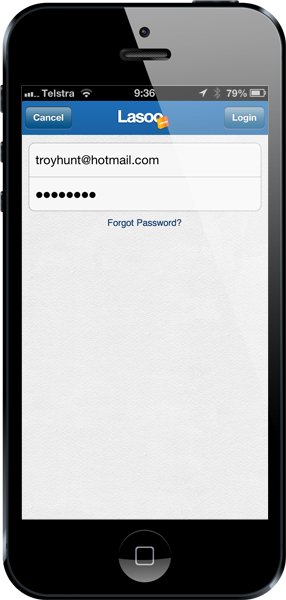

Take the Lasoo app:

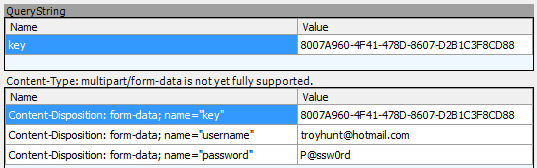

This is a pretty typical login screen. What I’ve done though before opening this app is connected my iPhone to a network that’s watching the traffic. It’s my own network but it’s entirely reflective of what an attacker can do. If the security is implemented correctly then I – with my attacker hat on – won’t be able to see any sensitive data when I attempt to login as the encryption will protect it between the phone and Lasoo’s server. But if it’s not, I get to see stuff like this:

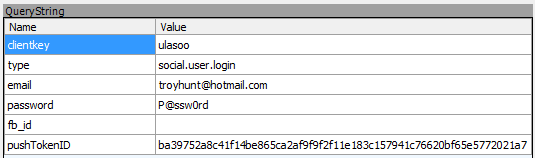

This is what an attacker intercepting the traffic can see – no protection whatsoever, they haven’t even attempted to encrypt my credentials. There’s no warning of this and these days it’s fair to say that there’s an assumption that mobile apps will implement basic defences such as connecting to HTTPS addresses. Actually this case is even worse than that because not only is the request sent over an unencrypted connection, it also sends the username and password in the URL via a query string which means it almost certainly gets logged at all sorts of points along the way. This sort of data normally gets sent in the header or body of a request but by putting it in the URL itself, by default it will end up in web server logs and quite possibly in the logs of things like proxy servers or ISPs involved in the communication. In other words there is almost certainly a treasure trove of Lasoo customers’ passwords (which statistically will also more likely than not be the same password they’ve used in other places) floating around various places on the internet.
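To make those two problems concrete, here is a minimal Python sketch of the checks you could run against a captured login request. The function name `audit_login_request`, the set of sensitive parameter names and the example URLs are all hypothetical; none of them come from Lasoo's actual API.

```python
from urllib.parse import urlsplit, parse_qs

# Hypothetical parameter names that commonly carry secrets.
SENSITIVE_PARAMS = {"password", "passwd", "pwd", "token"}


def audit_login_request(url: str) -> list:
    """Return a list of transport-level problems found in a request URL."""
    problems = []
    parts = urlsplit(url)

    # Problem 1: anything other than HTTPS travels in the clear.
    if parts.scheme.lower() != "https":
        problems.append("request is not sent over HTTPS")

    # Problem 2: secrets in the query string end up in server and proxy logs.
    query_keys = {key.lower() for key in parse_qs(parts.query, keep_blank_values=True)}
    leaked = sorted(query_keys & SENSITIVE_PARAMS)
    if leaked:
        problems.append("credentials in query string: " + ", ".join(leaked))

    return problems


# Hypothetical URLs for illustration only.
bad = audit_login_request("http://api.example.com/login?email=a@b.c&password=hunter2")
good = audit_login_request("https://api.example.com/login")
```

Here `bad` reports both issues while `good` comes back empty, because the second request uses an https address and would carry the credentials in the request body rather than the URL.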

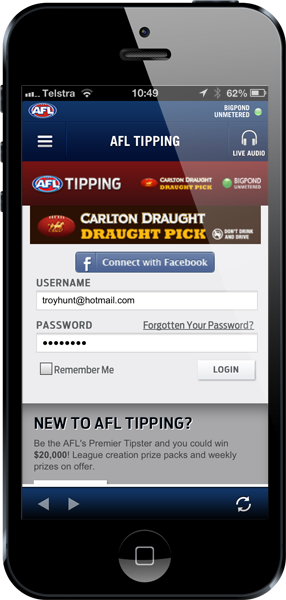

How about something a little different – say the AFL app and a bit of footy tipping:

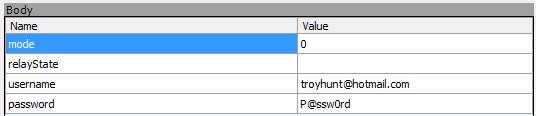

Unfortunately absolutely no security there either:

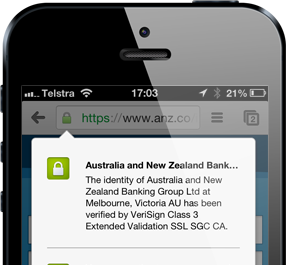

Both Lasoo and the AFL example are mobile apps which means how they connect to services over the internet is ingrained within the application code – you see nothing in the app itself to illustrate the security position of the connection. Compare that to opening a site like ANZ bank in the browser on an iPhone:

This is a very different security proposition because we get explicit reassurance from the browser that the connection is encrypted by virtue of the padlock above the address bar. In fact some mobile browsers such as Chrome on iOS will even allow you to click the padlock and see that not only is the connection properly encrypted, but who you’ve connected to:

This is important because it’s not enough just to have the connection encrypted, you don’t simply want to have a secure connection directly to an attacker! No, you also need assurance of identity which brings me to certificate validation.

Failure to validate security certificate

Let me explain this problem with a non-digital paradigm; back in the day, important messages would be enclosed in an envelope which was then emblazoned with a wax seal:

The seal would contain an emblem identifying the sender and should the recipient receive the envelope with the wax still intact then in theory they could be confident that the message hadn’t been read or tampered with by anyone along its journey. However, this only works if the recipient actually looks at the seal and ensures that it is indeed from the sender.

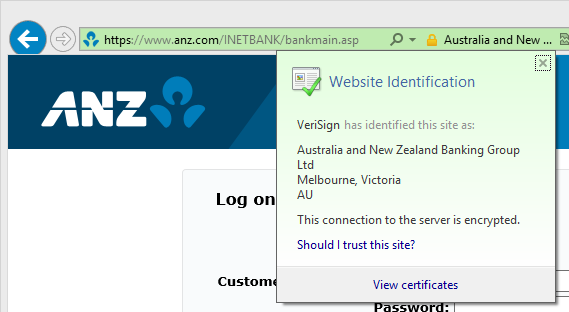

Today’s digital parallel is the SSL certificate and you can see it in the browser each time you load a webpage with an address starting with https – it looks just like this one from ANZ:

Here we can see the name of the certificate owner in the address bar which has now turned green (this is a feature of “Extended Validation” certificates which require greater identity due diligence on behalf of the owner) then we can see the message beneath that asserting the identity of the site as being “Australia and New Zealand Banking Group Ltd”. This is the equivalent of inspecting an unadulterated wax seal.

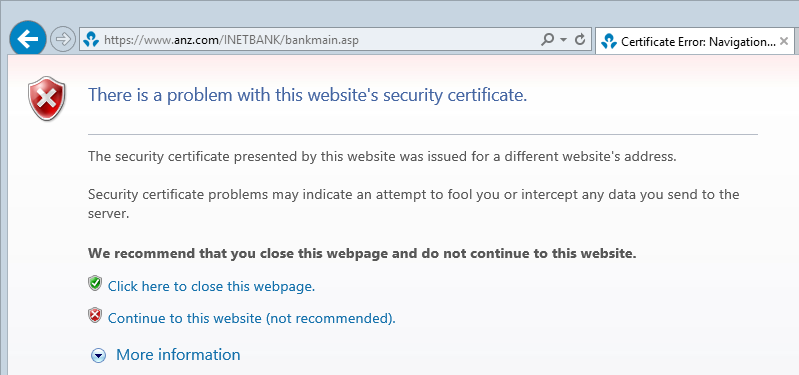

Now let’s tamper with the seal and to do that I’m going to intercept the message then apply another seal to it which means it now looks like this:

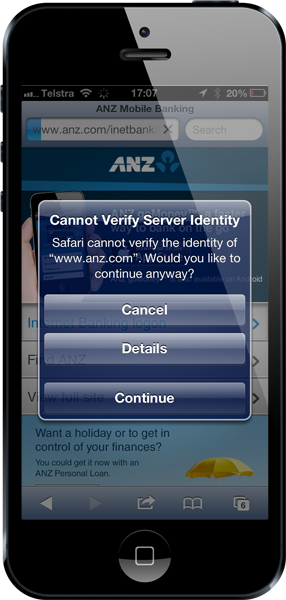

There is absolutely no mistaking that something is now very wrong. Yes, the connection is still encrypted but it’s not going through to who you think it is. You can see the same thing in mobile browsers:

This is effectively a broken seal – the identity is no longer correct and a human can clearly see this and take appropriate action which is always to get the hell out of there!

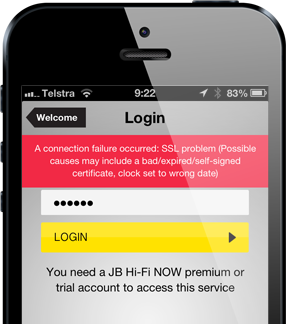

In mobile apps it’s up to the app itself to validate the certificate and most will do that implicitly when they request a resource off a secure connection on the internet. For example, here’s what JB Hi-Fi does if we try to get in the middle of the conversation:

That’s a perfect response – give the user a warning and prohibit them from proceeding. Something is wrong with this communication – beware!

But now let’s try logging on to Aussie Farmers Direct:

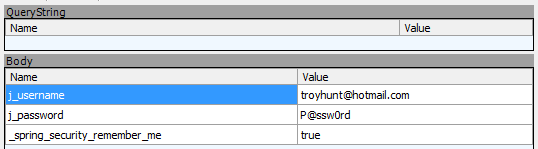

The login goes through just fine. Even though the credentials are posted to a secure address, the app never actually checks the certificate which allows an attacker to issue their own and then inspect (or manipulate) the data. Here’s what an attacker can see when you login to the app:

The transport layer security is rendered utterly useless. It’s like applying the wax seal but then the recipient never checking it; the seal is from completely the wrong person yet the message still gets processed as usual. That’s a serious security flaw.
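The difference between checking the seal and ignoring it usually comes down to a couple of settings. The sketch below uses Python's standard `ssl` module purely as an illustration; the apps discussed here are mobile apps and I haven't seen their source, so the helper names `strict_context`, `broken_context` and `validates_certificates` are hypothetical.

```python
import ssl


def strict_context() -> ssl.SSLContext:
    """TLS context that verifies the certificate chain and the hostname."""
    # create_default_context() enables both checks out of the box.
    return ssl.create_default_context()


def broken_context() -> ssl.SSLContext:
    """What NOT to do: a context that accepts any certificate from anyone."""
    ctx = ssl.create_default_context()
    ctx.check_hostname = False       # must be disabled before CERT_NONE can be set
    ctx.verify_mode = ssl.CERT_NONE  # skips chain validation entirely
    return ctx


def validates_certificates(ctx: ssl.SSLContext) -> bool:
    """True only if the context checks both the chain and the hostname."""
    return ctx.verify_mode == ssl.CERT_REQUIRED and ctx.check_hostname
```

The point of the sketch is that the safe behaviour is the default: someone has to write the two lines in `broken_context` deliberately (often to get a self-signed test certificate working) and then forget to take them out.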

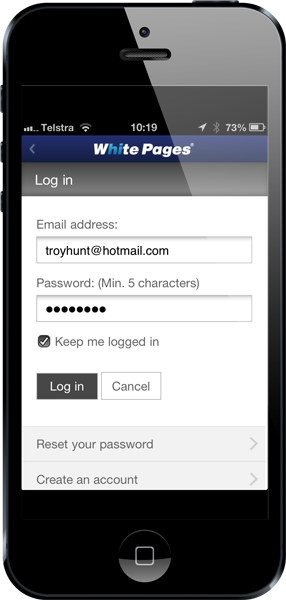

Here’s the White Pages app:

And when you attempt to login:

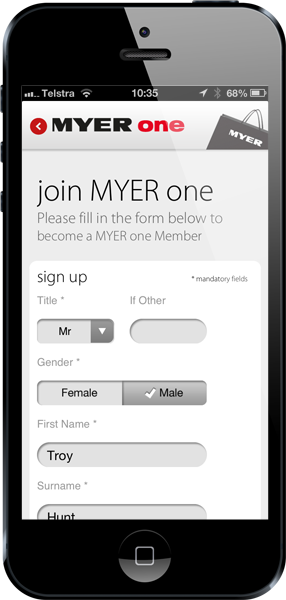

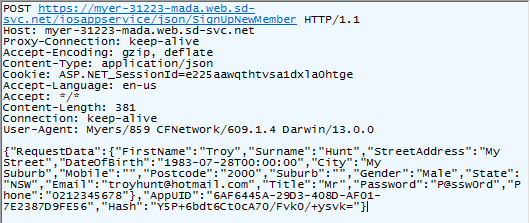

The same again with the Myers app where it encourages you to register:

Yet fails to properly protect any of the very useful data you’ve just handed over:

Many other local apps I tested happily validated the certificate and responded appropriately when things were wrong – Coles and Woolworths, for example, behaved great and responded in a similar way to JB earlier on. But for the three above, the transport security is rendered entirely useless due to a simple misconfiguration.

Manipulation of data in transit

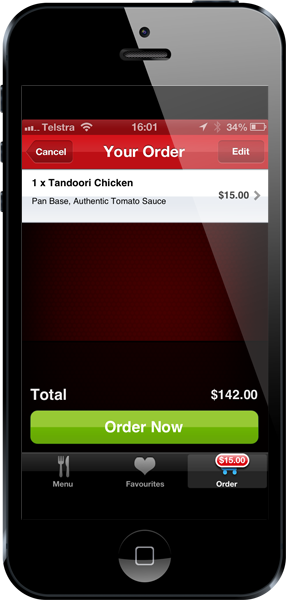

The idea of the seal I mentioned in the last section is not just to stop people from opening the message and reading the contents, it’s also there to stop someone from manipulating the contents then passing the message on like nothing has happened. No seal, no confidence in message integrity. What that means is that you can do things like this:

This is the local Aussie Pizza Hut app and as you can see, I’m ordering a $15 Tandoori Chicken pizza that comes to a grand total of $142. The reason that $15 x 1 comes out to $142 is because the services which manage the shopping process are insecure and can be manipulated. I’ve been a bit generous here and rounded the total up a fair whack, others might not be so generous... Obviously I didn’t go through with ordering a $142 pizza so what happens downstream of here is yet to be seen, but the very fact that information that leads to a financial transaction can be manipulated in flight is extremely worrying.

The thing about this example is that the risk is not necessarily just about an attacker targeting a consumer (although certainly there are many angles where they might), in a case like this it’s entirely possible for an attacker to target the organisation providing the app. Of course this also depends on the reconciliation process within the order fulfilment within Pizza Hut’s servers, but it’s clear that an attacker can easily manipulate the data being transferred between app and server.
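As a rough sketch of what that server-side reconciliation might look like, the Python below recomputes the order total from prices held on the server and rejects anything the client sent that doesn't match. This is an assumption about a sensible design, not a description of Pizza Hut's systems; the `CATALOGUE` contents and the `reconcile_order` function are hypothetical.

```python
from decimal import Decimal

# Hypothetical server-side price list; the price mirrors the pizza in the example above.
CATALOGUE = {"tandoori-chicken": Decimal("15.00")}


def reconcile_order(lines: dict, client_total: Decimal) -> Decimal:
    """Recompute the total from server-side prices and reject a mismatch.

    `lines` maps an item id to a quantity.
    """
    server_total = Decimal("0")
    for item_id, quantity in lines.items():
        if item_id not in CATALOGUE:
            raise ValueError(f"unknown item: {item_id}")
        if quantity < 1:
            raise ValueError(f"invalid quantity for {item_id}: {quantity}")
        server_total += CATALOGUE[item_id] * quantity

    # Never trust a total that travelled over the network from the client.
    if server_total != client_total:
        raise ValueError(
            f"client total {client_total} does not match server total {server_total}"
        )
    return server_total
```

With a check like this, one pizza submitted with a total of $142 raises an error instead of becoming an order. It limits the damage to the retailer, but it does nothing for the customer whose credentials are still travelling unencrypted.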

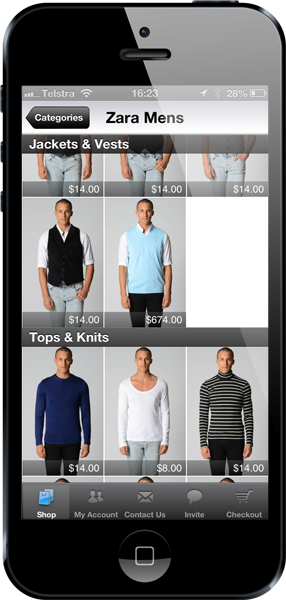

Another example is Ozsale:

That’d want to be a damn nice turquoise vest! Ozsale does their payment fulfilment through PayPal which has proven to be a rather robust service, the question of course is whether the ability to manipulate data between the app and the server can impact the payment information then sent on to PayPal. Certainly the data can be manipulated, in fact in both the Pizza Hut and Ozsale apps there’s no protection whatsoever on the data flowing over the network and that includes on the login credentials.

Even without the potential ability to impact financial transactions, the very fact that shopping applications fail to implement even the most basic of security protections is astounding. And this is indeed what protecting network traffic is – a basic task. I’ll stress again that without actually completing a purchase you can’t be sure whether this sort of data manipulation will remain in the scope of the app or actually persist through into the order lodged on the server, but it does provide attackers with lots of options they can pursue against both consumers and retailers.

These are serious, simple problems

Perhaps the greatest frustration for security professionals and competent software developers alike is that these are ridiculously simple risks to avoid. We often see SSL implemented “insufficiently” in the browser for web applications because there are a few twists and turns in terms of how to properly use it and there are also circumstances which make it difficult to consistently employ.

Mobile apps pose an altogether simpler proposition though – getting SSL right is 99% about having a valid certificate on the server (prices for these start from $0), serving the services used by the app over a secure HTTPS connection and ensuring that the app doesn’t trust invalid certificates (which is the default position for most programming languages anyway).

How is it that we end up in this position? Well with 900,000+ apps in the Apple app store and Google’s Play store now apparently having broken the 1 million mark for Android apps, there are bound to be some with issues out there. But this is more than that – of the apps I tested I was actually surprised when one didn’t illustrate the most basic of security flaws.

You often hear this phrase “Minimum Viable Product” or MVP, in other words, what’s the least amount of effort required to get to market quickly and capitalise on being a leader in the shopping / tipping / pizza industry. Most of us in the software industry are familiar with the Time, Cost, Quality triangle – you can only pick two so guess which one is going to get deprioritised first? Exactly, the question is how much customers will pay when the quality of security is compromised in a rush to get the latest shiny new app out there.