I started building ASafaWeb – the Automated Security Analyser for ASP.NET websites – about a year back to try and automate processes I found I kept manually doing, namely checking the security configuration of ASP.NET web apps. You see, the problem was that I was involved in building lots of great apps but folks would often get small security configuration details wrong: a missing custom errors page, stack traces bubbling up or request validation being turned off, among numerous other web app security misdemeanours.

The thing is, all these things are very easily detected remotely without any access to the source code; you just need to make a few HTTP requests and draw some conclusions based on the structure of the responses. I wanted to make it dead easy for people to run these scans on their sites, so I built ASafaWeb, put a great big text box on the front page to take a URL and said “Here you go”. This was, and still is, ASafaWeb on demand:

Since December 2011 when I began logging de-identified data, ASafaWeb has racked up over 27,000 on demand scans. When I analysed the scan results a few months ago, two thirds of websites were found to have serious configuration vulnerabilities. Clearly ASafaWeb was finding a lot of problems with ASP.NET websites.
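As a rough illustration of the “few HTTP requests” idea, here is a minimal Python sketch of the plumbing such checks need. It is not ASafaWeb’s code, and the helper names are hypothetical: build a URL for an .aspx page that almost certainly doesn’t exist, request it, and keep the status, headers and body even when the server responds with an error.

```python
import urllib.error
import urllib.request
import uuid
from urllib.parse import urljoin


def missing_page_url(site: str) -> str:
    """Build a URL for a random .aspx page that almost certainly doesn't exist."""
    # Ensure a trailing slash so urljoin appends rather than replacing the last path segment.
    base = site if site.endswith("/") else site + "/"
    # A random name avoids accidentally hitting a real page (hypothetical naming scheme).
    return urljoin(base, f"{uuid.uuid4().hex}.aspx")


def fetch(url: str, timeout: float = 10.0) -> tuple[int, dict[str, str], str]:
    """Return (status, headers, body) without raising on 4xx/5xx responses."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            return resp.status, dict(resp.headers), resp.read().decode("utf-8", "replace")
    except urllib.error.HTTPError as err:
        # Error responses are exactly what many configuration checks need to inspect.
        return err.code, dict(err.headers), err.read().decode("utf-8", "replace")
```

Note that `urlopen` follows redirects, so a site whose custom errors redirect to a friendly page will come back as the redirect target’s response; a real check would probably want to observe the redirect itself.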

But one thing that continued to nag me was that websites aren’t static; a website passing a scan today in no way guarantees it will pass tomorrow. The ease of configuration in ASP.NET remains both a strength and a weakness, and every time we release there’s a risk that a configuration change has introduced a vulnerability. This is why today I’m releasing the next phase of the ASafaWeb beta to the public, the scheduler:

It should be apparent from its name, but my intention with the scheduler is not only to make sure a site is “safe” today, but that it remains that way. That’s my need, and I believe it’s the need of many others too.

Use cases

Let me outline some cases where a scheduler makes sense. One that I frequently see is when developers are troubleshooting unhandled exceptions on the site. A well-configured website has custom errors turned on and a default redirect set to show a friendly error page when things go wrong. Problem is, this doesn’t tell you exactly what the root cause is, which is exactly the way it should be. Internal error messages should never be shown to the public. So the developer turns custom errors off, publishes the web.config and identifies the root cause. Then forgets to turn them back on.

What the ASafaWeb scheduler does is ensure that if this scenario does arise, you find out about it early, because the site is regularly tested for configuration vulnerabilities like this. Of course, a better option for the developer is to use ELMAH to log those errors somewhere only visible to them, so long as they keep it secure. And there’s our next use case: it’s easy to configure ELMAH wrong (see that previous link), and even if you believe the security configuration is good, the guidance can change over time, as the wash-up from my post showed.
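Spotting the forgotten custom errors scenario from the outside largely comes down to recognising ASP.NET’s default error pages. Below is a heuristic Python sketch; the marker strings are assumptions based on the standard “Server Error in '/' Application.” page and its generic “Runtime Error” variant, and a real check would need to be considerably more careful than simple substring matching.

```python
from enum import Enum


class ErrorExposure(Enum):
    DETAILED = "custom errors off: ASP.NET error details exposed"
    GENERIC = "custom errors on, but no friendly error page configured"
    NOT_DETECTED = "no default ASP.NET error page detected"


# Assumed marker strings, based on the default ASP.NET error pages.
DEFAULT_PAGE_MARKER = "Server Error in '"
GENERIC_PAGE_MARKER = "Runtime Error"


def classify_error_page(body: str) -> ErrorExposure:
    """Heuristically classify the body of an ASP.NET error response."""
    if DEFAULT_PAGE_MARKER not in body:
        # A custom page (or a non-ASP.NET site) is being served instead.
        return ErrorExposure.NOT_DETECTED
    if GENERIC_PAGE_MARKER in body:
        # Custom errors are on for remote users, but there's no default redirect.
        return ErrorExposure.GENERIC
    # Any other default error page reveals internal details (e.g. a stack trace).
    return ErrorExposure.DETAILED
```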

Another classic use case is new vulnerabilities. The hash DoS issue from December caught us all by surprise but the day after Microsoft patched it, ASafaWeb was able to remotely detect the presence of the patch. Now with the scheduler, when something like this next happens (and remember, hash DoS wasn’t the first such incident) ASafaWeb will be able to identify non-patched sites and alert those who have configured a schedule in next to no time.
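The post doesn’t describe how the patch is detected, so treat the following as an assumption rather than ASafaWeb’s method. The MS11-100 update introduced a default cap of 1,000 form keys per request (the `aspnet:MaxHttpCollectionKeys` setting), so one plausible remote probe is to POST a body with slightly more keys than that and compare the response with an ordinary request. This sketch only builds the body and interprets status codes; a site with custom errors on may redirect rather than return a 500, which this simple heuristic ignores.

```python
from urllib.parse import urlencode

# Assumption: MS11-100 caps form keys at 1,000 by default (aspnet:MaxHttpCollectionKeys).
DEFAULT_MAX_KEYS = 1000


def many_keys_body(count: int = DEFAULT_MAX_KEYS + 1) -> bytes:
    """Build an application/x-www-form-urlencoded body with `count` distinct keys."""
    return urlencode({f"k{i}": "" for i in range(count)}).encode("ascii")


def looks_patched(baseline_status: int, probe_status: int) -> bool:
    """Heuristic: a patched server rejects only the over-limit request."""
    # If both requests fail, or both succeed, the comparison tells us nothing useful.
    return baseline_status < 500 and probe_status >= 500
```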

Setting up a schedule

So how do you set up an ASafaWeb schedule? I’ve made this as easy as possible with an absolute bare minimum of friction. Everything you need to do is only there because it wouldn’t work without it and I want to explain each of those steps now.

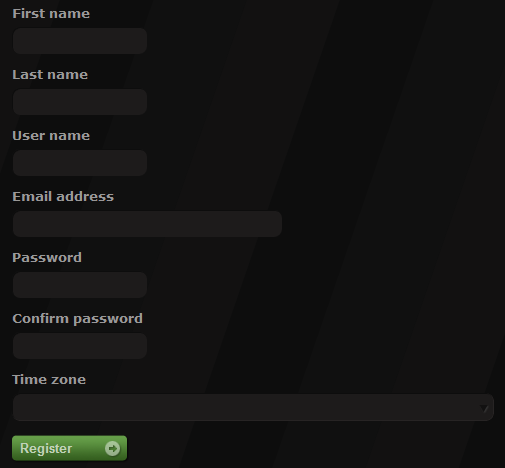

Firstly, you’ll need to register. There are a few reasons for this. You need some means of accessing your personal scans, and you need to leave an email address so you can be contacted after a scan runs if required. Finally, the scheduler allows you to run lots of scans on a regular basis and there needs to be some form of accountability for this: you need to identify yourself (more on that shortly). Moving on, here’s what you’re asked for when registering:

The time zone is probably the only field whose purpose isn’t immediately obvious. When you schedule a scan, everything is converted back to your local time, and the only way ASafaWeb can do that is by knowing what “local” means to you.
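Storing schedule times in UTC and converting at the edges is one straightforward way to handle “local”. This Python sketch assumes that design (the post doesn’t say how ASafaWeb stores times) and uses a fixed UTC+10 offset purely so the example doesn’t depend on the system’s time zone database:

```python
from datetime import date, datetime, time, timedelta, timezone, tzinfo

# Fixed UTC+10 offset for illustration only (e.g. Sydney outside daylight saving).
AEST = timezone(timedelta(hours=10))


def local_to_utc(day: date, at: time, user_tz: tzinfo) -> datetime:
    """Convert a user's local scheduled time on a given day to UTC for storage."""
    local = datetime.combine(day, at, tzinfo=user_tz)
    return local.astimezone(timezone.utc)


def utc_to_local(moment: datetime, user_tz: tzinfo) -> datetime:
    """Convert a stored UTC time back to the user's local time for display."""
    return moment.astimezone(user_tz)
```

In practice you would pass something like `zoneinfo.ZoneInfo("Australia/Sydney")` (Python 3.9+) instead of a fixed offset, so daylight saving is handled.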

After you sign up you’ll need to verify your email address. You’ve all seen this in action on other sites before – right after signup you get an email with a “click here to verify” link then you’re in. This comes back to the accountability again; there is a risk of abuse and verification of email goes some way to mitigating that.
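ASafaWeb’s verification mechanism isn’t described, but one common way to implement a “click here to verify” link is an HMAC of the address under a server-side secret, so nothing extra needs storing until the link is clicked. A minimal Python sketch, with a hypothetical randomly generated key and no expiry (a real implementation would normally add one):

```python
import hashlib
import hmac
import secrets

# Hypothetical server-side secret. Generated at start-up here, so a restart invalidates
# outstanding links; in practice it would be loaded from configuration.
SECRET_KEY = secrets.token_bytes(32)


def verification_token(email: str) -> str:
    """Create the token to embed in the 'click here to verify' link."""
    normalised = email.strip().lower().encode("utf-8")
    return hmac.new(SECRET_KEY, normalised, hashlib.sha256).hexdigest()


def verify(email: str, token: str) -> bool:
    """Check a token from the link using a constant-time comparison."""
    return hmac.compare_digest(verification_token(email), token)
```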

Once you’re verified, you can immediately log in and start creating scheduled scans from the “Schedule” link in the navigation:

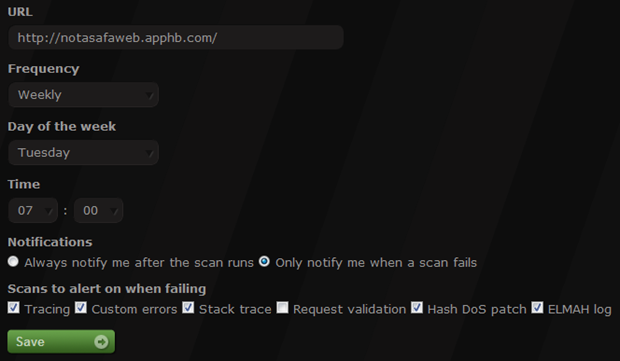

You won’t have any scheduled scans to begin with, so the first thing you’ll see is a great big “Create” link. Here’s what’s behind that (I’ve pre-filled it to indicate the purpose of each field):

Let me run you through what’s going on here. Firstly, you need a URL, that much is obvious. What’s not immediately obvious is that the URL must be unique per user; you can’t effectively run the same scan every hour by creating 24 daily schedules for the same URL.
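A per-user uniqueness rule is only as good as its idea of “the same URL”. Here is a minimal sketch, assuming a hypothetical in-memory store and a deliberately simple normalisation rule (lower-case scheme and host, trailing slash ignored); ASafaWeb’s actual rules aren’t documented here:

```python
from urllib.parse import urlsplit


def normalise_url(url: str) -> str:
    """Reduce trivially different spellings of a URL to one form (an assumed rule set)."""
    parts = urlsplit(url.strip())
    path = parts.path.rstrip("/") or "/"
    query = f"?{parts.query}" if parts.query else ""
    return f"{parts.scheme.lower()}://{parts.netloc.lower()}{path}{query}"


class ScheduleBook:
    """Hypothetical store enforcing one schedule per URL per user."""

    def __init__(self) -> None:
        self._urls: dict[str, set[str]] = {}

    def add(self, user: str, url: str) -> bool:
        """Return True if the schedule was added, False if it's a duplicate."""
        key = normalise_url(url)
        urls = self._urls.setdefault(user, set())
        if key in urls:
            return False  # this user already has a schedule for this URL
        urls.add(key)
        return True
```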

Speaking of schedules, you can schedule scans to run either every day or every week. When they’re weekly you can choose the day of the week, and in both cases you choose the time of day you want it to run, right down to a five-minute interval. This allows you to schedule the scan around a release cycle or a weekly meeting, or simply so it’s waiting for you first thing each morning.
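Working out when a daily or weekly schedule should next fire is a small calculation. A Python sketch, assuming naive times in the user’s local time zone and Python’s weekday numbering (Monday is 0); it doesn’t deal with daylight saving transitions:

```python
from datetime import datetime, timedelta
from typing import Optional


def next_run(now: datetime, hour: int, minute: int, weekday: Optional[int] = None) -> datetime:
    """Return the next scheduled run strictly after `now`.

    weekday=None means daily; otherwise 0=Monday ... 6=Sunday.
    """
    if minute % 5 != 0:
        raise ValueError("minute must fall on a five-minute boundary")
    candidate = now.replace(hour=hour, minute=minute, second=0, microsecond=0)
    if weekday is None:
        # Daily: today if the time is still ahead, otherwise tomorrow.
        if candidate <= now:
            candidate += timedelta(days=1)
        return candidate
    # Weekly: move forward to the requested day of the week.
    candidate += timedelta(days=(weekday - now.weekday()) % 7)
    if candidate <= now:
        candidate += timedelta(days=7)
    return candidate
```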

Notifications are ASafaWeb’s way of communicating scan results to you. You can either be implicit or explicit about how you wish to be communicated with. The explicit approach is to be notified every single time the schedule runs regardless of whether it finds anything or not. It means you can get positive feedback on the state of your site(s) without wondering if the thing is working simply because it didn’t find a problem. Of course the inverse approach is to only be notified when something has gone wrong. In fact initially this is what I thought most people would want – just tell me after I’ve broken it – but feedback from the private beta was that some people want the extra communication, or at least the ability to choose.

If you do elect to only be notified once something goes wrong, you then need to choose what needs to go wrong in order to be notified. Let me give you an example: say you’ve got a DotNetNuke site and you want to set up an ASafaWeb schedule. Now unfortunately DotNetNuke will always fail the request validation scan so being able to choose to ignore this one is important.
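The notification choice boils down to a small decision function. A sketch with hypothetical scan names; for the DotNetNuke example, the request validation scan simply isn’t in the watched set:

```python
from dataclasses import dataclass, field


@dataclass
class NotificationPrefs:
    always: bool  # True: notify after every run; False: only when something fails
    # Scans whose failure should trigger a notification (hypothetical scan names).
    watched: set[str] = field(default_factory=set)


def should_notify(prefs: NotificationPrefs, failed_scans: set[str]) -> bool:
    """Decide whether a completed scheduled run warrants an email."""
    if prefs.always:
        return True
    # Only failures in scans the user chose to watch count.
    return bool(failed_scans & prefs.watched)
```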

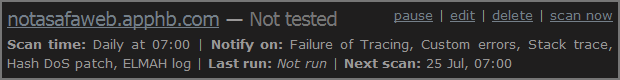

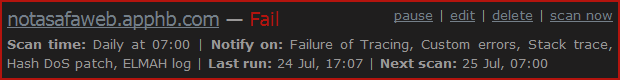

Once you’ve got the scan set up it will appear back on the schedule page along with any future ones you add:

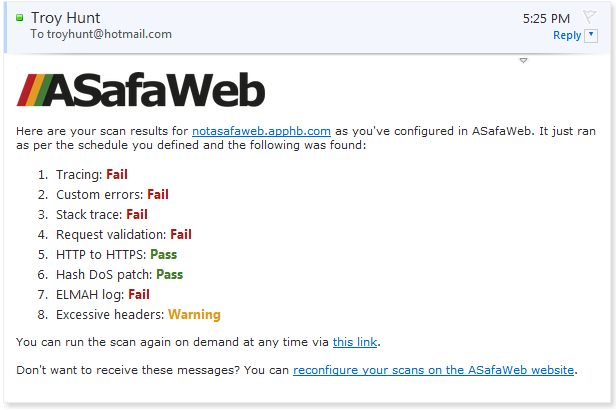

You can always hit the “scan now” button, which runs the classic ASafaWeb on demand scan and also updates the scheduled record. Alternatively, just wait until the scheduled time comes around and the scan runs, in which case you’ll get a nice email with all the details:

Once the scan runs, the status on the schedule page will be updated and in this case, the scan is failing:

You can also pause the scan and reactivate it at a later date (e.g. when you know it has issues you’re resolving) or delete it permanently. And of course you can always jump back in and edit it.

Tracking and privacy

To date, one thing I’ve always been adamant about with ASafaWeb is not holding any data I absolutely, positively didn’t need to hold. There’s no surer way of not having it disclosed than to not have it in the first place!

The scheduler changes that, but only by a bit. Clearly ASafaWeb now needs to track the URLs to be scanned and, to show the statuses in the screen grabs above, it needs to store them against those URLs. To mitigate this, no historical data is stored (only the status of the last scan), and no detail is stored about why a scan failed (such as a stack trace).

Over time, I’ll keep an eye on the feedback as to the legitimacy of this approach versus the value of holding more data. Already I’ve had feedback from the private beta that people would like to be able to view historical results (i.e. how a site’s security profile has changed over time) and that’s a possibility for the future (among many other possibilities).
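The “latest status only” design described above can be expressed as a record that is overwritten on every run. A hypothetical Python sketch of that shape, not ASafaWeb’s schema:

```python
from dataclasses import dataclass
from datetime import datetime
from enum import Enum
from typing import Optional


class Status(Enum):
    NOT_RUN = "not run"
    PASSING = "passing"
    FAILING = "failing"


@dataclass
class ScheduledScan:
    """Hypothetical record: only the latest outcome is kept, with no history or failure detail."""

    url: str
    status: Status = Status.NOT_RUN
    last_run: Optional[datetime] = None

    def record_result(self, passed: bool, when: datetime) -> None:
        # Overwrite rather than append: earlier results are deliberately discarded.
        self.status = Status.PASSING if passed else Status.FAILING
        self.last_run = when
```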

Caveats

I’ve thus far been successful in avoiding Ts & Cs and inane agreements that satisfy nobody outside the legal profession and I’ll be keeping it that way for as long as possible. But there are some caveats to be aware of and they’re mostly common sense things:

- Scheduled scans are a “Best Effort” endeavour. In other words, ASafaWeb will do everything it can to run the scan at the scheduled time, but there are no guarantees. There could be a massive queue of scans at the same time, there could be network outages, there could be platform outages. Having said that, it’s proved highly reliable over months of testing so I’m cautiously optimistic.

- There is still no proof of ownership required to run a scan on a website; you can literally run it against anything. However, there is now a “blacklist” feature, an unfortunate by-product of previous incidents (yes, there has been more than one) where a site owner has requested ASafaWeb not scan their site. Is this an over-reaction? Possibly; they’re innocuous HTTP requests in a sea of malicious ones (judging by some of the requests I see), but it’s better to play this one safe. In short, please schedule your scans against your own sites!

- I’m keeping the “beta” tag. Rightly or wrongly, this signals that I don’t yet consider ASafaWeb to be “complete”. Of course, that implies there is an absolute state where no further development happens, which we all know is never the case (nor should it be), but there are a few things in the pipeline which I think need to be completed before I consider this a holistic offering. Which brings me to what’s coming next…
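The blacklist mentioned in the caveats above needs to match subdomains as well as the exact host. A minimal sketch, assuming the blacklist is a set of lower-case domain names (the matching rule is an assumption, not ASafaWeb’s documented behaviour):

```python
from urllib.parse import urlsplit


def is_blacklisted(url: str, blacklist: set[str]) -> bool:
    """True if the URL's host, or any parent domain of it, is on the blacklist."""
    host = (urlsplit(url).hostname or "").lower()
    labels = host.split(".")
    # For www.example.com, check "www.example.com", then "example.com", then "com".
    return any(".".join(labels[i:]) in blacklist for i in range(len(labels)))
```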

What’s coming next?

ASafaWeb has a roadmap: scheduling is one of the stops along the way, but there are many, many more to come. The roadmap actually breaks down into a couple of facets, which you can think of as features on one axis (of which scheduling is one) and scans on the other axis.

The features have a roadmap which includes finding better ways to tie scans into key points in the release cycle of an application as well as making it easier to scan more of your sites. I’ll keep the details a little quiet for now, but this is the general gist.

There are plenty of options left for scans too, in fact there are a number of additional security configuration attributes that can be detected from the existing request patterns let alone those that can be pursued by making more requests. The trick with scans is that making HTTP requests is expensive. Not in terms of dollars (at least not directly) but in terms of tying up system resources with long running processes.

The roadmap for both features and scans will roll on with continual contributions to each. In fact speaking of continual, the concept of continuous delivery is very dear to my heart and some of these new features will tie into that very nicely. Stay tuned!

Go for it!

So that’s it for now: schedules are finally live! I’d love your feedback on this feature, and indeed that’s the best way to ensure it’s relevant and useful to everyone. As before, even outside of new features ASafaWeb will continue to evolve; not a week has gone by since launch that I haven’t written at least a handful of enhancements, ranging from simple CSS tweaks to mobile compatibility to functional changes to scan behaviour. So keep the feedback coming and help me to make ASafaWeb a genuinely valuable resource for you.