I’m sorry to be the one to break this to you, but, well, your company network is compromised. I know, I know, you thought you had firewalls and antivirus and Dropbox is blocked but somehow the nasties got in. Unfortunately that also means that all the web apps you have behind your corporate firewall are, for all intents and purposes, now public.

Now you may not even be aware of the hacked state of the network you spend your nine to five hours in; many of these intrusions go entirely undetected. Even when they are detected, it’s the sort of thing that organisations like to keep pretty quiet, so unless you have an integral role in organisational security, the chances are you’re never going to hear about 99% of these incidents. Rightly or wrongly, this gives people the warm and fuzzies: they feel safe and sound ensconced within the confines of their company network, believing that everything on the inside is super secure.

It’s a serious conundrum, the whole idea that just because something sits inside the corporate firewall it has achieved some sort of position of security greatness that allows people to take shortcuts on application security. The “private” network has become the security blanket of the web app world; yes, it will give you a warm glow having it present, but will it really keep the bad guys away? And for that matter, does the perception of security provided at the network perimeter lead developers to take shortcuts in the design of “internal” applications? I say it does – but it shouldn’t. Let me explain why.

The first step is admitting you have a problem

Let’s kick off with a few minutes from Reuters. This really sets the scene so it’s worth watching to put things in perspective:

This comment by Dmitri Alperovitch was particularly relevant to the point I’m making:

As a corporation, you should first and foremost assume that you’re compromised. You need to stop thinking about ways to prevent this activity because you won’t. A determined adversary will always get in.

Now I don’t think Dmitri quite meant to say you should turn off your firewalls and make things a free for all, but what he does clearly say, and what’s echoed by security pundits the world over, is that you must always make the assumption that your network is already compromised – because it almost certainly is.

Of course all this raises the question – how was it compromised? And for that matter, why was it compromised?

For sale: Hacked companies

You can buy pretty much anything online these days. Obviously there are books and music and all the usual paraphernalia you’re probably already familiar with, but there’s also the shadier side of the web. You might have heard of Silk Road, the online market place for illegal drugs secreted within the bowels of the Tor network. There are other equivalents for all sorts of other levels of nastiness, the point being that every conceivable commodity now has an online marketplace.

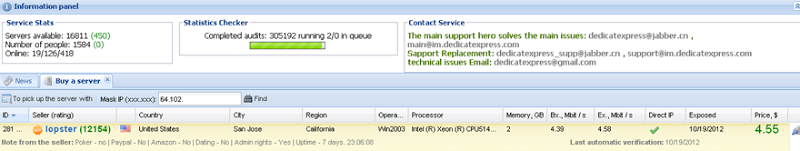

One of those commodities is access to corporate networks. Last year Brian Krebs wrote about a service selling access to Fortune 500 firms. For as little as a few dollars you can buy remote desktop access into these firms by renting out one of nearly 17,000 compromised computers across the globe. In fact the whole thing is so well organised that they even have a very well-crafted pricing strategy:

The price of any hacked server is calculated based on several qualities, including the speed of its processor and the number of processor cores, the machine’s download and upload speeds, and the length of time that the hacked RDP server has been continuously available online (its “uptime”).

Here’s an example of a hacked server for rent inside Cisco (remember, these are the guys who make firewalls):

It’s all a very well thought out, dare I say “professional” operation and a service like this literally puts an attacker from anywhere smack bang inside a company’s firewall, you know, that thing behind which everything is “secure”, right?

Of course selling access to infected PCs is a significantly broader market than that serviced by just the one provider. Only last week Dancho Danchev wrote an interesting insight into the market where he talked about the cost of buying access to machines:

The service is currently offering access to malware-infected hosts based in Russia ($200 for 1,000 hosts), United Kingdom ($240 for 1,000 hosts), United States ($180 for 1,000 hosts), France ($200 for 1,000 hosts), Canada ($270 for 1,000 hosts) and an International mix ($35 for 1,000 hosts), with a daily supply limit of 20,000 hosts, indicating an ongoing legitimate/hijacked-traffic-to-malware-infected hosts conversion.

Now of course these machines may not all be within corporate environments; a significant portion of them are no doubt personal machines which, of course, means no impact on the office, right? Yeah, about that – let’s talk BYOD.

BYOD, “The Cloud” and the death of the network perimeter

Bring Your Own Device – what better way to save a company zillions than by allowing people to bring their own gear into the office and just plug it in?! Of course we have the likes of Apple and Samsung to blame for this, the buggers keep making devices that have an uncanny habit of being just as good at doing corporate email as they are at playing Angry Birds. Naturally the question gets asked: if our employees already have these devices, how about we save some coin and get them to use those rather than buying them new tablets / phones / PCs every few years? Employees are generally happy with this arrangement too as their shiny new device is now an easy tax write-off. Besides, what’s more convenient than putting in a hard day at the office crunching sales figures and writing up the company’s product development pipeline then handing over the iPad to the 4 year old so they can smash some bad piggies?

I’m by no means against BYOD, in fact I think that when done well there’s a win-win in there for everyone. The point I’m making though is that the concept of a company’s internal network being some virginal, unadulterated landscape totally goes out the window. Sure, there are all sorts of controls and policies (both hard and soft) that go into place with a BYOD program, the point is though that you now have a lot of new players within your network.

But of course this isn’t an entirely new concept either, who’s guilty of ever plugging one of these into the corporate network?

Exactly, and nasties get in on these via host machines that are already “trusted” on the network. But where on earth do you get infected thumb drives from to begin with? This is a bit theoretical, right? Yeah, not so much: there have been many successful attacks mounted simply by scattering USB sticks around corporate car parks. There are enough people curious enough and unaware enough of the consequences to take a peek at what’s on there when they land in the office, then WAMMO! Autorun malware time.

Ok, so you’re not stupid enough to plug in a random USB stick you picked up in the car park, good for you! But imagine this: your company sends you off to a government sponsored security conference where official IBM representatives are handing out software on thumb drives. Do you trust it and plug it in when you get back to the office? If you did this after visiting AusCERT a few years ago you’d pick yourself up a nice little bit of malware. As that article goes on to explain, this wasn’t the first incident courtesy of IBM nor was it the first related to AusCERT.

How about good old Stuxnet as another example:

Stuxnet attacked Windows systems using an unprecedented four zero-day attacks (plus the CPLINK vulnerability and a vulnerability used by the Conficker worm). It is initially spread using infected removable drives such as USB flash drives, and then uses other exploits and techniques such as peer-to-peer RPC to infect and update other computers inside private networks that are not directly connected to the Internet.

Now we’re talking about an air-gapped nuclear facility that’s running malware. Granted, this was a government-sponsored attack and those guys tend to have a lot of resources at their disposal but then again, there’s an ROI to be made on getting nasties inside Fortune 500 companies as well. What value would it represent to an attacker to have internal access to any unprotected or at-risk data on a corporate network? Significant, and that’s why it happens so frequently. Fortunately though, there’s an easy fix:

Don’t laugh, IT departments have been known to glue up USB ports. So job done, right? Well that was kind of popular for a while then something came along that trumped it:

Yes, “The Cloud”, that mythical all-encompassing beast that spans everything from email to file sharing to photo syncing. Let’s face it – people aren’t quite sure what it is but they know they like it and the reason they like it so much is that it makes things easy. Things like sharing files – that’s pretty damn easy now.

The problem, of course, is that you can’t superglue up the cloud. Oh you can block ports and blacklist domains but the reality remains that for every defensive measure there are a dozen other “cloud” solutions out there making it easy for people to shuffle files around in and out of corporate networks. Got port 80 open? Yes? Of course you do, so now you have a file sharing and malware distribution channel.

The point of all this goes back to Dmitri’s statement in the video earlier on: you must assume that you are already compromised because it’s just so damn easy. What this means is looking beyond the veneer of the network perimeter and starting to work on the assumption that the outside is coming in. Most importantly though, it means dropping the line of “Oh it’s internal therefore it’s safe”. That’s not even close to the truth.

Exploiting the soft targets within

Security people often lament that the biggest risk we face in this industry is not the weaknesses in computer systems, rather it’s dealing with the soft organic matter using them. That would be us – those trusting, naive individuals that are so valuable to attackers.

Earlier this year the FBI reported that 91% of targeted attacks involved “spear phishing” or in other words, carefully crafted attacks intended to exploit very specific individuals or companies. Usually these attacks are constructed with a very clever veneer of legitimacy by including information that’s tailored to the environment they’re targeting. For example, a victim might receive an email purporting to be from the CEO with references to organisational constructs related to the target company (departments, products, people names, etc.) but then links out to compromised services designed to infect the victim’s PC.

Attacks of this type are enormously effective. From the article above:

57% of one organisation’s users were susceptible to phishing emails, despite a long-standing security awareness training that included newsletters, security awareness events and a poster campaign

This is a very typical figure and indeed there are many comparable studies with similar results. If an attacker wants to introduce malicious software into an organisation, all they need to do is ask!

“But”, you triumphantly announce, “we have antivirus!” Yeah, about that…

The ineffectiveness of antivirus

Antivirus, at its best, is nothing more than an arms race. Bad guys find risks and create exploits, good guys learn of exploits and create updates to patch risks or defend against them with AV, and customers (eventually) take the updates and are now protected. Of course there are numerous problems with this.

The first is the lead time between the exploit and the patch. We’ve seen many examples in the past where viruses have run rampant in corporate networks because they’ve gained traction before updated virus definitions have been obtained or vulnerabilities in the underlying software patched. SQL Slammer is a perfect example: it’s a decade ago now but that was 75,000 infections in ten minutes. Or Conficker: much more recent and anywhere up to 15 million infected machines. How about Melissa: I vividly recall the chaos this caused my employer at the time back in ’99.

So some viruses are very successful, that’s probably not news to anyone. What might be news is the sheer volume of evil software doing the rounds – it’s reportedly in the order of 200,000 – 300,000 new viruses every day. That article from last year then goes on to explain how numerous AV products were assessed against various selections of malware and concludes with a rather sobering result:

The average detection rate for these samples was 24.47 percent, while the median detection rate was just 19 percent. This means that if you click a malicious link or open an attachment in one of these emails, there is less than a one-in-five chance your antivirus software will detect it as bad.

In other words, you’re lucky if your AV picks up incoming nasties. Of course that by no means implies you should turn off your McAfees or your Kasperskys, but what it does mean is that if you think that up to date antivirus is sufficient to stop the nasties coming in, you’re almost always going to be wrong.

Outdated browsers and operating systems in corporate environments = increased risk

Here’s another problem with corporations protecting against digitally borne risks: they’ve usually slipped quite far behind the curve when it comes to modern browsers and operating systems. The latter is a huge risk, particularly at the time of writing when Windows XP remains extremely common. Upgrading operating systems and browsers in the Windows world that corporations almost ubiquitously live in is a high-friction process. Forget about the license cost, the killer is the packaging, testing, distribution and training involved in rolling out such a fundamental component of computing systems to thousands, tens of thousands or even hundreds of thousands of users; it’s damn hard, it doesn’t happen often and it’s usually well after the departing versions have been comprehensively succeeded by far more secure versions.

A case in point: a few months ago, and only twelve months before Microsoft will drop support for it, AppSense was reporting that 45% of organisations were still running on Windows XP. That’s a 12 year old operating system that was superseded nearly seven years ago. Ok, so Vista wasn’t exactly a hit but even Windows 7 is now four years old and it brought with it many fundamental security improvements. UAC, for example, is arguably one of the single greatest security contributions made to the Windows ecosystem since, well, I can’t really think of a comparison. It’s significant.

But it’s more than just Windows and as I’ve written before, we also face an impending crisis due to the way Internet Explorer is tied to the operating system: organisations stuck on XP are consequently also stuck on IE8. With IE11 just about to hit, that’s now three generations of security improvements including things like Application Reputation, Phishing Filter and the HTML 5 sandbox that many corporates are missing out on. Naturally, as browsers evolve things get better and a big chunk of that is security. Oh, and the “Yeah but you can always just install Chrome / Firefox on XP” argument – read the aforementioned post of mine – it’s not a “standard” browser!

The point of all this is to demonstrate that in many ways corporations are at more risk than consumers because of the circumstances that cause them to be late adopters of more modern, more secure computing environments. In an era when the population of Windows XP in the US is getting down towards 10% (I’m using the US so as not to include China, because, well, this), the corporate grasp on XP is significant and must have an adverse impact on the overall risk position behind the company firewall.

The rampant internal threat

Let’s say that your company is the leprechaun of compromised organisations and, well, isn’t. I mean imagine that there are no evildoers from the outside world inside the network seeking out your corporate data, it’s all good then, right? Unfortunately no, not by a long shot.

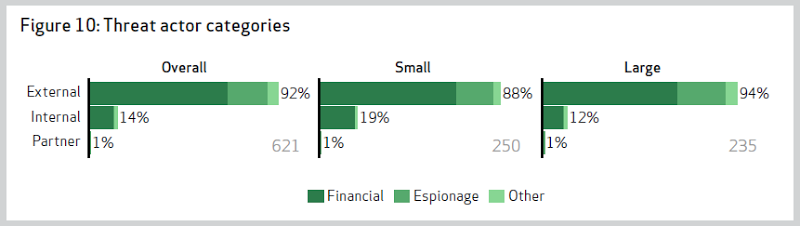

Every year Verizon produces a Data Breach Investigations Report which aggregates and reports across a huge range of security incidents in corporate environments. This year they covered more than 47,000 incidents and categorised the threat actors of each, or in other words “Entities that cause or contribute to an incident”:

- External: External actors originate outside the victim organization and its network of partners. Typically, no trust or privilege is implied for external entities.

- Internal: Internal actors come from within the victim organization. Insiders are trusted and privileged (some more than others).

- Partners: Partners include any third party sharing a business relationship with the victim organization. Some level of trust and privilege is usually implied between business partners.

External is where we usually view threats as coming from and that’s why we have corporate firewalls; keep the bad guys out and the good guys in. But what happens when the good guys are actually the bad guys? I mean do the internal folks contribute to attacks? Apparently, yes:

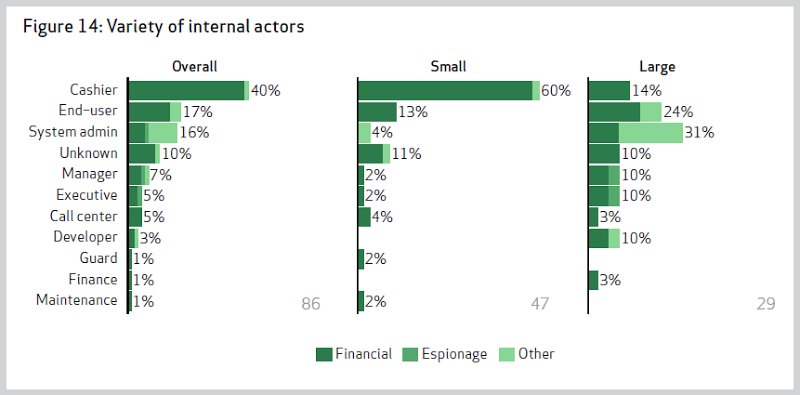

Of course it’s long been known that many security breaches originate from within but it’s always interesting to see the raw numbers. If you’re in a small organisation (that’s fewer than 1,000 employees), one in five breaches is going to come from the person sitting next to you or the guy you have coffee with or the lady running the accounts. Either that or one of these people:

Yep, even developers! In fact developers are particularly bad actors once you get into large organisations because after all, these are the guys who should have half a clue about how to siphon off valuable data.

Clearly the lesson is this: treat your colleagues as though they’re potential attackers. I’m not trying to be flippant about it, but it’s the same mitigations as you’d apply to protect data from external threats. Concepts such as the principle of least privilege are vitally important in order to protect data from your own peers so remember that the next time you’re told that open access to data is just fine because only staff can access it, you’d better take a good hard look at what that might actually mean.
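To make the least privilege idea a little more concrete, here’s a minimal sketch in Python of a deny-by-default permission check. The role names, permission strings and the `is_allowed` function are all hypothetical, made up purely for illustration; a real internal app would lean on whatever authorisation mechanism its framework or directory service provides.

```python
# Hypothetical role-to-permission map (illustrative only).
# Each role gets just the permissions it needs and nothing more.
PERMISSIONS = {
    "payroll_admin": {"payroll:read", "payroll:write"},
    "sales": {"sales_dashboard:read"},
    "developer": {"app_logs:read"},
}


def is_allowed(role, permission):
    """Deny by default: unknown roles and unlisted permissions are refused."""
    return permission in PERMISSIONS.get(role, set())


if __name__ == "__main__":
    for role in ("payroll_admin", "developer", "contractor"):
        print(role, "can read payroll:", is_allowed(role, "payroll:read"))
```

The point of the sketch is the default: being “staff” and on the network grants nothing by itself, so the developer and the unknown contractor role are both refused payroll data.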

The risk might be lower, but what about the impact?

Despite all the dire warnings above, there’s no arguing that the risk surface of apps behind the corporate firewall is smaller than that of those on the outside. Of course how much that risk is reduced and how much of it still remains is subject to all sorts of factors but arguably private network segments provide some advantages. But the risk of an attack is only one part of the equation…

Let’s say you have a “brochureware” site, you know, the ones that are effectively nothing more than a digitised version of what we typically would have handed out in brochures in years gone by. Naturally this would be a public, consumer-facing site and for the sake of argument, let’s say it does nothing more than present information about the company and their products or services which, of course, is very often the nature of public websites. Right – so what’s the impact of an attack? Defacement? Some public shaming? At worst perhaps some malware slipped into the site and served up to visitors? None of that is good and certainly it could lead to some reputation damage, but it’s a whole different world compared to the impact of web apps within the corporate network.

Think about the sort of web-based services you use within an organisation: payroll, customer databases, product pipeline, maybe email, sales figures (everyone loves dashboards, right?) and so on and so forth. Now what’s the impact of an attack on those classes of data? What would it mean for an organisation if their sales forecasts for the coming quarter were disclosed publicly when they were intended to be private? What would it mean to a publicly listed company? Or what would be the impact of employee payroll data being leaked? Clearly this is a whole new level of pain compared to a brochureware defacement.

The point is that whilst access to apps behind the great corporate firewall may be more difficult to obtain, the impact is very frequently much higher than if the same organisation’s publicly facing assets were breached. We’re probably all familiar with the debates that rage regarding how trustworthy “the cloud” is in terms of placing corporate data in an environment perceived as higher risk (although in many cases that’s a very weak argument). Naturally it follows that the sensitivity of that data – the very data kept internal for security reasons – is going to lead to a significantly higher impact should it be breached. In many ways this should heighten the security awareness of these apps, not decrease it simply because they’re perceived to be in a lower risk environment. The environment may well be lower risk but crikey, get that data breached and it’s going to be a whole new level of pain.

Pragmatic enterprise web app security

This is not intended to be a case about applying exactly the same levels of security due diligence to internal and external web applications because ultimately they’re different beasts. For example, in an internal environment you may not place database and web servers in isolated network segments with the former blocking public access. Likewise, you might not demand two factor authentication for internal users already on the company’s WAN. These can be burdensome processes and arguably don’t offer much value for apps within the corporate network.

However, think about all the usual web app security risks that I and others often talk about: SQL injection, cross site scripting, insufficient transport layer security and especially lack of access controls – all of these are security 101 type risks and the mitigations are no-brainers, so why on earth wouldn’t you add protection against these attacks? The mitigations are frequently ingrained into the practices of competent developers anyway; why would someone building that internal app compromise on a no-cost security feature such as parameterising their SQL statements or correctly encoding their output? They’re easy wins.
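Just to show how little effort those two mitigations take, here’s a rough sketch using nothing but the Python standard library (`sqlite3` and `html`). The `employees` table, the `find_employee` and `render_greeting` functions and the sample data are all invented for illustration; your stack will differ, but the shape of the fix is the same.

```python
import html
import sqlite3


def find_employee(conn, name):
    # The ? placeholder sends `name` to the database as data,
    # so it is never parsed as part of the SQL statement.
    cur = conn.execute(
        "SELECT id, name FROM employees WHERE name = ?", (name,)
    )
    return cur.fetchall()


def render_greeting(name):
    # html.escape encodes <, >, & and quotes so that untrusted
    # input is displayed as text rather than interpreted as markup.
    return "<p>Hello, " + html.escape(name) + "</p>"


# Hypothetical in-memory database, purely for demonstration.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE employees (id INTEGER PRIMARY KEY, name TEXT)")
conn.executemany(
    "INSERT INTO employees (name) VALUES (?)", [("alice",), ("bob",)]
)

if __name__ == "__main__":
    print(find_employee(conn, "alice"))
    print(find_employee(conn, "x' OR '1'='1"))  # classic injection attempt
    print(render_greeting("<script>alert(1)</script>"))
```

The injection attempt simply matches no rows because the whole string is treated as a name, and the script tag comes back as harmless encoded text. Neither fix cares whether the app lives inside or outside the firewall.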

Above all though, the case I’m making is about complacency. Never, ever rest on your laurels and define the security position for an internal web app around the assumption that it is not accessible by attackers – because it almost certainly is.