I wrote recently about how Have I been pwned (HIBP) had an API rate limit introduced and then brought forward, in part as a response to large volumes of requests against the API. Those requests were causing sudden ramp-ups of traffic that Azure couldn't scale fast enough to meet, and they were also hitting my hip pocket as I paid for the underlying infrastructure to scale out in response. By limiting requests to one every 1.5 seconds and returning HTTP 429 for anything in excess of that, the rate limit meant there was no longer any point in hammering away at the service.
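To make the rule concrete, here's a minimal sketch of that kind of limit in Python. It is not the HIBP implementation (which isn't shown in this post); the `RateLimiter` class, its per-client dictionary and the injectable clock are all assumptions made purely for illustration.

```python
import time

MIN_INTERVAL_SECONDS = 1.5  # one request every 1.5 seconds, as described above


class RateLimiter:
    """Hypothetical limiter: remembers the last accepted request per client."""

    def __init__(self, min_interval=MIN_INTERVAL_SECONDS, clock=time.monotonic):
        self.min_interval = min_interval
        self.clock = clock  # injectable so the behaviour can be tested without sleeping
        self.last_accepted = {}  # client key (e.g. IP address) -> time of last accepted request

    def status_for(self, client):
        """Return the HTTP status to send: 200 if allowed, 429 if the client is too early."""
        now = self.clock()
        previous = self.last_accepted.get(client)
        if previous is not None and now - previous < self.min_interval:
            return 429  # Too Many Requests
        self.last_accepted[client] = now
        return 200
```

A rejected request doesn't reset the timer in this sketch, so a client that backs off for 1.5 seconds after its last accepted request gets served again.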

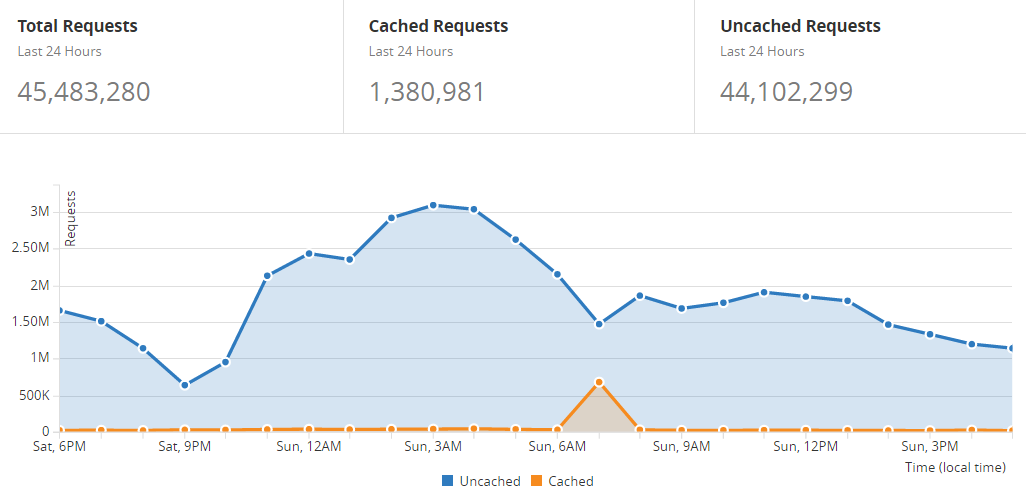

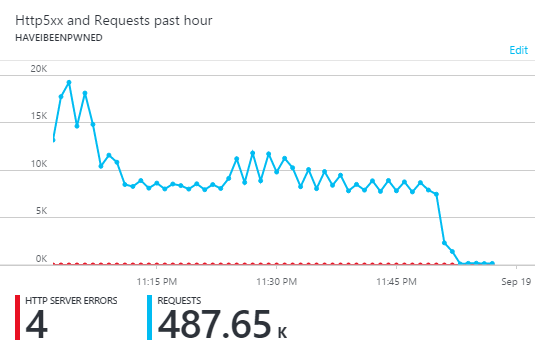

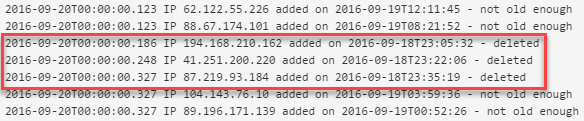

However, just because there's no point in it doesn't mean that people aren't going to do it anyway as my traffic stats last weekend would attest to:

That's as measured by CloudFlare and you can see that they passed 97% of the requests on to my site. However, in that 24-hour period where 45 million requests were served, the error rate according to New Relic was 0.0011%. It was only that high because someone also came along and decided to throw an automated scanning tool at it so as far as I'm concerned, downtime was effectively zero. In fact, it is zero as my weekly New Relic report from Monday shows (note that "views" doesn't include API hits which is where all the traffic was being directed to):

That was unchanged from the week before which also had zero downtime and I achieved that because I just spent money on scale to keep it fast for everyone. But that's not really fair now, is it? I mean that I should be paying out of my own pocket just to serve 45-something-million HTTP "Too Many Requests" responses to someone who's getting absolutely zero value out of them anyway. Let's fix that!

I started routing traffic through CloudFlare at the time of the blog post I mentioned in the opening paragraph. This was particularly useful when the traffic went from API abuse to all out attempted DDoS and I'll write more about how I handled that in the future (I'd like to wait until things settle down first). One of the great things about having CloudFlare in front of the site is that it opens up options of how to handle traffic upstream of your server, or origin as it's often referred to. For example, you can block an IP address outright. And they have an API to do it. And whilst there's a heap of IP addresses being abusive (refer back to that post), I can programmatically identify them.

Normally I'd stand up a WebJob to do this and I've written at length about my love of these. I love that they run in your existing website and therefore don't cost any more, I love that they deploy along with the site and I love their resiliency. But they do draw resources from the infrastructure they run on and the hot thing these days is "serverless" which is like, on servers, but you kinda don't know it. One of the key tenets of a serverless architecture is that when it's provisioned in the way Microsoft has done it here, you just never even think about scaling underlying resources or what sort of load you're generating on the environment, it's just an endless stream of constant service that you consume at will. Of course you pay for that too in a pay-per-execution billing model (more on that later), but now it's just a money discussion and not a scaling one.

Azure's interpretation of serverless code is their Functions feature which is still in preview at the time of writing, but this is a perfect use case as it's something non-critical to the actual function of the site so a good place to dip a toe in the water. The value proposition of Azure Functions is that they're very small units of code that can be quickly written and deployed then triggered by events. So let's do this: let's use an Azure Function to take abusive IP addresses and submit them to CloudFlare to be blocked. That oughta do it!

My web app is already deciding when an IP is being abusive (and there are parameters around that I won't go into here) and then dropping it into an Azure storage queue. If that's an unfamiliar paradigm to you then check out Get started with Azure Queue storage using .NET first because I just want to focus on functions here. So that's the prerequisite: messages in a queue with each one containing a single IP address.

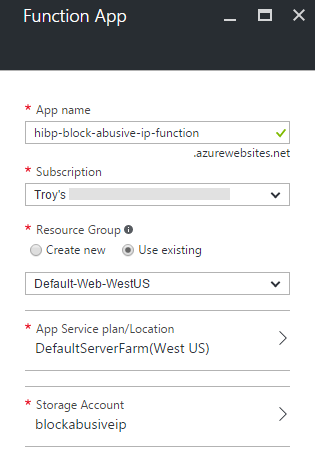

The Azure Function kicks off by creating a new one in the portal with some pretty basic details:

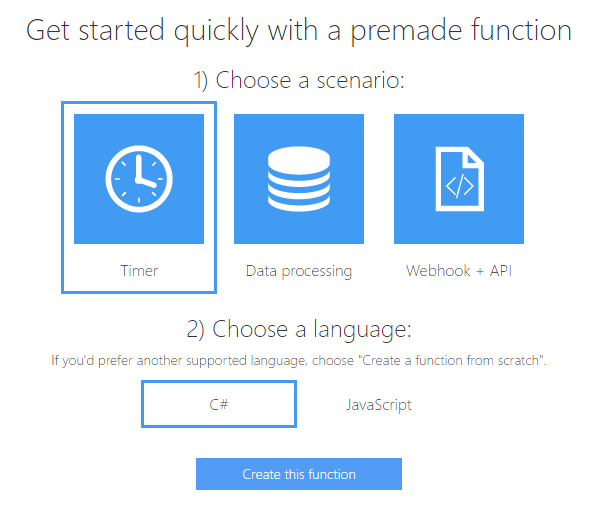

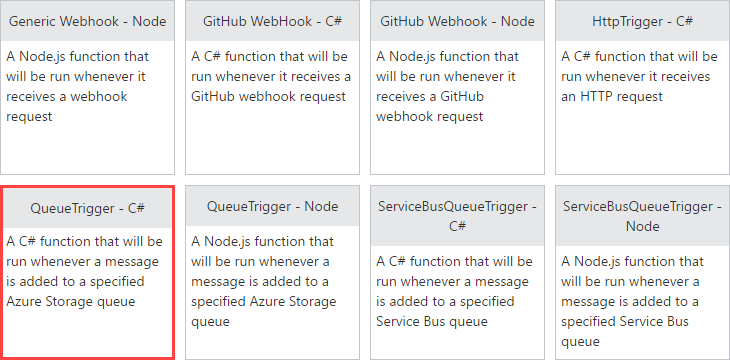

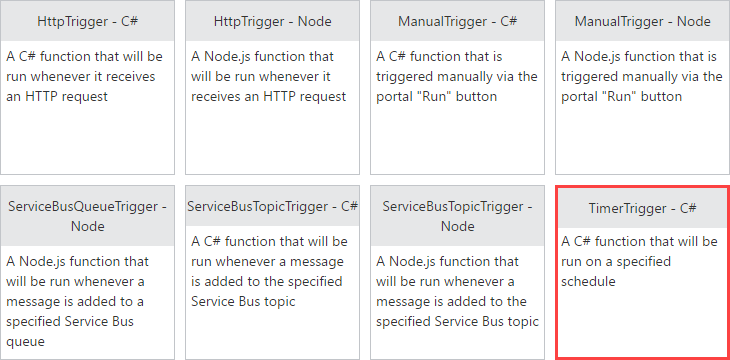

We're given some quick start options:

But let's instead just select "New Function" and start there:

It's going to be a queue trigger that I write in C# so we'll grab that option:

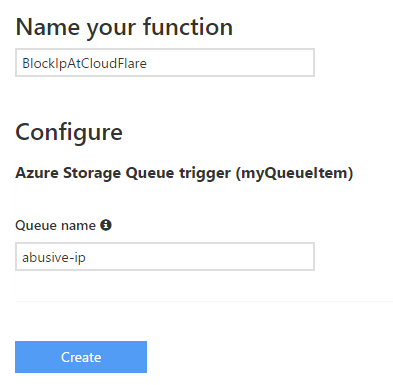

Now we'll name the function and specify the queue name it's going to watch:

This is the queue name my web application is already dropping abusive IP addresses into that resides in an existing storage account. This screen also allows you to choose the storage account (not in the screen cap), but only if it's not a "classic" storage account. However, there's an easy workaround for that if you're not already using the newer storage incarnation.

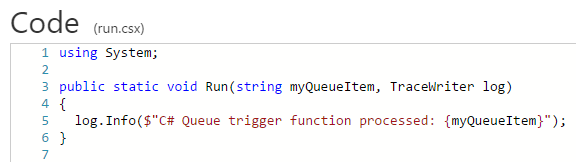

With the function now created, we have ourselves an empty stub:

And that's it! Well, it's something, it's code that's now running and will be invoked when an item appears in the queue. That item is then available via the "myQueueItem" parameter. In my case, it's just an IP address but it could easily be a JSON serialised object containing a lot more data. The point is that I've now got something I can code against so let's look at what's involved in blocking that IP at CloudFlare.
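As a rough illustration of handling either message shape, here's a small Python sketch (the actual function is written in C# and isn't reproduced here). The `extract_ip` helper and the `"ip"` field name for the JSON variant are hypothetical; my queue messages are just the bare address.

```python
import ipaddress
import json


def extract_ip(queue_item):
    """Return the IP address carried by a queue message, or None if it isn't a valid one."""
    text = queue_item.strip()
    if text.startswith("{"):
        # Assumed JSON shape: {"ip": "...", ...}; the field name is illustrative only
        try:
            text = str(json.loads(text).get("ip", ""))
        except json.JSONDecodeError:
            return None
    try:
        # ipaddress validates both IPv4 and IPv6 and normalises the text form
        return str(ipaddress.ip_address(text))
    except ValueError:
        return None
```

Validating before calling out to a third party API means a malformed message is dropped early rather than turning into a failed HTTP request.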

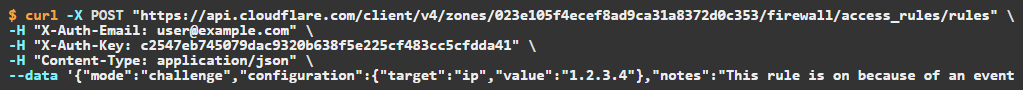

CloudFlare has got a great API that lets you do pretty much everything you can via the web interface in the browser. The API I'm particularly interested in though is the create access rule one on the firewall which looks like this (screen cap of their docs, not my API key!):

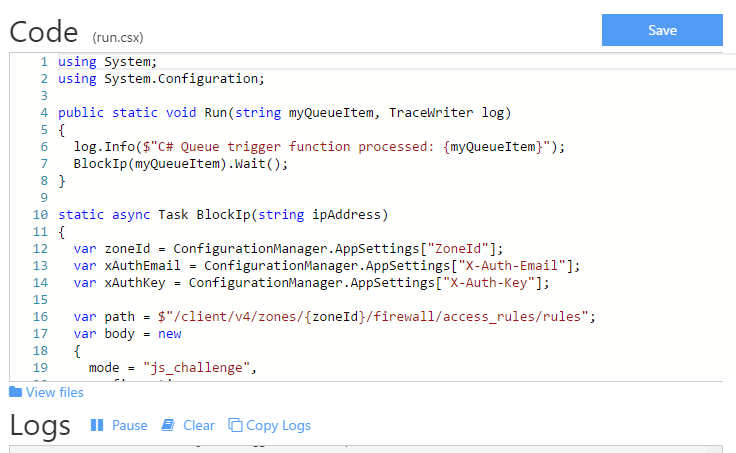

All I need to do now is wrangle up an HttpClient and send off the request. Here's the entire code:

The app settings are configurable via the portal just as you would with app settings in a website:

The "ZoneId" here is a unique ID for the CloudFlare asset I'm controlling (there's a zone subscription API you can retrieve that from) and the X-Auth-Key is on your CloudFlare account page. I also elected to use the "js_challenge" approach rather than actually block the traffic outright so that if a genuine user comes by and inadvertently sets off the trigger (or is on a shared IP that someone else is abusing), they'll just get an interstitial page rather than be completely blocked from the service.

You might also notice the rule notes uses a prefixed convention of "rate-limit-abuse-" followed by the present time in sortable format. I wanted to be able to look at CloudFlare and know when the rule was created, not just so that I can eyeball them in the portal, but because I'm going to use that information to manage them a bit later on.
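For a sense of what that request looks like, here's a hedged sketch in Python rather than the C# and HttpClient the function actually uses. It builds the create access rule call against CloudFlare's v4 API without sending it. The `build_challenge_request` name and its parameters are mine, and the timestamp format is an assumption about what "sortable" means here.

```python
import json
import urllib.request
from datetime import datetime, timezone

API_BASE = "https://api.cloudflare.com/client/v4"
NOTE_PREFIX = "rate-limit-abuse-"


def build_challenge_request(ip, zone_id, auth_email, auth_key, now=None):
    """Build (but don't send) the POST that creates a js_challenge access rule for one IP."""
    now = now or datetime.now(timezone.utc)
    body = {
        "mode": "js_challenge",  # interstitial challenge rather than an outright block
        "configuration": {"target": "ip", "value": ip},
        # Assumed sortable format: yyyy-MM-ddTHH:mm:ss
        "notes": NOTE_PREFIX + now.strftime("%Y-%m-%dT%H:%M:%S"),
    }
    return urllib.request.Request(
        url=f"{API_BASE}/zones/{zone_id}/firewall/access_rules/rules",
        data=json.dumps(body).encode("utf-8"),
        method="POST",
        headers={
            "X-Auth-Email": auth_email,
            "X-Auth-Key": auth_key,
            "Content-Type": "application/json",
        },
    )
```

Passing the result to `urllib.request.urlopen` would submit it. The zone ID and credentials would come from app settings rather than being hard-coded.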

And that's it - all you do now is save the code in the browser interface:

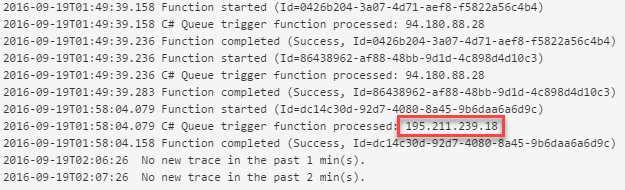

It compiles and runs immediately, returning output to the log beneath the code:

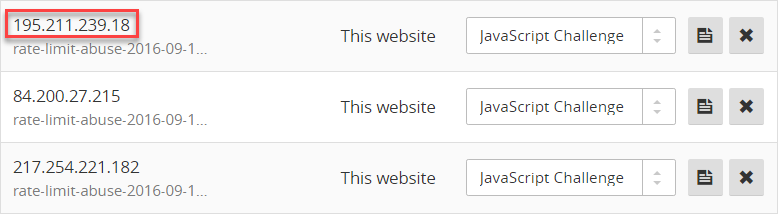

It's automatically picked up a number of items already in the queue and processed them, including IP address 195.211.239.18 (yet another Russian one). Output looks solid, there's a few successes there and they're all taking well under 100ms too. Let's now check the CloudFlare interface:

Perfect! The first IP address you see in that image is the one from the function output in the previous screen. In fact, on that screen you may notice the same IPs appearing a couple of times. This is down to the nature of the conditions in HIBP that flag the IP as being abusive and then queue it for processing, but the great thing about the CloudFlare API is that it's idempotent so it doesn't matter if you keep submitting the same thing to it over and over again.

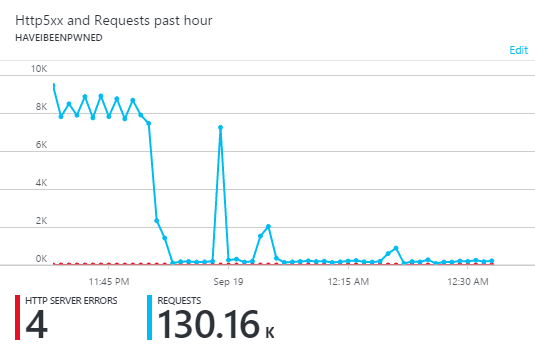

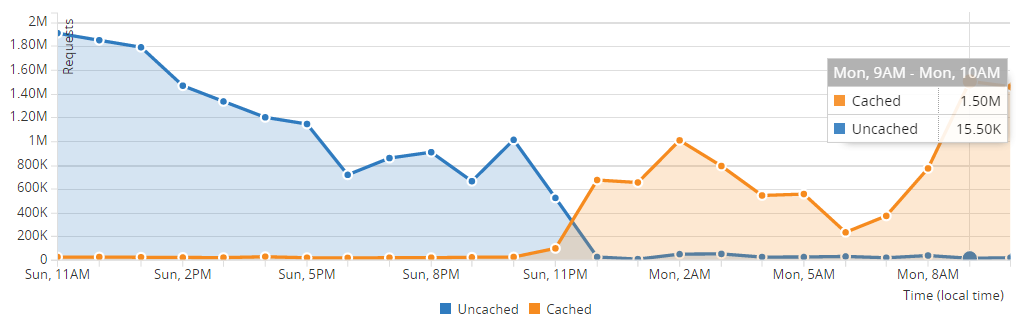

Of course the real proof in all this is what it does to my traffic:

Now this I like! That's precisely the outcome I was hoping for and it's absolutely smashed the traffic back down to purely organic users and those playing by the rules with the API. As I watched it run after initially rolling it out, I'd see occasional spikes:

These correlate with a new IP address suddenly hammering the service before being identified as abusive, getting thrown into the queue, picked up by the function and pushed over to CloudFlare to be blocked. The poor thing never stands a chance - it has a longevity measured in seconds from the time it starts abusing to being blocked outright.

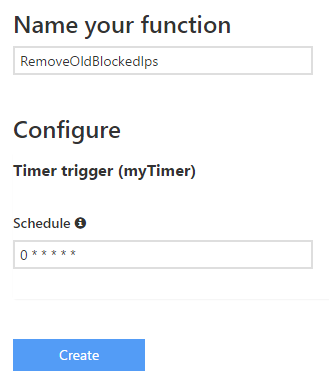

Now this is blocking which is awesome, but when it's so easy to create code to manage firewall rules this way, we can get even smarter about things. Let's go and create a second function:

This one is a timer trigger and I'm going to configure it like this:

The title should be self-explanatory - I'm going to remove "old" blocked IPs. My theory is that sooner or later, a blocked IP gets the message and moves on. Particularly when you're talking about botnet behaviour, these are quite possibly legitimate infected machines whose owners I still want to have unfettered access to the service. Alternatively, if it's just an IP that's inadvertently tripped the trigger then I don't want it being permanently blocked from making API calls which is what a JavaScript challenge effectively does. And finally, that CloudFlare firewall rule list is going to get unwieldy if I don't regularly prune it so this seems like a good move all round.

The schedule you see in the image above is a cron expression and that pattern means the function will run every minute. That's fine for testing, but for production purposes checking for old IPs that can be unblocked is fine on an hourly basis.
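For reference, Azure Functions timer triggers take a six-field cron expression with seconds in the first position. The sketch below shows what an every-minute and an hourly schedule look like in that format. The constant names and the `describe` helper are illustrative only, not part of any Azure SDK.

```python
# Field order for the six-field expressions used by timer triggers
FIELD_NAMES = ("second", "minute", "hour", "day", "month", "day_of_week")

EVERY_MINUTE = "0 * * * * *"  # at second 0 of every minute: handy while testing
EVERY_HOUR = "0 0 * * * *"    # at second 0, minute 0 of every hour: plenty for pruning


def describe(expression):
    """Split a six-field cron expression into named fields."""
    parts = expression.split()
    if len(parts) != len(FIELD_NAMES):
        raise ValueError(f"expected {len(FIELD_NAMES)} fields, got {len(parts)}")
    return dict(zip(FIELD_NAMES, parts))
```

The only difference between the two schedules is the minute field: `*` fires every minute, `0` fires once on the hour.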

Anyway, onto code:

The only difference between the structure of this code and the previous function is the TriggerInfo passed to the run function. It's very simple stuff (in fact I've over-simplified it and not made it particularly resilient), but that's kinda the point with functions too - they can be extremely lightweight and serve a very singular purpose within a self-contained construct.

When it runs, I'm seeing output like this:

When there's no IPs to remove, it's just a single GET request to CloudFlare which returns 100 rules (you can page through them if you have more) and the whole thing is executing in about 100ms. If there's a firewall rule to remove, then there's going to be a second call and a fraction more time. Frankly, that barely matters other than for the pricing, but I'll come back to that a bit later.
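The selection logic can be sketched as follows, in Python rather than the function's C#. It takes the rule list from the GET (each entry is assumed to carry the `id` and `notes` fields CloudFlare returns) and picks out the ones to delete. The 24 hour retention period is a hypothetical value and `expired_rule_ids` is an illustrative name.

```python
from datetime import datetime, timedelta

NOTE_PREFIX = "rate-limit-abuse-"
MAX_AGE = timedelta(hours=24)  # hypothetical retention period, chosen for illustration


def expired_rule_ids(rules, now, max_age=MAX_AGE):
    """Return the IDs of rules created by the blocking function that are older than max_age."""
    expired = []
    for rule in rules:
        notes = rule.get("notes") or ""
        if not notes.startswith(NOTE_PREFIX):
            continue  # not one of ours, e.g. a manually created rule: leave it alone
        try:
            created = datetime.strptime(notes[len(NOTE_PREFIX):], "%Y-%m-%dT%H:%M:%S")
        except ValueError:
            continue  # unparseable timestamp: skip rather than guess
        if now - created > max_age:
            expired.append(rule["id"])
    return expired
```

Each returned ID would then need one DELETE request against the same access rules endpoint with the rule ID appended, which is the extra call mentioned above.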

Checking in a half day after rolling this out, here's how things look from the CloudFlare side now:

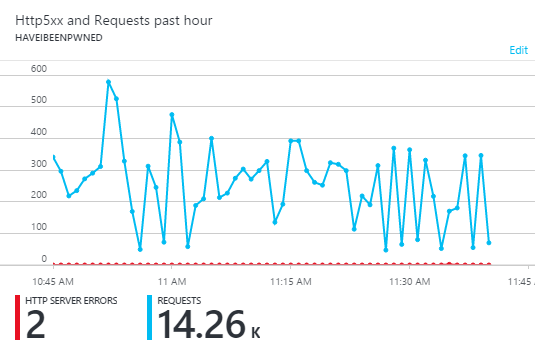

In other words, as of Monday morning, 99% of the traffic had come off my origin and you can see where the uncached requests dive dramatically as I implemented this late on Sunday night. Actually, the web server was just sitting there doing, well, basically nothing:

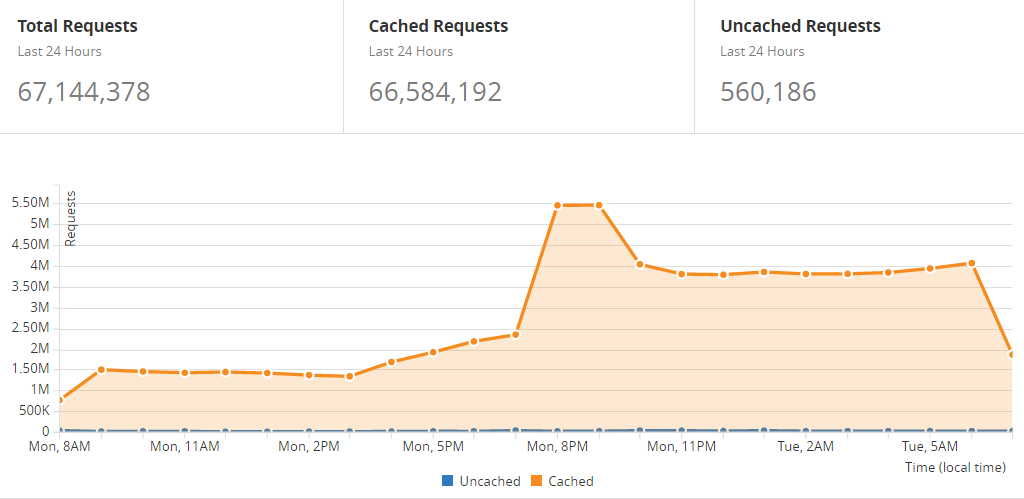

After implementing this, rather than whoever was abusing the system finally getting the message and moving on, they went at it even harder:

But it doesn't matter, not one little bit, because my system didn't have to deal with 67 million requests, rather a "mere" 560k. They can issue a billion requests in a day for all I care and so long as CloudFlare blocks it, we're all good. This all makes me very happy :)

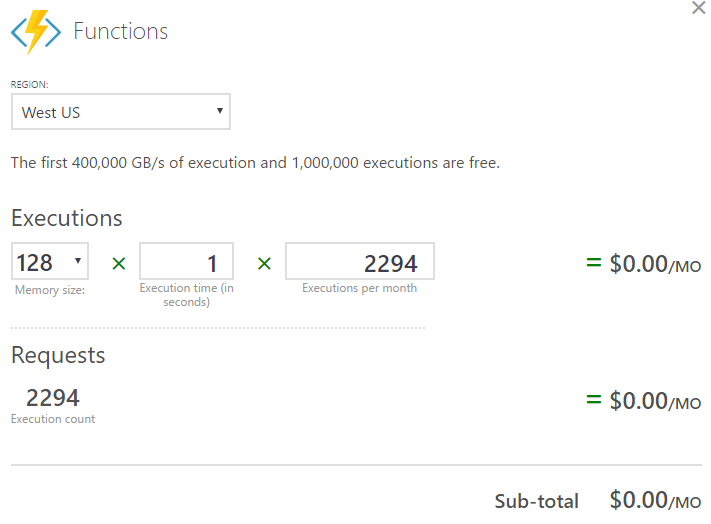

Now this is all great, but how much? You don't get stuff like this for free, right? Let's give the Azure pricing calculator a go and plug in what we know. Functions pricing works by looking at how long the code takes to execute and how many times it runs which is a very nice incarnation of commoditised cloud pricing - you're paying for what you use. Let's assume I see 50 nasty IP addresses a day so there's 50 function executions, then I check hourly for old IPs so there's another 24 which is 74 a day or 2,294 a month. They'll normally take 100ms to run, sometimes a bit longer but that doesn't matter as far as the calculator is concerned as it only works in round seconds. Anyway, it all looks like this:

Yeah, free. In fact, if it was used 1,000 times more it'd still only cost me $0.40 a month because of the free grant in the pricing structure:

Pricing includes a permanent free grant of 1 million executions and 400,000 GB-s execution time per month.

I like free, free is good. So now I've got the free Azure Functions orchestrating firewall rules on the free CloudFlare service which is taking traffic off my origin and dramatically reducing the cost of the web infrastructure I was paying for which is not free!
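The arithmetic behind that is easy to check. This sketch works through the usage figures from above and compares them with the free grant. The 128 MB memory size is my assumption (the post doesn't state one), and durations are rounded up to whole seconds to mirror how the calculator works.

```python
import math

QUEUE_RUNS_PER_DAY = 50      # nasty IP addresses per day (assumed above)
TIMER_RUNS_PER_DAY = 24      # hourly check for old IPs
DAYS_PER_MONTH = 31

FREE_EXECUTIONS = 1_000_000  # monthly free grant: executions
FREE_GB_SECONDS = 400_000    # monthly free grant: GB-s of execution time

MEMORY_GB = 0.125            # assumption: 128 MB per execution
DURATION_SECONDS = 0.1       # roughly 100ms per run

monthly_executions = (QUEUE_RUNS_PER_DAY + TIMER_RUNS_PER_DAY) * DAYS_PER_MONTH
billed_seconds = math.ceil(DURATION_SECONDS)  # calculator only works in round seconds
monthly_gb_seconds = monthly_executions * billed_seconds * MEMORY_GB

within_free_grant = (
    monthly_executions <= FREE_EXECUTIONS and monthly_gb_seconds <= FREE_GB_SECONDS
)

print(monthly_executions, monthly_gb_seconds, within_free_grant)
```

Under those assumptions the workload uses well under 1% of either allowance, which is why the calculator lands on zero.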

It's early days for this implementation as it is for my foray into Azure Functions. I love how lightweight they are and how easy it was to throw this all together - it took me longer to write this blog than it did to implement the feature! In fact, until doing this I'd never actually built anything on the Azure Functions service so to go from zero to something so useful in such a short time and for zero monthly cost makes me enormously happy. Only thing now is I'm left wondering how much other stuff I should be migrating over! Oh - and why I keep getting hit with requests that are returning absolutely useless responses 4 days after rolling this out, that remains a bit of a mystery...