Let's start with a quick quiz:

Take a look at haveibeenpwned.com (HIBP) and tell me where the traffic is encrypted between:

You see HTTPS, which is good, so you know it's doing crypto things in your browser, but where's the other end of the encryption? I mean, at what point is the traffic decrypted? Many people would say it's at the web server, but it's not; it's upstream of there, at Microsoft's appliances that sit in front of the web application PaaS offering. You might see a padlock, but your traffic is not encrypted all the way to the server.

But it's not just HIBP and it's not just Microsoft either; many of the websites you visit every day will show you a padlock and not encrypt every segment of the network. For example, there may be unencrypted segments where caching appliances are involved or where security devices are inspecting traffic. That may be within private or public networks; the padlock icon gives you no assurance of that. And even if the traffic is encrypted all the way to the web server (also known as "the origin"), it may not then be encrypted when it goes to the database. Green bits in address bars make no assurances of that.

HTTPS is not an absolute state; it's not "you have it and everything is perfect" or "you don't and it all sucks". But there are some who believe just that and they neglect the more complex fabric of not just how we compose applications, but who is composing them and where the greatest risks we're facing today lie. This is what I mean by "unhealthy security absolutism" and it's a position I'd like to comprehensively squash here.

The mechanics of CloudFlare

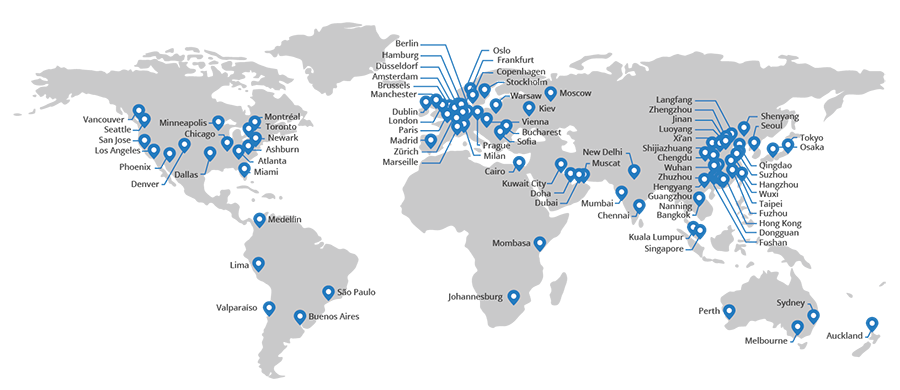

Dissenting voices are frequently directed at CloudFlare, the providers of a free service that wraps around your website and does everything from caching your content at edge nodes to blocking various attacks to allowing you to serve resources to your visitors over HTTPS. I've written in detail about the mechanics of this in the past and even created a Pluralsight course on Getting Started with CloudFlare Security. Needless to say, I've spent a lot of time thinking about CloudFlare.

The basic premise is that you create an account, set up a new site that takes a copy of all your DNS records, then update your name servers to use theirs. That's it, job done. It's literally a 5-minute exercise and the most complex part of it for most people will be figuring out where to change those name servers with their registrar. Once you've done that and DNS propagation magic takes hold, traffic is now routing through CloudFlare's infrastructure. Because the service is a globally distributed CDN, it means that visitors will be hitting an edge node in one of these locations:

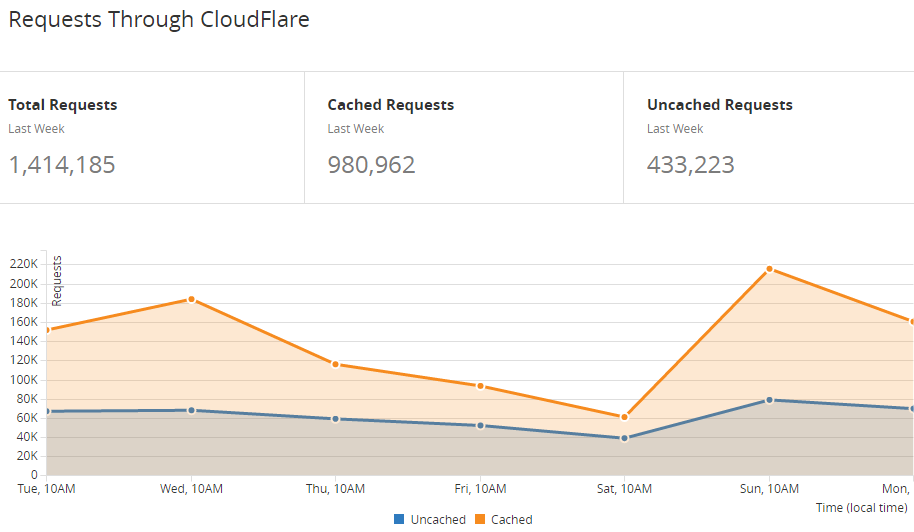

By operating as a CDN, CloudFlare can do some pretty neat caching tricks. For example, you're reading this now on a page that was served through their infrastructure and a bunch of the content on this page (images, style sheets etc.) would have been served directly by them, not by the website at the back end:

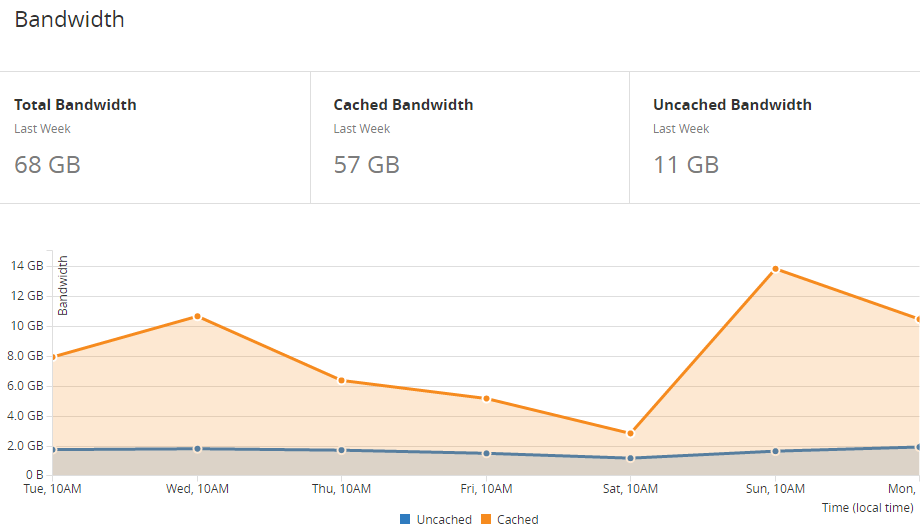

That's 69% of my requests that didn't need to hit my website. That's also 57GB in that same period and remember, this is all on a highly optimised website and during a fairly normal traffic period. This is particularly important for those paying for bandwidth as it slashes that cost by 84%:

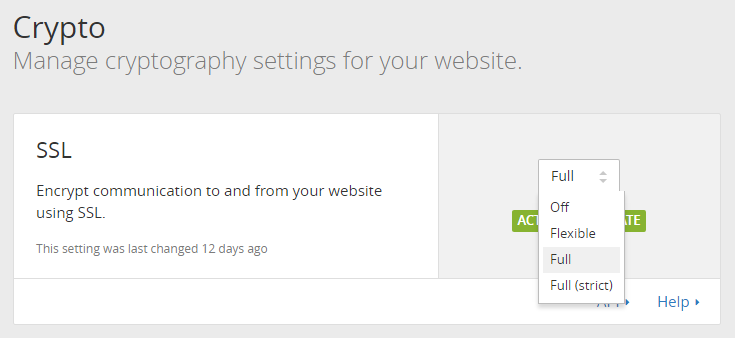

Even though my site is served over HTTPS, CloudFlare can do caching tricks because they sit in the middle of the connection, encrypting and decrypting the traffic between the browser and the origin. They offer a number of different configurations for this, which work like this:

- Off: They won't serve anything over HTTPS

- Flexible: They'll serve content over HTTPS from their infrastructure, but the connection between them and the origin is unencrypted

- Full: Still HTTPS from CloudFlare to the browser but they'll also talk HTTPS to the origin although they won't validate the certificate

- Full (strict): CloudFlare issues the certificate and they'll intercept your traffic, but then it's all HTTPS to the origin and the cert is validated as well
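To make the four options concrete, here's a minimal Python sketch that models each mode as two properties of a network segment: is it encrypted, and is the server's cert validated? The `SslMode` enum, `Segment` type and helper functions are hypothetical names for illustration only, not part of any CloudFlare API.

```python
from enum import Enum
from typing import NamedTuple


class SslMode(Enum):
    """The four CloudFlare SSL options described above (names are illustrative)."""
    OFF = "off"
    FLEXIBLE = "flexible"
    FULL = "full"
    FULL_STRICT = "full_strict"


class Segment(NamedTuple):
    encrypted: bool       # is the traffic on this hop inside TLS?
    cert_validated: bool  # does the client on this hop verify the server's cert?


def browser_segment(mode: SslMode) -> Segment:
    # Browser -> CloudFlare edge: HTTPS in every mode except Off,
    # and browsers always validate the edge certificate.
    https = mode is not SslMode.OFF
    return Segment(encrypted=https, cert_validated=https)


def origin_segment(mode: SslMode) -> Segment:
    # CloudFlare edge -> origin.
    if mode in (SslMode.OFF, SslMode.FLEXIBLE):
        return Segment(encrypted=False, cert_validated=False)
    if mode is SslMode.FULL:
        return Segment(encrypted=True, cert_validated=False)
    return Segment(encrypted=True, cert_validated=True)


def exposure(mode: SslMode) -> str:
    """Summarise what an attacker on the edge-to-origin segment can do."""
    seg = origin_segment(mode)
    if not seg.encrypted:
        return "passive eavesdropping and modification"
    if not seg.cert_validated:
        return "active interception with a substituted cert"
    return "no interception without a trusted cert"


for m in SslMode:
    print(f"{m.value:12} browser={browser_segment(m)} origin={origin_segment(m)} -> {exposure(m)}")
```

The browser-side segment is identical for Flexible, Full and Full (strict); the only thing the mode changes is the hop the visitor can't see.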

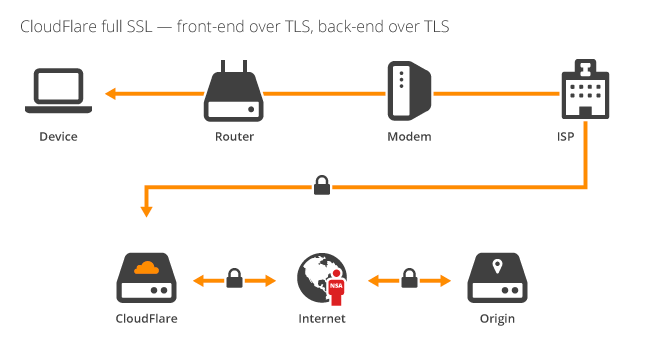

What it means is that you can choose how much SSL you want depending on what's supported by your origin. For example, the blog you're reading this on uses the "Full" model which looks like this (image courtesy of CloudFlare's blog post on the introduction of strict SSL):

In this model, everything between the browser and CloudFlare is encrypted; the router, the modem, the ISP and anything else between the person viewing the website and CloudFlare themselves is protected. Traffic between CloudFlare and the origin is also encrypted, but CloudFlare doesn't validate the cert. The reason is that I can't serve a valid cert from the origin for troyhunt.com; it's not a feature supported by Ghost Pro. However, I can serve a wildcard cert for *.ghost.io, and this alone protects against passive eavesdropping (an attacker listening on the wire), but not active interception where an attacker inserts their own cert. They could do this because CloudFlare doesn't validate it.
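The difference between "Full" and "Full (strict)" comes down to whether the client on the edge-to-origin hop verifies the certificate it's handed. Here's a minimal sketch using Python's standard `ssl` module to show the two client configurations; it's an analogy for what an edge proxy does, not CloudFlare's actual implementation, and the function names are illustrative.

```python
import socket
import ssl


def full_context() -> ssl.SSLContext:
    """Like "Full": encrypt, but accept any certificate (e.g. *.ghost.io for troyhunt.com).

    This defeats a passive eavesdropper, but an active MitM can present
    its own self-signed cert and this client will happily accept it.
    """
    ctx = ssl.create_default_context()
    ctx.check_hostname = False       # must be disabled before setting CERT_NONE
    ctx.verify_mode = ssl.CERT_NONE  # no chain or hostname verification
    return ctx


def strict_context() -> ssl.SSLContext:
    """Like "Full (strict)": the cert must chain to a trusted CA and match the hostname."""
    return ssl.create_default_context()  # CERT_REQUIRED and check_hostname=True by default


def fetch_peer_cert(host: str, ctx: ssl.SSLContext, port: int = 443) -> dict:
    """Open a TLS connection and return the peer cert (an empty dict if it wasn't validated)."""
    with socket.create_connection((host, port), timeout=5) as sock:
        with ctx.wrap_socket(sock, server_hostname=host) as tls:
            return tls.getpeercert() or {}
```

With `strict_context()`, connecting to an origin whose cert doesn't match the requested hostname raises `ssl.SSLCertVerificationError`; with `full_context()` the handshake succeeds regardless, which is exactly the gap an active attacker can exploit.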

If I could serve a valid cert from my blog's origin then most of the anti-CloudFlare arguments would go away, at least those related to interception on the wire. Some opponents would still argue that because decryption (and then encryption) is happening within CloudFlare itself, their having access to the traffic is unacceptable. In some cases, they're probably right, but if you don't want to spend big bucks setting up your own edge caching and optimisation infrastructure, then you're left with using a provider like CloudFlare or Akamai or one of several others, all of whom will still have access to your traffic.

However, there are many people using CloudFlare to wrap SSL around their assets who have no ability to encrypt at the origin at all. They can't do "Full" and they certainly can't do "Full (strict)", which means the network segment between CloudFlare and the origin remains open to monitoring and interception. It's predominantly the "Flexible" and "Full" models that opponents would proverbially throw out with the bathwater, and that's the absolutism I want to tackle here.

Risks on the transport layer

Let's start by clarifying why we need an encrypted transport layer in the first place, the typical risks we see and who the various threat actors are.

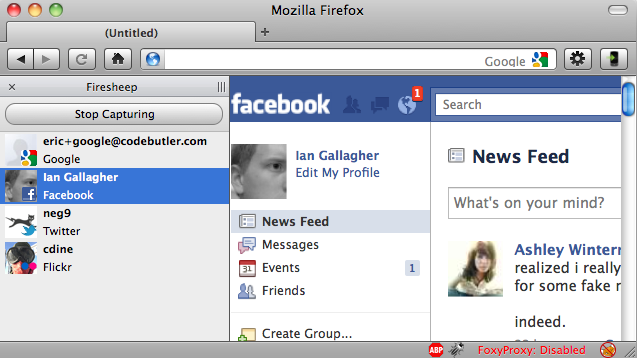

When we talk about encrypting in transit, we're primarily talking about protecting against the threat of a "man in the middle", or MitM as you'll commonly see it referred to. This risk comes in many different forms; for example, there's the now-infamous Firesheep extension from 2010, which enabled someone to hijack the Facebook sessions of people around them on an unencrypted network:

There's the ongoing scourge of poorly protected router admin interfaces, which are vulnerable to DNS modifications via cross-site request forgery (CSRF). That's the risk that just keeps on giving!

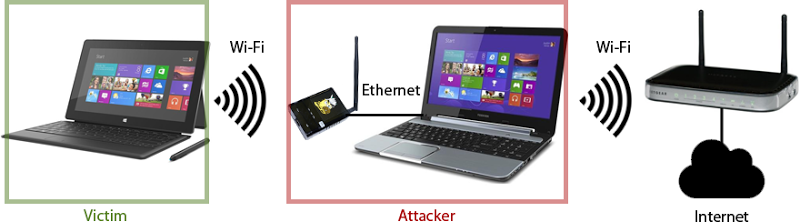

A particularly accessible way of intercepting traffic is with a device like the WiFi Pineapple that I've used in so many of my conference talks around the world. It's an exceptionally trivial attack to mount:

There are even airlines (and many other service providers) who'll hijack your traffic and modify it to inject their own content:

Oh @Fly_Norwegian ... you didn't just do that?!? /cc @troyhunt pic.twitter.com/1QpsOlUDxX

— David Peter Hansen (@DPHansen) January 10, 2016

Just think about all the points of exposure where normal everyday people face traffic interception risks; I've covered personal routers being DNS hijacked, but think about the cafes people visit, the airports they travel through, the hotels they stay in and any number of other points close to the user that put them at risk. On a website like this one you're reading now, there are many, many points between you and CloudFlare where traffic can be intercepted and then read or modified. Further to that, the difficulty of doing so is not necessarily high; casual hackers and unsophisticated criminals alike can access many of these methods.

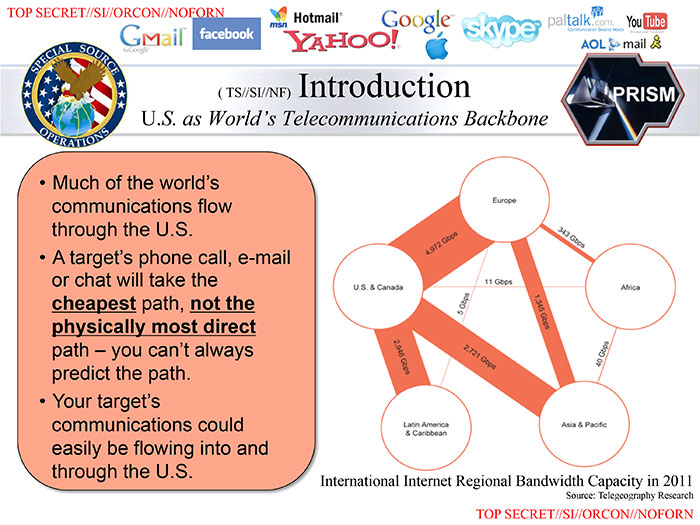

But going back to the earlier diagram, the segment between CloudFlare and the origin remains at risk, either via passive monitoring in the "Flexible" model or active interception in the "Full" model. So who are the "threat actors" in that segment of the network? The most important thing to remember (and I suspect this is what opponents of the model neglect) is that we're talking about the internet backbone now, and that's an entirely different class of bad guy.

An example of this is the Tunisian government's interception of the Facebook login page in 2011. They controlled the internet backbone going in and out of the country, so they could modify the traffic way upstream of all the other risks I just outlined. In some ways that's not such a good example, because CloudFlare doesn't have an edge node in Tunisia, so all the data from citizens in that country would leave the nation encrypted anyway. But there's a better example:

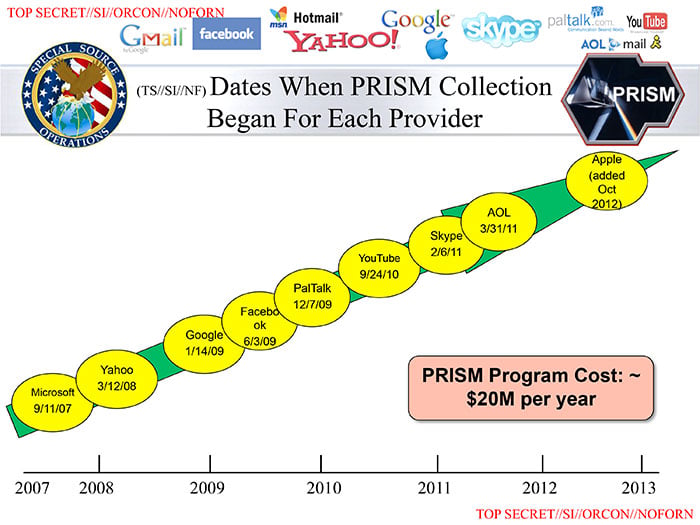

We know now, quite emphatically, that the NSA and counterparts in other countries have been involved in mass surveillance at the internet backbone level. That means that they could be watching you read this blog post right now! But let's delve deeper into the government risk, and indeed the risks that remain in a "Flexible" or "Full" CloudFlare SSL model, because it's pivotal to this post.

The government can get your things

There are two sides to the government interception issue I want to touch on and the first is simply this: is government interception a risk for your website?

This is a really important question and it acknowledges the premise that security defences should be commensurate with the risk you're trying to defend against. In the earlier Tunisia example with Facebook, yes, government interception is a real risk as the data transiting the network provides a genuine upside to them. In the case of my blog, no, I don't believe that's a risk of any magnitude; I'm more worried about modification to serve malicious content or possibly phishing material.

The second side to consider is if there's a genuine intent by the government to gain access to data, what channels exist regardless of the ability to intercept plain text communications between CloudFlare and the origin?

Beginning with an NSA slide again, the Snowden leaks revealed they were extracting data directly from the origin anyway:

We don't want to unnecessarily give up our privacy by not protecting traffic to the fullest extent possible (and I will come back to that distinction), but let us also not be under the illusion that SSL alone solves that problem.

Even with the presence of encryption to the origin, an MitM can still observe the HTTPS CONNECTs the browser makes at the proxy level. It won't show what's in the request, but it will show who they're talking to, so there's your metadata. In case you think that doesn't matter, remember that governments kill people based on metadata, and in many ways it's more valuable than the message contents themselves.
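To see what metadata leaks, consider what a browser configured to use an HTTP proxy sends before any TLS handshake begins. This minimal Python sketch builds that CONNECT request (the format follows the HTTP CONNECT method) and shows what an observer can parse from it; the hostname is just an example, and the function names are illustrative. Even without a proxy, the destination name typically also travels in cleartext in the TLS SNI field.

```python
def connect_request(host: str, port: int = 443) -> bytes:
    """Build the HTTP CONNECT request a client sends to a proxy to open a TLS tunnel.

    All of this travels in plain text: the proxy (or anyone watching the
    client-to-proxy link) learns exactly which host the user is talking to,
    even though the path, headers and body inside the tunnel stay encrypted.
    """
    authority = f"{host}:{port}"
    return (
        f"CONNECT {authority} HTTP/1.1\r\n"
        f"Host: {authority}\r\n"
        "\r\n"
    ).encode("ascii")


def visible_metadata(raw: bytes) -> str:
    """What an observer can read from the CONNECT line: the destination host and port."""
    request_line = raw.split(b"\r\n", 1)[0].decode("ascii")
    method, authority, _version = request_line.split(" ")
    if method != "CONNECT":
        raise ValueError(f"not a CONNECT request: {request_line!r}")
    return authority


req = connect_request("www.troyhunt.com")
print(visible_metadata(req))  # www.troyhunt.com:443
```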

Then there's CloudFlare themselves, or any other similar provider that supports edge caching (I mentioned Akamai earlier). They're still decrypting and re-encrypting traffic even when they're talking to the origin in the most secure way possible ("Full (strict)", in CloudFlare nomenclature), and should they be subject to the same sort of PRISM data collection as all the others were, or to a legal court order to obtain information, then they have the ability to access your traffic. That's an important observation too: there are classes of website that, rightly or wrongly, shouldn't be using CloudFlare at all, as this sort of scenario poses too great a risk to them.

Take it a step further and we have the whole DigiNotar situation with Iran a few years back. Now, it's arguably because SSL works so well that they had to go to these lengths in the first place, but the point remains that when your adversary is a nation state, they can be exceptionally resourceful.

But what about that Pirate Bay thing in India?

There was a really well-written piece recently on how an Indian ISP (Airtel) was intercepting CloudFlare traffic destined for The Pirate Bay (TPB) in order to block access to it. The author of this post was able to prove pretty emphatically that whilst his connection to CloudFlare was solid, upstream of CloudFlare, when they were connecting to The Pirate Bay origin, things were being MitM'd. Based on CloudFlare's response to the incident, it appears that TPB had configured CloudFlare to use the "Flexible" model of SSL; in other words, no encryption upstream of their edge nodes whatsoever.

In case you've been living in a cave, TPB has been an enormously popular site for the last decade and a bit, and has served primarily to distribute copyrighted material. There's a good reason it has "pirate" in the name: the site has previously been shut down, its operators have been jailed and many different countries have blocked it at various times. TPB is exactly the sort of site that piques the interest of governments.

In the blog post about CloudFlare and TPB, Airtel is quoted as saying that they will block content "on orders from the government or the courts". Kinda like, well, pretty much anywhere. Now Airtel's choice of words was poor (they begin by saying they don't block), but the bottom line is just like Australia or the UK or US or anywhere else I can think of, ISPs are beholden to the orders of local authorities.

Frankly, the whole CloudFlare thing is a bit pointless because the government could easily block HTTPS connections to sites anyway, but what this article does show us is exactly what CloudFlare have always said is possible: that an MitM can modify upstream traffic from them if you're not using "Full (strict)" SSL. It's precisely what's shown in the earlier diagram of their Full SSL implementation, except instead of the NSA you have Airtel at the behest of the Indian government. This event isn't news; it's simply a demonstration of what we already know.

Without necessarily condoning what they're doing here, the biggest mistake made was TPB not saying "Hey, maybe we're the sort of site that might be at risk of government interception, perhaps our threat model should recognise that". In that case they would have enabled Full (strict) or possibly have even not used CloudFlare at all and served content from the origin (but then of course their bandwidth costs go way up and perf drops). They could still be blocked anyway, but that would have meant this is no longer a CloudFlare story and it simply becomes "Indian government blocks TPB access" in just the same way as so many other countries block it.

It's places like this where we need to cut through the FUD and objectively look at what's actually going on. Anyone jumping up and down and decrying that the CloudFlare model is fundamentally wrong is completely missing not just the point of the whole thing, but how the technology actually works.

You wouldn't want your credit cards going over there, would you, huh, what about that?!

I've heard this a few times from opponents of CloudFlare and they're right - I wouldn't want my credit card going out in the clear - but that's not permissible anyway. PCI DSS disallows it already so it's a moot argument. However...

Think back again to the threat actors who can access data upstream of CloudFlare's edge nodes. The government or operators of internet backbones could access the card data. I don't necessarily want that to happen, but they're not the guys I'm worried about. I'm worried about those who'd seek to commoditise my card data, so we're talking about criminal elements who, whilst very resourceful, are far less likely to have access to that segment of the network.

But more than that, merchants taking credit card payments can usually justify the expense of SSL on the origin anyway. And if they don't, they get the PCI hammer for not being compliant so there are well and truly already enough constructs here keeping card data in check.

Again, we're back to commensurate security measures for the asset being protected and the actual security risks faced.

There should be a response header that indicates where the SSL terminates

One request I've heard a number of times is to indicate what is encrypted where in a publicly observable fashion, such as via a response header. This has merit, but it's also not straightforward.

To begin with, what does it actually say? Ideally, you don't want a vendor-specific header talking about what CloudFlare does so you need to create a spec that's more agnostic. You could possibly indicate if the node that's done the TLS termination is relaying traffic over another encrypted connection (and then whether it's "Full" or "Strict"), but what happens after that? What if there are multiple points of encryption / decryption due to various caching layers or other app design decisions? What if termination occurs in a network other than the one hosting the origin? Or in the same network but some traffic flows in the clear to the web server after termination?
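One way to see why a single header struggles here is to model the request path as a chain of hops and ask what one value could honestly say about it. The `Hop` type, the helper functions and the example chain below are hypothetical, purely to illustrate the multi-layer case just described.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hop:
    src: str
    dst: str
    encrypted: bool
    validated: bool


def end_to_end_status(path: list[Hop]) -> str:
    """Collapse a whole path into one word, the way a single header would have to."""
    if all(h.encrypted and h.validated for h in path):
        return "strict"
    if all(h.encrypted for h in path):
        return "full"
    return "partial"


def weak_hops(path: list[Hop]) -> list[str]:
    """List the segments that a single summary value would hide."""
    return [f"{h.src} -> {h.dst}" for h in path if not (h.encrypted and h.validated)]


# Hypothetical path: edge -> cache layer -> load balancer -> web server.
path = [
    Hop("browser", "edge", encrypted=True, validated=True),
    Hop("edge", "cache", encrypted=True, validated=True),
    Hop("cache", "load balancer", encrypted=True, validated=False),
    Hop("load balancer", "web server", encrypted=False, validated=False),
]

print(end_to_end_status(path))  # partial
print(weak_hops(path))          # ['cache -> load balancer', 'load balancer -> web server']
```

Note that whichever component emits the header only really knows about the hop it initiated itself; in this example the edge would truthfully report a validated connection while the weak segments sit further in.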

From a transparency perspective, I like the idea of providing some information and I'll come back to that a bit later on. But from a practicality perspective not only is it difficult to implement in a way that actually makes sense, it's not going to change user behaviour when they arrive at the site. It would allow those of us who look for these things to hold the site more accountable (and that's a good thing), but it would make very little difference to the overall landscape of how SSL is implemented.

Your traffic may be fully encrypted to the origin anyway

CloudFlare does much more than just allow you to (allegedly) cheat at SSL. They do a whole bunch of performance optimisations too, one of which is edge caching. By storing static content on edge nodes, they can avoid the additional hop between their infrastructure and your infrastructure, which is why my earlier graphs look so nice. You can configure caching in a variety of different ways depending on how aggressive you want to be.

The point is though, many requests won't even hit your origin, in fact they won't even leave CloudFlare's infrastructure. You could browse an entire site like this one and never actually hit Ghost's platform - it's like, 100% secure! (No, not really, but whilst people are enjoying talking in absolutes...)
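Here's a toy model of that effect: a minimal edge cache that answers repeat requests for static assets itself and only forwards misses to the origin. The `EdgeCache` class, the extension-based cacheability rule and the sample request list are all assumptions for illustration; CloudFlare's real caching rules are configurable and considerably more involved.

```python
from collections import Counter
from pathlib import PurePosixPath

# Hypothetical rule: cache static assets by file extension, never HTML pages.
CACHEABLE_EXTENSIONS = {".css", ".js", ".png", ".jpg", ".svg", ".woff2"}


class EdgeCache:
    def __init__(self) -> None:
        self.store: dict[str, bytes] = {}
        self.stats: Counter = Counter()

    def fetch_from_origin(self, path: str) -> bytes:
        # Stand-in for the edge -> origin hop, the only hop that may be unencrypted.
        self.stats["origin"] += 1
        return f"content of {path}".encode()

    def get(self, path: str) -> bytes:
        if path in self.store:
            self.stats["edge"] += 1  # served without ever leaving the edge
            return self.store[path]
        body = self.fetch_from_origin(path)
        if PurePosixPath(path).suffix in CACHEABLE_EXTENSIONS:
            self.store[path] = body
        return body

    def origin_share(self) -> float:
        """Fraction of requests that crossed the edge-to-origin segment."""
        total = self.stats["edge"] + self.stats["origin"]
        return self.stats["origin"] / total if total else 0.0


edge = EdgeCache()
for r in ["/", "/site.css", "/logo.png", "/site.css", "/logo.png", "/post", "/site.css"]:
    edge.get(r)
print(edge.stats)                      # Counter({'origin': 4, 'edge': 3})
print(f"{edge.origin_share():.0%}")    # 57%
```

Only the cache misses ever touch the segment the "Flexible" and "Full" debates are about; everything else stays on the encrypted browser-to-edge leg.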

The web is more complex than whether you have 100% security all the time or none at all. Nuances like this are all part of the plumbing that makes the whole thing work and this is but one example. Now you could always add yet more headers to indicate where the traffic was last served from, and continue down this slippery path of trying to map out the route of the request, but this is yet another illustration that the view of CloudFlare being "insecure" is much more nuanced than some people think.

Why don't you just do it properly?

I would love for everyone to do it "properly". I'd like to see every website with an A+ rating from SSL Labs and full transport layer encryption all the way back to the origin. I'd like to solve world hunger, poverty and get rid of Trump but none of those things are going to happen in one go (ok, maybe Trump), they all require gradual steps in the right direction.

The reason we can't jump straight to the SSL destination is that the service providers still make it hard. Yes, I could give up on Ghost Pro and self-host on the Azure website service (oh crap, I'd still be behind their shared SSL termination device!) and be perpetually responsible for patching and running an app platform, which is way more risky (just read my piece on self-hosted vBulletin if that sounds weird). Only a year ago I explained how we're struggling to get traction with SSL because it's still a "premium service", and this is still true. Now, there have been some great steps forward since then, such as Let's Encrypt getting off the ground, but even then it can be a lot of hard work to get running.

People will make ROI decisions on how many dollars and how much effort are required, and they'll assess that against the perceived value. People are generally terrible at doing that, particularly the value piece, so the more we can keep cost and effort down, the easier the whole equation becomes. In this instance, "properly" can mean trade-offs; it might mean not using a service or hosting provider that can't encrypt to the origin, or it might mean financial, usability or geo-location compromises. Sometimes these will make sense, but that's a much more holistic discussion than just "always do your SSL perfectly".

Why you should be using CloudFlare

First and foremost, if your choices are to either run entirely unencrypted or to protect against the 95% (or thereabouts) of transport layer threats that exist between your visitors and your origin, do the sensible thing. Nobody in their right mind is going to advocate for remaining totally unencrypted rather than using CloudFlare purely to encrypt between their edge nodes and your users. There are people not in their right mind that will argue to the contrary and that's precisely what the title of this post suggests - it's unhealthy security absolutism.

Secondly, remember that you're getting many other things out of the box with CloudFlare including all that edge node caching goodness. As I said earlier, if you're paying for bandwidth and you can shave the vast majority of that off your origin for free and serve your content fast then that's a serious advantage. That should be in your ROI somewhere.

And finally, as I recently wrote, HTTPS served over HTTP/2 has a massive speed advantage, and you get that from CloudFlare even if your origin doesn't support HTTP/2. Of course, it only makes sense for the requests that aren't served from your old HTTP/1.1 origin, but the origin-served requests are a small portion of them anyway.

I'm aware of how evangelical this sounds so for the sake of total transparency, I'm not incentivised by CloudFlare in any way and they've never paid me for anything or given me any free or discounted services. When I really like a technology, I get excited about it and that combined with the counterproductive attitudes I've mentioned throughout this post are what's led me to write it.

If I were CloudFlare, I would do these things:

Now as much as I think opponents have what we refer to down here as "some kangaroos loose in the top paddock", I also think there are opportunities for CloudFlare to help raise the bar.

For example, they could check the highest level of SSL that's available to the origin when the crypto is configured. See if there's a valid cert, see if there's an invalid cert and make a recommendation to the customer about how best to secure their site. Help them fall into the pit of success!
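As a rough illustration of that check, here's a minimal Python sketch that probes an origin and maps what it finds onto a recommended mode. The probing order, the function names and the recommendation wording are assumptions about how such a feature might work, not anything CloudFlare ships. It connects to the origin's IP directly (because once a site is behind CloudFlare, its hostname resolves to the edge) and uses the local default trust store as a stand-in for whatever CAs the edge trusts.

```python
import socket
import ssl


def recommend_mode(tls_available: bool, cert_valid: bool) -> str:
    """Map probe results onto the strongest SSL option the origin supports."""
    if tls_available and cert_valid:
        return "Full (strict)"
    if tls_available:
        return "Full (consider a valid origin cert to enable Full (strict))"
    return "Flexible (origin is plain HTTP; the edge-to-origin hop is exposed)"


def _handshake(origin_ip: str, hostname: str, ctx: ssl.SSLContext) -> bool:
    """Return True if a TLS handshake with the origin succeeds under this context."""
    try:
        with socket.create_connection((origin_ip, 443), timeout=5) as sock:
            with ctx.wrap_socket(sock, server_hostname=hostname):
                return True
    except OSError:  # covers ssl.SSLError, refused connections and timeouts
        return False


def probe_origin(origin_ip: str, hostname: str) -> str:
    """Probe by IP, presenting the site's hostname via SNI, and recommend a mode."""
    lax = ssl.create_default_context()
    lax.check_hostname = False
    lax.verify_mode = ssl.CERT_NONE
    tls_available = _handshake(origin_ip, hostname, lax)
    cert_valid = tls_available and _handshake(origin_ip, hostname, ssl.create_default_context())
    return recommend_mode(tls_available, cert_valid)
```

Usage would look like `probe_origin("203.0.113.10", "example.com")`, where both values are placeholders for the origin's real IP and the site's hostname.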

I also think they could give a clearer indication of what's encrypted and what's not at the point where the site is configured. I like their flow diagram with the NSA in it and I'd love to see that facing the customer as they set up their site. Make it impossible for them not to fully grasp the consequences of what they're doing.

And finally, even though I think it's of minimal value, add the chosen encryption model as a response header. Obviously it'd be a non-standard header at this point but something like this would go a long way to establishing transparency:

x-cloudflare-crypto: full
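If such a header existed, anyone inspecting responses could act on it. The sketch below assumes the hypothetical `x-cloudflare-crypto` header proposed above (it is not a real CloudFlare header) and an invented set of values based on the mode names used earlier; it takes a plain mapping of response headers so it runs without a network call.

```python
from typing import Mapping

# Hypothetical header name and values, as proposed above; not a real CloudFlare feature.
HEADER = "x-cloudflare-crypto"
DESCRIPTIONS = {
    "off": "no HTTPS anywhere",
    "flexible": "HTTPS to the edge only; edge-to-origin is plain text",
    "full": "HTTPS to the origin, but the origin cert isn't validated",
    "strict": "HTTPS to the origin with a validated cert",
}


def describe_crypto(headers: Mapping[str, str]) -> str:
    """Translate the hypothetical header into a human-readable summary."""
    # HTTP header names are case-insensitive, so normalise before looking up.
    lowered = {k.lower(): v for k, v in headers.items()}
    value = lowered.get(HEADER)
    if value is None:
        return "unknown (header not present)"
    return DESCRIPTIONS.get(value.strip().lower(), f"unrecognised value: {value!r}")


print(describe_crypto({"X-CloudFlare-Crypto": "full"}))
```

A missing header tells the reader nothing at all, which is part of why the value of this is limited: it helps those who go looking, not the average visitor.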

One important thing CloudFlare has done this year that's worth recognising is the introduction of their origin CA offering. If your origin has the ability to do Full (strict) but you don't have the cert (or don't want to pay for it), CloudFlare will give you a free one. There are actually reasons why this makes even more sense than going out and spending money with an incumbent CA, and you can read why in that link; the point is that they genuinely are attempting to raise the bar at all points in the transport layer, and that's something that I hope even the naysayers will recognise.

Summary

We're on a journey to ubiquitous encryption both in transit and at rest. CloudFlare's Flexible SSL model is not the destination, it's merely a step along the way. For some use cases it won't be a step far enough - those with PCI obligations, for example - yet for so many others, moving to CloudFlare regardless of what happens behind their infrastructure is a massive step forward; the site is now SSL friendly, content is embedded correctly, it might mean enabling HSTS, secure versions of the URL are socialised and a huge portion of the hard work of going secure is done. When it does become a no-brainer to encrypt back to somewhere closer to the origin, that one further step is going to be a piece of cake. 86% of the Alexa Top 1 million websites aren't served over HTTPS - any SSL is a move in the right direction for them, we're merely debating how far they should move in one go.

Unhealthy security absolutism does not move us in the right direction. It delays progress and ultimately undermines the very objective those doing the complaining claim to aspire to. In the process of bemoaning "but it's not absolutely perfect", the people trying to actually deliver working software to the web with appropriate security controls get exposed to the ugly side of security that insists on perfection at all costs. Everyone loses and that's just not a healthy state to be in.