So the Ethical Hacking series marches on, this time with my third course in the series, Ethical Hacking: Hacking Web Applications. As a quick recap of why we’re doing this series, Ethical Hacking material remains the number one requested content on Pluralsight’s course suggestion list. It’s more in demand than all the new shiny Microsoft .NET bits or fancy cloud services and even more popular than JavaScript libraries!

Why is it so popular? Just take a look at some of the events of last week. The big one over in the UK was TalkTalk suffering a rather nasty data breach. I found this particularly interesting because prior experience only last month had shown they have somewhat of a “quaint” view of fundamental security principles:

Can anyone help @TalkTalkCare out with some candid feedback? https://t.co/P4rYh7rH3H

— Troy Hunt (@troyhunt) September 1, 2015

Now I don’t think for one minute that the inability to paste passwords securely from a password manager is what brought them undone, but you do tend to find that cultures of security ignorance can be pervasive across the systems an organisation builds. Watching the post-breach handling of the incident can also be telling, such as this comment from their CEO in a video interview:

Unfortunately, cybercrime is the crime of our generation

And then a brief bit of “well every company’s defences could be stronger” and some stats on how basically everyone is getting pwned these days anyway, followed by numerous “very sorry” statements. In some cases, a company’s defences could be a lot stronger:

Good news, @TalkTalkCare have tried to implement TLS for webmail (at last). Bad news is, "they take it seriously" pic.twitter.com/UJE1xPkywe

— Paul Moore (@Paul_Reviews) October 23, 2015

Then there was the Essex police who tweeted some friendly cyber-advice:

Uh, hang on, is that…? And the URL linked off to an assumedly malicious file. So here we have a verified Twitter account owned by those responsible for helping us protect our cyber-things and they’ve gone and used a bad password on their social media (which is almost certainly the root cause of the errant tweet).

Then there was Xero who sent me out an email about phishing which I thought was particularly interesting given the discussion I’d had with them only a few short weeks ago:

I spoke to @Xero last month: "You guys seriously need 2FA" "Don't worry, an attacker actually needs your password" pic.twitter.com/j3IjGIww0l

— Troy Hunt (@troyhunt) October 23, 2015

Here’s the relevance of all these incidents to the new course: everything you see here is a result of humans making poor decisions. TalkTalk doesn’t understand some fundamental security principles, the cops missed “Passwords 101” in their induction and Xero didn’t feel my financial information deserved the same level of security as you get when you tweet about what you had for breakfast. Evidently, we have an education problem.

I had a bunch of queries from reporters last week about these incidents and a few others that came up, and one of the things they frequently like to ask is “What advice would you give CIOs to protect their companies from these attacks?” I always – always – reply that the very first thing that has to happen is education. Yes, I know they’d like to hear “Install this security-in-a-box solution and everything will be fine”, but that’s just not how it works.

Just the other day I even had one journo ask if it might be better for orgs without security competency to use a service like OpenID to save them from screwing up the password implementation. That’s like suggesting it’s ok for my 6-year-old to drive the car so long as he wears a helmet! No, it’s not ok, and the first thing that any responsible CIO should be doing if they come to the realisation that their team is ill-equipped security-wise is to fix the underlying problem!

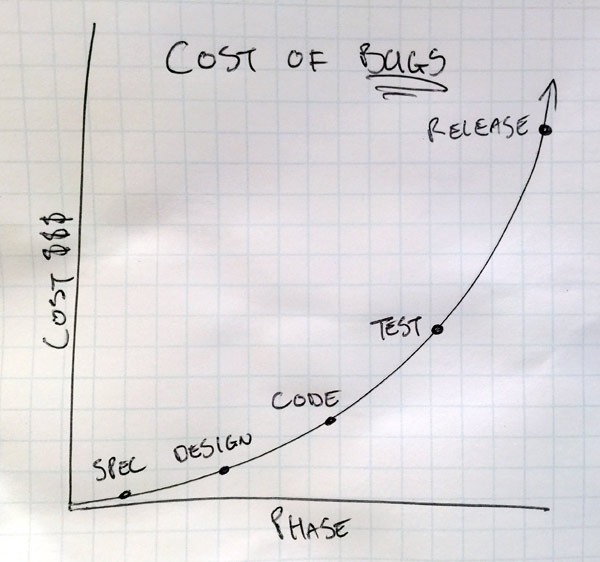

Building up competencies in this area is not just about saving an organisation from being the next breach statistic, it also makes good financial sense across the lifecycle of an app. I shared this diagram recently when I wrote about one of the workshops I was doing:

It’s a long-held truism that the cost of any sort of bug increases exponentially as you proceed down the software development lifecycle path. Just think about it, and we’ll take a security example here: someone on the development / architecture / management team decides to store passwords as salted SHA-1 (yes, sometimes management does actually make this decision…). This is bad; in fact it’s almost useless for protecting passwords, and what we really want to see is a modern hashing algorithm designed for storing passwords (OWASP has a great cheat sheet) such as bcrypt. Now let’s imagine the impact at various phases of the software development lifecycle:

In the design phase, it’s usually just a case of saying “perhaps when we actually get around to building this, we should do it differently”. No biggie, the effort is really no different and the change in direction hasn’t cost you anything.

But get to the coding phase and change your mind and now you’re undoing stuff. Code has been written that needs to be rewritten, discussions have been had that are now irrelevant and the cost of the original poor security decision must be worn.

Production is where it really starts to hurt – you’ve got a live system with salted SHA-1 and you need to change to bcrypt. You can’t un-hash the passwords because a hash is a one-way algorithm, so you’re left with a few choices of varying pain. You can wrap the existing SHA-1 hashes in bcrypt, but now you’ve permanently got the “code smell” of SHA-1. You could just hash passwords with the new algorithm when people successfully authenticate and you have access to the one they provided you in the clear, but now you have a database of multiple different password storage mechanisms, which is also smelly code, but at least you’re heading in the right direction. Another option again is just to roll over to the new system and tell everyone they have to reset their password, but, well, don’t do that!
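To make the “upgrade when people successfully authenticate” option a bit more concrete, here’s a minimal sketch in Python. A few loud assumptions: the `record` dictionary and its `scheme` / `salt` / `hash` keys are hypothetical stand-ins for a user row, the way the salt is concatenated in `legacy_hash` is just one guess at what a salted SHA-1 scheme might look like, and PBKDF2 from the standard library is swapped in for bcrypt purely so the example runs without a third-party package. The iteration count is illustrative, not a recommendation.

```python
import hashlib
import hmac
import os

# Illustrative work factor only; a real system would tune this for its hardware.
PBKDF2_ITERATIONS = 200_000


def legacy_hash(password: str, salt: bytes) -> bytes:
    """The old scheme (assumed layout): one round of SHA-1 over salt + password."""
    return hashlib.sha1(salt + password.encode("utf-8")).digest()


def modern_hash(password: str, salt: bytes) -> bytes:
    """Stand-in for bcrypt: a deliberately slow, salted KDF from the stdlib."""
    return hashlib.pbkdf2_hmac(
        "sha256", password.encode("utf-8"), salt, PBKDF2_ITERATIONS
    )


def login(record: dict, password: str) -> bool:
    """Verify a password and upgrade a legacy record on success.

    `record` is a hypothetical user row with the keys scheme, salt and hash.
    """
    if record["scheme"] == "sha1":
        expected = legacy_hash(password, record["salt"])
        ok = hmac.compare_digest(expected, record["hash"])
        if ok:
            # This is the only moment we hold the password in the clear,
            # so re-hash it with the stronger scheme and a fresh salt.
            new_salt = os.urandom(16)
            record.update(
                scheme="pbkdf2",
                salt=new_salt,
                hash=modern_hash(password, new_salt),
            )
        return ok

    # Record has already been migrated.
    expected = modern_hash(password, record["salt"])
    return hmac.compare_digest(expected, record["hash"])
```

The `scheme` column is exactly the “multiple different password storage mechanisms” smell: it has to stick around until every account has logged in at least once, and anyone who never comes back stays on SHA-1.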

In the examples above, the organisations are realising that they made some poor decisions about security. It’s now an expensive decision to rectify not just because they’re in the production phase of the code, but because those poor security decisions may have led to a serious security incident. If only the developers had been given the opportunity for a little more training…

Ethical Hacking: Hacking Web Applications is now live – enjoy!