Someone sent me an email today which essentially boiled down to this:

Hey, Microsoft's Azure Active Directory alerted me to leaked credentials but won't give me any details so there's very little I can do about it

This is a really interesting scenario and it relates to the way Microsoft reports risk events, one of which is the discovery of leaked credentials that match those within AD. In other words, they've identified that someone used the same email address and password in multiple places and they've let the administrator of this particular AD instance know. As you can imagine from my work with Have I been pwned (HIBP), I have many thoughts on the subject. Rather than keep them to myself, I thought I'd dump them here because the whole thing raises a bunch of really interesting technical, ethical and legal issues.

What are we actually talking about here?

An increasingly large number of organisations are doing precisely what's described above - trawling the underbelly of the web, obtaining breached data then using it to better protect their own accounts. You think about it - if, for example, Amazon finds a dump of data that has thousands of their customers in there with the same username and password as on their own service, that's really useful info for them. We know this credential stuffing thing is rampant and I illustrated that last week with the folks trying to blackmail Apple (which incidentally, failed spectacularly and surprised no one beyond themselves).

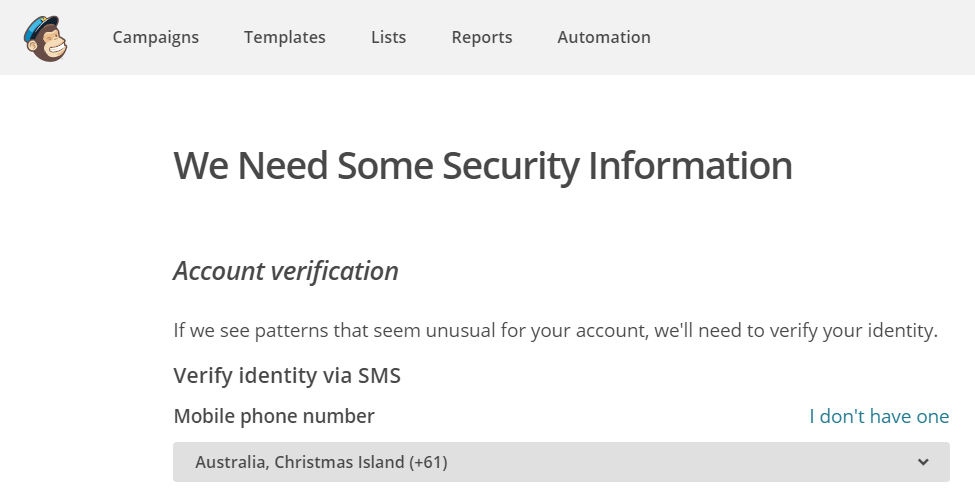

Knowing this is very useful to Microsoft or Amazon or Apple because now they can protect their customers. For example, they can force password resets. They can flag the account as being at higher risk and request additional verification at login, for example via SMS as MailChimp does.

They can do all manner of things to limit the ability for bad guys to log in to a customer's account, not only because it protects the customer but also because fraud costs the organisation dearly when it happens en masse. But there's a problem...

You've been pwned... (but we can't tell you where)

This was the crux of the message I was sent this morning and I've heard it many times before. All of these companies which are essentially saying that they've found their customers in a data breach won't tell them which one. That leaves the customer in the uncomfortable position of knowing that somewhere on the web their data has been taken and circulated, but they're at a loss as to where.

Think about the implications of this: we all have dozens of accounts online these days. Some of us have hundreds. If a company tells me that the username and password I use on their service just turned up in a breach of a totally unrelated system, I'm gonna have absolutely no idea where that other system is. (Incidentally, by virtue of using a password manager and creating unique passwords everywhere, this isn't going to happen to me, but you get the idea.) I wouldn't know which other system to change my password on. I wouldn't know what other info about me was just leaked. I'd have absolutely no idea as to the broader risk this now exposes me to.

This isn't a situation we've arrived at by accident. There are good reasons for it, so let's go through a few.

It's a legal minefield

Let's think this through for a moment and we'll imagine it's Amazon who's got their hands on the LinkedIn data. They notify their impacted customers that their data has appeared in a data breach and for the purposes of playing out this train of thought, they tell their customers it's LinkedIn.

One of the first issues here is that the legitimacy of this data in terms of who should hold it is grey at best. I - more than probably just about anyone - know that and believe me, I think about it a lot! A company like Amazon would be very wary about publicly acknowledging that they had another company's entire client base including their customers' passwords. They'd be worried about LinkedIn demanding they remove it, about common customers getting cranky that they were holding data they don't own and about either of those parties getting legal on them.

Imagine also how that plays out with the impending GDPR legislation which has some pretty tight rules about how personal data is handled. Now not telling people they have their data doesn't exactly absolve them of compliance obligations, but it's the sort of thing they probably don't want to outwardly discuss.

There's another legal issue around all this too, and that's this:

What happens if the breached company doesn't know they were breached?

There's a heap of data out there being traded around that's known fairly well in those circles but not known by the impacted company. Sometimes it's relatively tightly held, other times it's a new incident and public disclosure hasn't yet occurred.

Let's play out another hypothetical: imagine eBay got their hands on the VTech data. This was a very tightly held set of data and to this day, I'm quite confident only the hacker, Motherboard and myself ever saw it. But imagine for a moment that one of eBay's security folks got their hands on it, now what?

On the one hand, you could argue that eBay should notify VTech because that's the responsible thing to do. But now you're sort of projecting an online auction site into the realm of ethical disclosure to other organisations and that also brings with it a bunch of legal issues; can you imagine how many lawyers would be involved in a discussion like that?! Plus, it puts us back at the previous point in that this is very shadily obtained data and you've got a big multinational admitting they have it.

Yet on the other hand, eBay wants to protect their customers. They know bad guys have the data, they know people reuse passwords and they know that they could proactively protect accounts. But they can't "show their hand" to their customers and say "VTech got hacked and you reused your credentials" because not only will eBay customers start asking hard questions of VTech, it'll also end up all over the news.

And we haven't even touched on the technical aspects yet...

Password hashing makes it "interesting"

So you're one of these organisations attempting to protect your customers and you have a big whack of someone else's data you want to compare against yours. Email addresses are plain text in both so that's easy, but passwords are another story. We'll assume the organisation doing the retrieval of other people's data is using a strong hashing algorithm with a high work factor, say bcrypt. But it's what the other site is doing which makes things interesting.

If they stored passwords in plain text then you're going to need to grab every single one of those passwords which belongs to a common email address and hash it with your own algo and the corresponding salt for the account. You could exclude the ones that don't meet your own password rules but there's going to be plenty left and remember, you've got a strong hashing algorithm which means it's going to be slow going, especially if we're talking large amounts of data.
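As a rough illustration of that plain text case, here's a minimal sketch. It's not how any particular company does it: the function names, the 8-character password rule and the data shapes are all made up for the example, and PBKDF2 from the Python standard library stands in for bcrypt purely so the code has no third-party dependencies.

```python
import hashlib
import hmac
import os

# Hypothetical work factor; a real system would tune this (or use bcrypt).
ITERATIONS = 100_000


def hash_password(password: str, salt: bytes) -> bytes:
    """Hash with 'our own' slow algorithm (PBKDF2 as a stand-in for bcrypt)."""
    return hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, ITERATIONS)


def meets_password_rules(password: str) -> bool:
    """Assumed rule for illustration: our site requires at least 8 characters."""
    return len(password) >= 8


def find_reused(own_accounts: dict, breached: dict) -> list:
    """Return emails whose breached plain text password matches our stored hash.

    own_accounts: {email: (salt, stored_hash)} from our own system
    breached:     {email: plain_text_password} from the other site's dump
    """
    reused = []
    for email, plain in breached.items():
        record = own_accounts.get(email.lower())
        # Skip people who aren't our customers, and passwords that could
        # never have been accepted by our own rules (saves a slow hash).
        if record is None or not meets_password_rules(plain):
            continue
        salt, stored_hash = record
        # One slow hash per common account: this is the expensive part.
        if hmac.compare_digest(hash_password(plain, salt), stored_hash):
            reused.append(email.lower())
    return reused


if __name__ == "__main__":
    salt = os.urandom(16)
    own = {"alice@example.com": (salt, hash_password("correct horse", salt))}
    dump = {"alice@example.com": "correct horse", "carol@example.com": "whatever99"}
    print(find_reused(own, dump))
```

The cost is one slow hash per common email address that survives the rules filter, which is why the work factor that protects your own customers also makes this comparison slow going.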

But let's imagine the breached site hashed them - now it gets interesting. The immediately obvious answer is that you crack the other party's hashes but things now start to get extra murky. Firstly, I'm no lawyer but having another company's data is one thing, I imagine that actively trying to break a cryptographic protection such as a hash is quite another. Imagine Big Company A going "Yeah, we've got Big Company B's data and we're actively cracking all their hashes". That's a minefield.

But say they're doing it anyway and it's MD5 with no salt. No biggy, they'll tear through a big whack of those quite quickly. Add a salt and suddenly things are massively harder. Still relatively easy in the grand scheme of things when it's a fast algorithm like MD5 or SHA1, but it's going to take a heap longer. Do you invest in dedicated hash cracking gear like what Sagitta make? How far down the rabbit hole do you go? Because once you start getting to slow hashing algos like the aforementioned bcrypt, things start getting very hard. A heap of recent breaches have been in that category too - Ashley Madison, Dropbox, Ethereum and despite their many other shortcomings, even CloudPets.
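To show why a salt changes the economics, here's a small sketch of a dictionary attack against both cases. The wordlist, the function names and the salting scheme (MD5 of salt followed by password) are assumptions for the example; real breaches salt in all sorts of ways.

```python
import hashlib


def md5_hex(data: str) -> str:
    return hashlib.md5(data.encode("utf-8")).hexdigest()


def crack_unsalted(hashes: list, wordlist: list) -> dict:
    """Unsalted: hash each candidate word once, then look up every account."""
    lookup = {md5_hex(word): word for word in wordlist}
    return {h: lookup[h] for h in hashes if h in lookup}


def crack_salted(records: list, wordlist: list) -> dict:
    """Salted: the wordlist has to be re-hashed for every single record.

    records: list of (salt, hash) pairs, where hash = md5(salt + password)
    (an assumed scheme for illustration).
    """
    cracked = {}
    for salt, hashed in records:
        for word in wordlist:
            if md5_hex(salt + word) == hashed:
                cracked[(salt, hashed)] = word
                break
    return cracked


if __name__ == "__main__":
    words = ["123456", "password", "qwerty"]
    print(crack_unsalted([md5_hex("password")], words))
    print(crack_salted([("ab", md5_hex("ab" + "qwerty"))], words))
```

Without a salt the work is roughly one hash per candidate word regardless of how many accounts are in the dump. With a per-account salt it's up to one hash per candidate word per account, and swapping MD5 for something deliberately slow multiplies that again.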

Thing is, a good enough hashing algorithm across a large enough set of data is going to rule cracking out, at least in terms of resolving any reasonable number of credentials back to plain text. So you need to think about other options...

What if you didn't crack hashes?

If you had the plain text password, you wouldn't need to crack hashes to figure out if the one from another data breach matched the one in your own system. There's no magic involved in gaining access to your customers' plain text passwords, you just have to wait until they log in!

So here's the workflow: someone (not necessarily your customer) attempts to log in with a set of credentials. You hash the password with your own algo and establish whether the creds are correct, then you also hash it with the algo of the breach you want to compare against and see if it matches there (obviously the correct salt may also be required). If it matches then you know they're reused credentials, but then what?
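That workflow can be sketched in a few lines. Everything here is hypothetical: the `Account` class, the `high_risk` flag, PBKDF2 standing in for your own slow algorithm, and a breach that stored SHA-1 of salt plus password are all assumptions chosen to keep the example self-contained.

```python
import hashlib
import hmac
import os
from dataclasses import dataclass


@dataclass
class Account:
    salt: bytes
    password_hash: bytes
    high_risk: bool = False


def own_hash(password: str, salt: bytes) -> bytes:
    """Our own slow hash (PBKDF2 as a stand-in for something like bcrypt)."""
    return hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, 100_000)


def breach_hash(password: str, salt: str) -> str:
    """Assumed scheme used by the breached site: SHA-1 of salt + password."""
    return hashlib.sha1((salt + password).encode("utf-8")).hexdigest()


def login(accounts: dict, breach: dict, email: str, password: str) -> bool:
    """Verify the login, then check the same plain text against the breach.

    accounts: {email: Account} in our system
    breach:   {email: (salt, hash)} taken from the other site's dump
    """
    account = accounts.get(email)
    if account is None:
        return False
    if not hmac.compare_digest(own_hash(password, account.salt), account.password_hash):
        return False

    # The creds are valid here, and we briefly hold the plain text password,
    # so we can hash it the way the breached site did. No cracking required.
    entry = breach.get(email)
    if entry is not None:
        salt, hashed = entry
        if hmac.compare_digest(breach_hash(password, salt), hashed):
            account.high_risk = True  # reused credentials
    return True


if __name__ == "__main__":
    salt = os.urandom(16)
    accounts = {"alice@example.com": Account(salt, own_hash("hunter2hunter2", salt))}
    breach = {"alice@example.com": ("x9", breach_hash("hunter2hunter2", "x9"))}
    print(login(accounts, breach, "alice@example.com", "hunter2hunter2"))
    print(accounts["alice@example.com"].high_risk)
```

This version only checks the breach after a successful login, since that's the point at which a match actually means the credentials are reused on your service.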

Well firstly, you could simply flag the account as higher risk at that time. For example, perhaps they can browse around your online store but not order anything or view account settings until they verify their identity. Or depending on the organisation's tolerance for potentially pissing off legitimate customers, immediately after login you prompt them to do a password reset. There are options, not all of them pretty and subject to some pretty major other issues.

One of those issues is that this isn't particularly proactive - you need to wait until the customer (or someone else with their creds) logs in. The other one - and this is a biggy - is that this can have serious overhead. You're now saying "hey, let's create hashes of the password and check other breached systems" but how many other breached systems? Do you just do recent ones where the data is circulating? Or heaps of them, some of which have slow hashing algorithms and when done en masse result in a lot of overhead on your own system?

Alternatively, you run this in the background asynchronously to everything else the user is presently doing to log in. Maybe it takes a minute, but once executed it flags the account and you fall back to the aforementioned position of challenging for verification a little later on in the session before something important happens. There are a lot of different ways you can slice this.
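Here's one way that background approach might look, as a sketch only. The thread pool, the in-memory `flagged` set and the shape of the `breaches` list are all assumptions for illustration, and the breach hashes are unsalted to keep it short; a real system would more likely use a job queue and persist the flag against the account.

```python
import hashlib
import threading
from concurrent.futures import Future, ThreadPoolExecutor

executor = ThreadPoolExecutor(max_workers=2)
flagged = set()            # emails that need extra verification
flagged_lock = threading.Lock()


def md5_hex(password: str) -> str:
    return hashlib.md5(password.encode("utf-8")).hexdigest()


def sha1_hex(password: str) -> str:
    return hashlib.sha1(password.encode("utf-8")).hexdigest()


def matches_any_breach(email: str, password: str, breaches: list) -> bool:
    """breaches: list of (hash_function, {email: hash}) pairs, one per breach."""
    for hash_fn, records in breaches:
        if records.get(email) == hash_fn(password):
            return True
    return False


def check_in_background(email: str, password: str, breaches: list) -> Future:
    """Kick off the breach comparison without holding up the login response."""
    def task() -> None:
        if matches_any_breach(email, password, breaches):
            with flagged_lock:
                flagged.add(email)
    return executor.submit(task)


def needs_verification(email: str) -> bool:
    """Call this later in the session, before anything important happens."""
    with flagged_lock:
        return email in flagged


if __name__ == "__main__":
    breaches = [(md5_hex, {"alice@example.com": md5_hex("reused-pass")})]
    check_in_background("alice@example.com", "reused-pass", breaches).result()
    print(needs_verification("alice@example.com"))
```

The login path only pays for submitting the job. The flag is consulted at the point where you'd challenge for verification, for example just before checkout or a change to account settings.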

Summary

This is not exhaustive and it was literally me sitting down dumping many of the thoughts and discussions I've had over the years, but hopefully it gives you an idea of how much more complex this issue is than it first appears. When you next get one of those emails saying "you've been pwned but we can't tell you where", understand that there are all sorts of reasons why you can't be told and indeed why you may only be learning about it long after the incident.