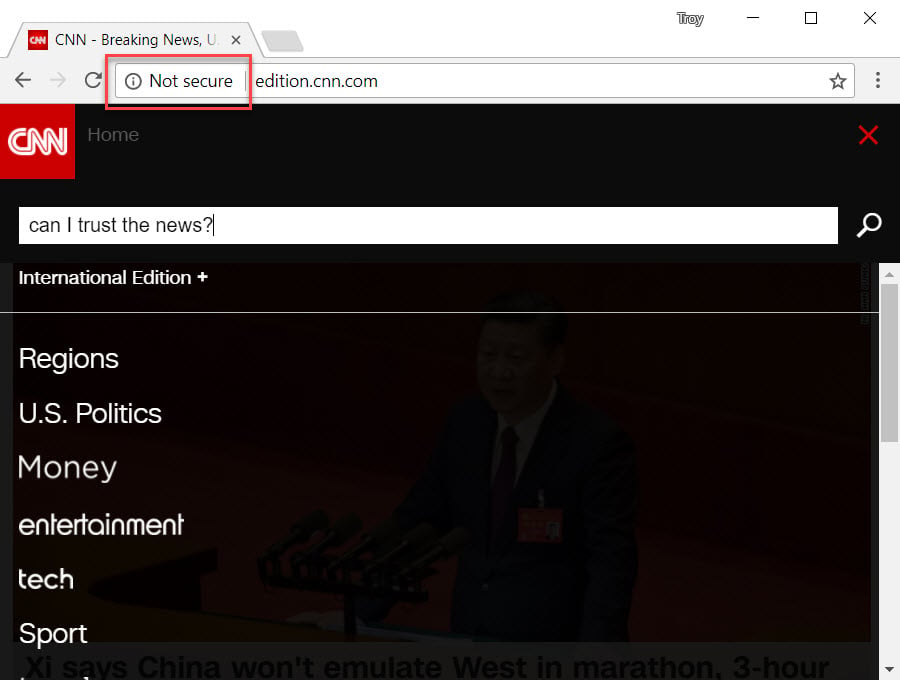

It's finally time: the pendulum is swinging further towards the "secure by default" end of the scale than it ever has before. At least insofar as securing web traffic goes, because as of this week's launch of Chrome 62, any website with an input box is now doing this when served over an insecure connection:

It's not doing it immediately for everyone, but don't worry, it's coming very soon even if it hasn't yet arrived for you personally and it's going to take many people by surprise. It shouldn't though because we've known it's coming for quite a while now starting with Google's announcement back in April. That was then covered pretty extensively by the tech press as well as on this blog where I wrote about how life is about to get a lot harder for websites without HTTPS. Then back in August, Google started emailing site owners and made it very clear what was coming:

New warnings being sent out now for sites that collect ANY info via a <form> on http. Starting October 2017. pic.twitter.com/4EbahDEfRA

— Marie Haynes (@Marie_Haynes) August 17, 2017

But even before that, back in Jan we saw both Chrome and Firefox starting to flag any page with login or credit card fields as "Not secure" so this is just continuing that march. In fact, back at that time I wrote about how HTTPS adoption has reached the tipping point and I pointed to a range of facts supporting that, including the fact that in August last year, 14% of the Alexa top 1 million sites were now forcing HTTPS. But that wasn't the headline figure, rather it was the rate of change and 12 months later, that number was now 31%. Yep, more than doubled in a year.

This will only come as a surprise to folks who haven't been paying attention. Either that or those who, against all evidence, continue to argue that HTTPS is unnecessary. In August, I highlighted how SEO "experts" were advising customers against HTTPS based on fundamentally flawed reasoning. Fortunately, even these guys are seeing the light and realising that HTTPS is, in fact, now somewhat of a necessity. However, doing it right can be more difficult than many people think:

*Attempting* to be https now ? pic.twitter.com/awbqM9zoem

— Deborah Kay (@debbiediscovers) September 1, 2017

Well, it can be more difficult but it can also be fundamentally simple. In this post I want to detail the 6-step "Happy Path", that is the fastest, easiest way you can get HTTPS up and running right. Let's dive into it!

1. Get a Free Cert

This is the first thing most people think of when it comes to HTTPS - they need a certificate. There was a day when this would cost money and you'd pay a large company such as Comodo a fistful of dollars for them to issue you a cert, but those days are now behind us. There's now two primary routes you can go in my "Happy Path" and I want to detail both of those here:

Firstly, there's Let's Encrypt. They've had an enormously positive impact on HTTPS adoption by making certificates available not just for free, but in an automated fashion that takes a lot of the legwork out of installing a cert on your site.

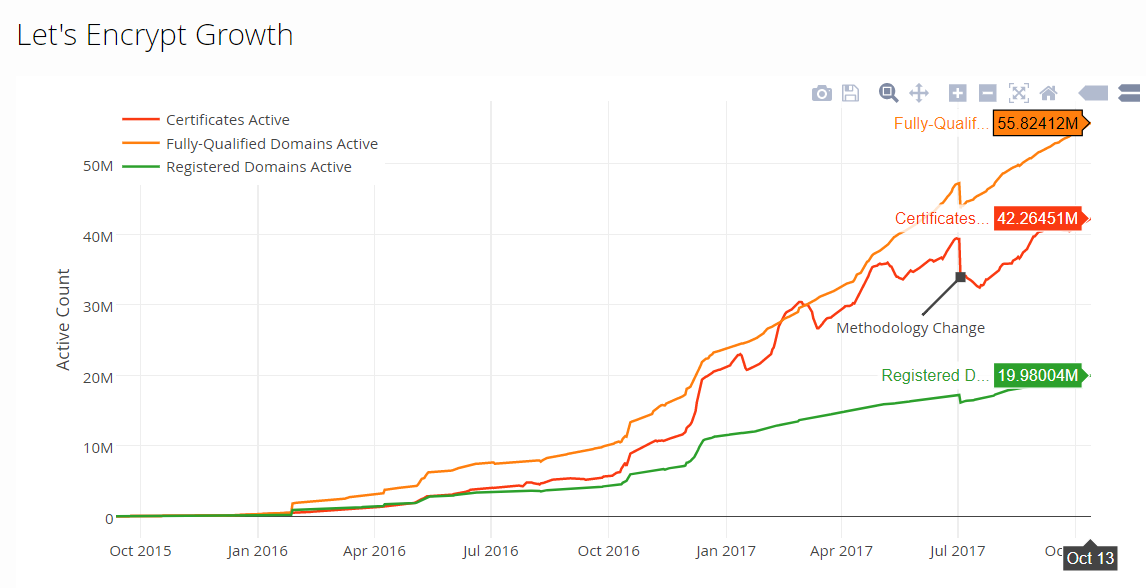

There was a time when Let's Encrypt was a newcomer and understandably, some people were a little reserved about using them. But check out just how far they've come since just the start of last year:

The only real vocal criticism of Let's Encrypt has (unsurprisingly) come from commercial certificate authorities. I wrote about this at length in July when I talked about the perceived value of extended validation certs, commercial CAs and phishing. That's essential reading if you've previously been hit with arguments ranging from "you need an EV cert" to "free certs aren't as good" and even "Let's Encrypt helps phishing sites". Read that now if you're not already across these issues and the FUD involved.

The one major practical barrier to Let's Encrypt is lack of first class support in PaaS and SaaS models. Last year I wrote about what's involved in loading a Let's Encrypt certificate into an Azure app service and I concluded that as it stands, it's a risky model. Still to this day, I would not use Let's Encrypt in this way, there's a much better way...

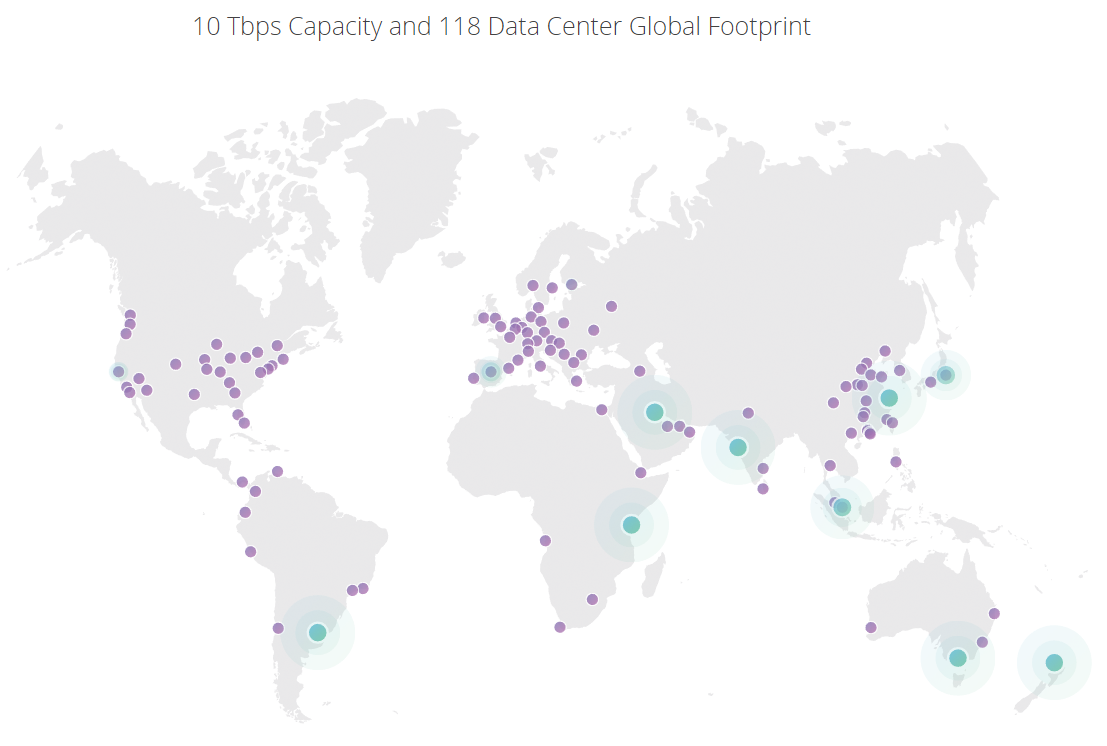

That way is Cloudflare. I love the service that Cloudflare provides because it's not just about HTTPS. Like Let's Encrypt, it's free if all you want is a cert on your site and it's also highly automated, but where it differs is that Cloudflare is a globally distributed CDN with 118 edge nodes around the world:

What this means is that they don't just do free certs, they can also cache content, optimise traffic and block threats such as DDoS attacks. They can do this because your traffic literally routes through their infrastructure. Now on the one hand, this worries some people and frankly, those concerns are largely unfounded and I address them in my post on unhealthy security absolutism. On the other hand, when an intermediary has the ability to modify your traffic on the fly, you can do some enormously cool things and I'm going to keep coming back to those throughout the remainder of this post.

My strong recommendation is Cloudflare not because there's anything wrong with Let's Encrypt - quite the opposite, I think they're awesome - but rather because there's so much more value to be had from a reverse proxy. I run this blog through them using their free service and I also run Have I been pwned (HIBP) through Cloudflare which has made an enormously positive impact on the sustainability of the site.

Ok, so that's certs, but now we need to make some changes on the site too so let's jump into that next.

2. Add a 301 "Permanent Redirect"

What we need to start doing now is ensuring that whenever a request comes in over the HTTP scheme, the site tells the browser that instead it must request that same content over the secure scheme. We do that with the HTTP 301 response code which indicates that the resource has "Moved Permanently". This is accompanied by a "Location" response header which indicates the new URL the browser should issue the request to.

For example, imagine making an insecure request to this blog:

GET http://www.troyhunt.com/ HTTP/1.1

When my site receives that request, it will respond like this:

HTTP/1.1 301 Moved Permanently

Location: https://www.troyhunt.com/

The browser then turns around and makes a near-identical request to the first one, albeit over the secure scheme:

GET https://www.troyhunt.com/ HTTP/1.1

How you implement this depends on your framework of choice. For example, in ASP.NET you could use a URL Rewrite rule. This is a simple configuration that can go into your web.config and constitutes a "no code" fix. Obviously, you're going to tackle a PHP or a Node site differently, but you get the idea.
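For a Python site, the same idea can be sketched as a small piece of WSGI middleware. This is a minimal illustration rather than a production-ready redirect: `https_redirect`, `demo_app` and `fake_start_response` are made-up names, and it assumes the `Host` header is trustworthy and carries no explicit port.

```python
def https_redirect(app):
    """Wrap a WSGI app so plain-HTTP requests get a 301 to the HTTPS URL."""
    def middleware(environ, start_response):
        if environ.get("wsgi.url_scheme") == "https":
            # Already secure: hand the request to the real application.
            return app(environ, start_response)
        host = environ.get("HTTP_HOST", environ.get("SERVER_NAME", ""))
        path = environ.get("PATH_INFO", "/")
        query = environ.get("QUERY_STRING", "")
        location = "https://" + host + path + ("?" + query if query else "")
        start_response("301 Moved Permanently",
                       [("Location", location), ("Content-Length", "0")])
        return [b""]
    return middleware


# Demo with a stand-in app and a fake start_response (both hypothetical).
def demo_app(environ, start_response):
    start_response("200 OK", [("Content-Type", "text/plain")])
    return [b"hello"]

captured = {}

def fake_start_response(status, headers, exc_info=None):
    captured["status"] = status
    captured["headers"] = dict(headers)

site = https_redirect(demo_app)
site({"wsgi.url_scheme": "http", "HTTP_HOST": "www.troyhunt.com", "PATH_INFO": "/"},
     fake_start_response)
print(captured["status"], captured["headers"]["Location"])
# prints: 301 Moved Permanently https://www.troyhunt.com/
```

The redirect keeps the path and query string intact so that deep links land on the same content over the secure scheme.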

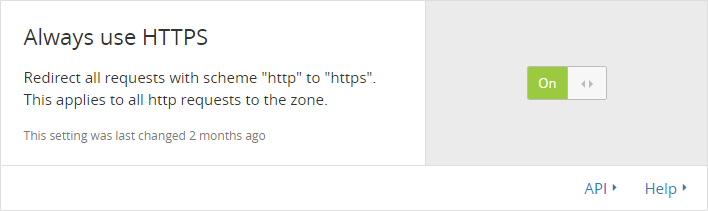

An even cleaner way to tackle this is with Cloudflare. Remember that bit where I said there's a bunch of additional value to be had from a reverse proxy? How's this for an easy fix:

Toggling this switch allows Cloudflare to add the 301 response header on the fly so that you don't need to modify the site itself. It doesn't matter what tech stack you're running on, you simply hit the button and it's job done.

The 301 is necessary, but there's also a couple of problems. Firstly, you're forcing the user's browser to make an extra request. Every single time they attempt to load any resource on the site insecurely, the browser is going to need to make a subsequent request to the secure scheme after the 301 comes back so it's not great in terms of performance. But more serious than that from a security perspective is that the first request - the insecure one - can be intercepted and modified. This is exactly the sort of thing we're trying to protect people from in the first place and whilst every request after that first one that gets 301'd is good, we still need to protect that first request as well. Which brings us to HSTS.

3. Add HSTS

HSTS stands for "HTTP Strict Transport Security" - and it's awesome! I've written about it before in depth so I won't repeat everything here but for the sake of completeness in this post, we'll go through it again briefly.

The 301 situation left us with a risk in that any insecure requests could still be read by someone with access to the traffic. Sensitive data like any cookies sent with the request could be read and then the response itself could be manipulated. It's sub-optimal. HSTS changes that (to a degree) by way of a simple response header such as the one on this very blog:

Strict-Transport-Security: max-age=31536000

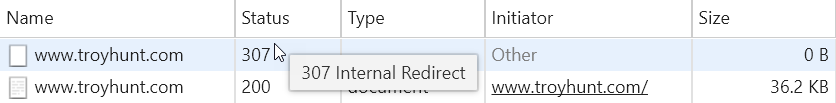

When returned over a secure connection, this header tells the browser that for the next 31,536,000 seconds (that's one year's worth of seconds), it may not make an insecure request to the site. If (for whatever reason), the browser then makes an insecure request - say by me explicitly typing http://www.troyhunt.com/ into the address bar - this happens:

What you're seeing here is an HTTP 307 "Internal Redirect" followed by a secure request. This is the browser upgrading the request internally before sending it out over the wire. The really neat thing about this is that it avoids the problem we just had with the 301 where we could keep issuing insecure requests. Or does it?

The remaining problem with this model is known as "Trust On First Use" or TOFU; the browser needs to get one good request without it being intercepted in order to get the response header in the first place. This is where preload comes into play and you can see it in action on HIBP as follows:

Strict-Transport-Security: max-age=31536000; includeSubDomains; preload

Now this looks very similar to before except for two new directives:

- includeSubDomains does exactly what it sounds like it does

- preload enables browser vendors to bake it into the browser itself

That second item is the key because it means you can then head over to hstspreload.org and submit the site for preloading. That service is run by the Chromium Project and all the major browser manufacturers use sites submitted there to ensure that their browsers can't serve any content from those domains insecurely. Ever!
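The TOFU gap, and what preload does about it, can be sketched in a few lines of Python. This is a toy model and not how any real browser stores HSTS state: `HstsStore`, `note_header` and `upgrade` are made-up names for illustration, and the sketch ignores includeSubDomains entirely.

```python
import time
from urllib.parse import urlsplit


class HstsStore:
    """Toy model of the HSTS state a browser keeps (hypothetical, simplified)."""

    def __init__(self, preloaded=()):
        self.preloaded = set(preloaded)  # hosts baked into the browser
        self.expiry = {}                 # host -> time the HSTS entry lapses

    def note_header(self, host, max_age, now=None):
        # Called when a response over HTTPS carried Strict-Transport-Security.
        now = time.time() if now is None else now
        self.expiry[host] = now + max_age

    def upgrade(self, url, now=None):
        # Return the URL the browser would actually put on the wire.
        now = time.time() if now is None else now
        parts = urlsplit(url)
        host = parts.hostname or ""
        known = host in self.preloaded or self.expiry.get(host, 0) > now
        if parts.scheme == "http" and known:
            return parts._replace(scheme="https").geturl()
        return url


store = HstsStore()
print(store.upgrade("http://www.troyhunt.com/"))   # first visit: still http://
store.note_header("www.troyhunt.com", 31536000)
print(store.upgrade("http://www.troyhunt.com/"))   # now upgraded to https://

preloaded = HstsStore(preloaded={"haveibeenpwned.com"})
print(preloaded.upgrade("http://haveibeenpwned.com/"))  # https:// on first visit
```

The first call is the window an attacker can still exploit; a preloaded host never has that window because the browser already knows about it before any request is made.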

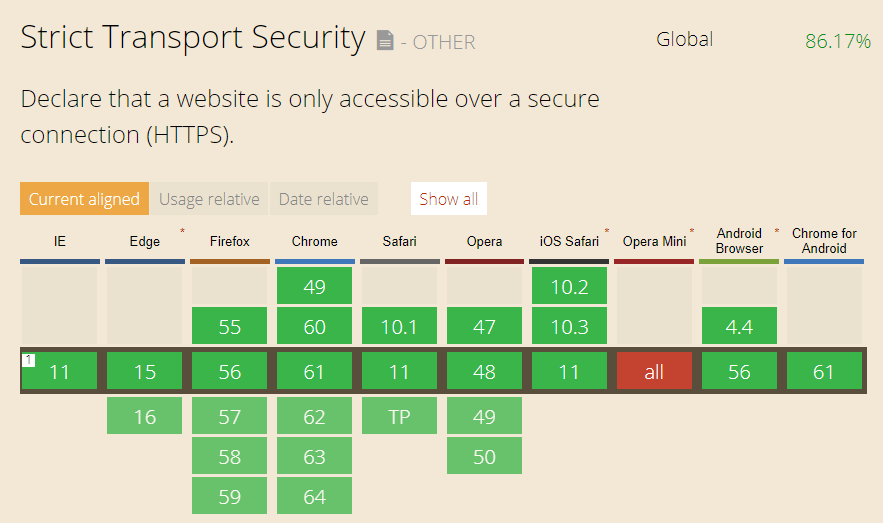

And just in case you're wondering about browser support for HSTS, it's very broad:

Now, why don't I preload this very blog? Because amongst the requirements for preloading is this one:

The includeSubDomains directive must be specified

Which is problematic because I need to run hackyourselffirst.troyhunt.com over the insecure scheme so that when I run my workshops I can demonstrate what goes wrong when you don't get your HTTPS right! Ideally, I need to put this on a standalone domain so that I can get troyhunt.com preloaded and certainly that's on the cards.
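As a rough illustration of that check, here's a small Python sketch that parses an HSTS header value and reports which of the two directives discussed above are missing. `parse_hsts` and `missing_for_preload` are hypothetical helper names, and this only looks at includeSubDomains and preload; the preload site has further requirements that this sketch doesn't attempt to cover.

```python
def parse_hsts(value):
    """Split a Strict-Transport-Security value into {directive: argument}."""
    directives = {}
    for part in value.split(";"):
        part = part.strip()
        if not part:
            continue
        name, _, arg = part.partition("=")
        # Directive names are case-insensitive, so normalise to lower case.
        directives[name.strip().lower()] = arg.strip().strip('"')
    return directives


def missing_for_preload(value):
    """List the directives (of the two checked here) absent from the header."""
    found = parse_hsts(value)
    return [name for name in ("includesubdomains", "preload") if name not in found]


print(missing_for_preload("max-age=31536000"))
# prints: ['includesubdomains', 'preload']
print(missing_for_preload("max-age=31536000; includeSubDomains; preload"))
# prints: []
```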

In terms of implementing HSTS, it's just a response header you'll need to return upon an incoming request to the site. For the ASP.NET folks, check out NWebsec on NuGet which is made by fellow developer security MVP André Klingsheim. That makes it dead simple to set up via the web.config like so:

<strict-Transport-Security max-age="365" includeSubdomains="true" preload="true" />

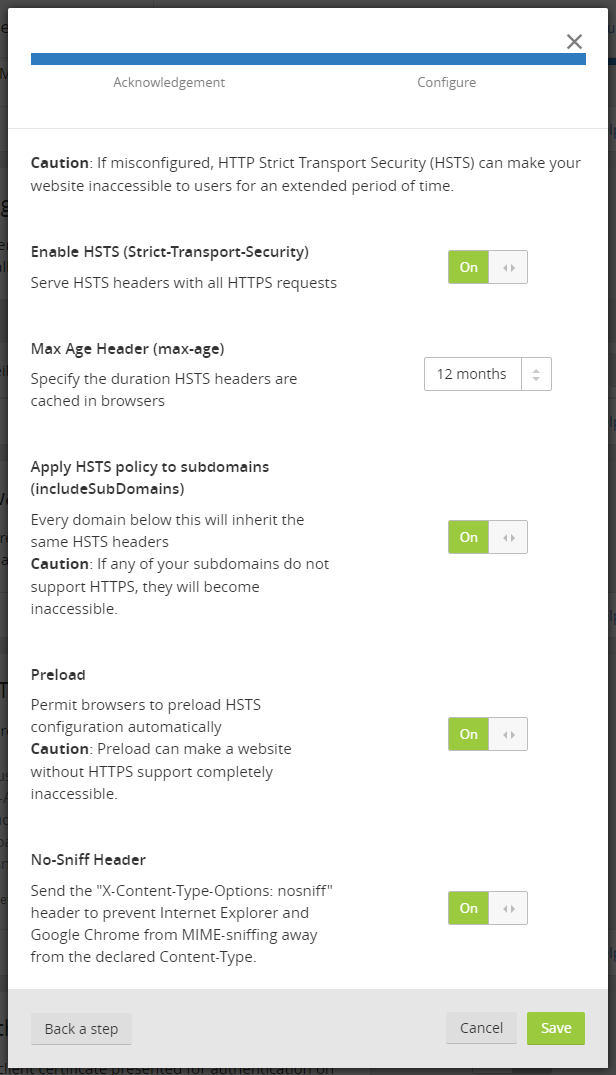

Or just like with the 301 redirects, you can easily add it via a web interface if you're routing your traffic through Cloudflare:

Now you definitely want to be confident that you're always going to be serving traffic over HTTPS all of the time once you go down this route (especially with preload) but frankly, that's where you should be by now anyway.

Oh - and just as a very timely example of why HSTS with preload is important, the security news earlier this week was dominated by the KRACK attack against wifi networks. On that page, Mathy shows how match.com traffic could be intercepted using a combination of his WPA2 attack and sslstrip. This is only possible because there is no preloaded HSTS (even HSTS without preload would still save many returning visitors). With this in place, the traffic can't be downgraded to force HTTP connections as the browser simply won't allow it. Frankly, the whole match.com page load experience is pretty terrible:

https://t.co/jYTfklaenQ was the perfect site to demonstrate the KRACK Attack on - 6 redirects with 5 insecure requests & no HSTS anywhere! pic.twitter.com/GWwRXmr8y6

— Troy Hunt (@troyhunt) October 16, 2017

For all the press this exploit has received over the last couple of days, it's amazing how simple it is to secure individual websites against it, you just need traffic served securely and a single response header plus preload.

4. Change Insecure Scheme References

And now for the hard part. Actually, let me talk about what is traditionally the hard part then I'll talk about how to make it easy.

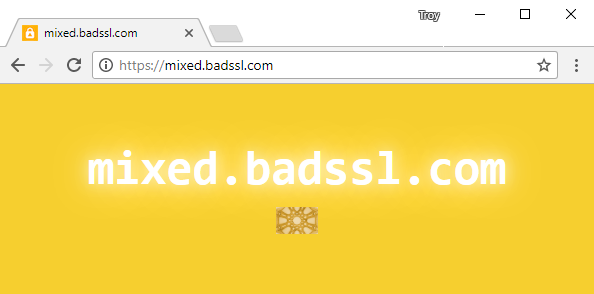

Once you go HTTPS, everything on the page must be served securely. Remember the SEO bloke in his pyjamas earlier who told people not to use HTTPS then deleted my comment saying you should use HTTPS then deleted his video saying you shouldn't use HTTPS then went and implemented HTTPS on his own site but got browser warnings? Visitors to his site were loading the page over HTTPS but didn't get a padlock, didn't get the "Secure" text next to the address bar and didn't get the HTTPS scheme represented in green. In fact, the browser security indicators looked exactly like this example from badssl.com:

This is simply because the image you see on the page above was embedded insecurely. (Incidentally, the image still loads as it's "passive content" in that it can't change anything, but try it with "active content" like a script tag and it will be blocked from loading in the first place.) Here's the root cause:

<img class="mixed" src="http://mixed.badssl.com/image.jpg" alt="HTTP image">

It's simply embedding the image insecurely and there are many different ways to easily fix this:

<img class="mixed" src="https://mixed.badssl.com/image.jpg" alt="HTTP image">

<img class="mixed" src="//mixed.badssl.com/image.jpg" alt="HTTP image">

<img class="mixed" src="image.jpg" alt="HTTP image">

Any one of these will immediately solve the problem... with that one image embedded in that one location. But that's not how web pages operate, rather they have a raft of images, style sheets, JavaScript files and all sorts of other content embedded not just once on the one page, but all over the place. When moving to HTTPS, these need to be fixed which yes, means a lot of changes.
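If you do go hunting for these by hand, a small script can at least find them for you. Here's a minimal Python sketch using only the standard library; `InsecureRefFinder` and `find_insecure_refs` are made-up names, the `app.js` and `style.css` paths in the sample are invented for the demo, and it only looks at `src` attributes and `<link href>` so it will miss things like URLs inside CSS or inline scripts.

```python
from html.parser import HTMLParser


class InsecureRefFinder(HTMLParser):
    """Collect embedded resources that are referenced over http://."""

    def __init__(self):
        super().__init__()
        self.found = []

    def handle_starttag(self, tag, attrs):
        for name, value in attrs:
            # src embeds content on any tag; href only embeds on <link>.
            embeds = name == "src" or (tag == "link" and name == "href")
            if embeds and value and value.lower().startswith("http://"):
                self.found.append((tag, name, value))


def find_insecure_refs(html):
    finder = InsecureRefFinder()
    finder.feed(html)
    return finder.found


page = """
<img class="mixed" src="http://mixed.badssl.com/image.jpg" alt="HTTP image">
<img src="https://mixed.badssl.com/image.jpg">
<script src="//mixed.badssl.com/app.js"></script>
<link rel="stylesheet" href="http://mixed.badssl.com/style.css">
<a href="http://example.com/">a plain link, not embedded content</a>
"""
for tag, attr, url in find_insecure_refs(page):
    print(tag, attr, url)
```

Only the first image and the style sheet are reported: the HTTPS and scheme-relative references are already fine, and an ordinary hyperlink isn't embedded content.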

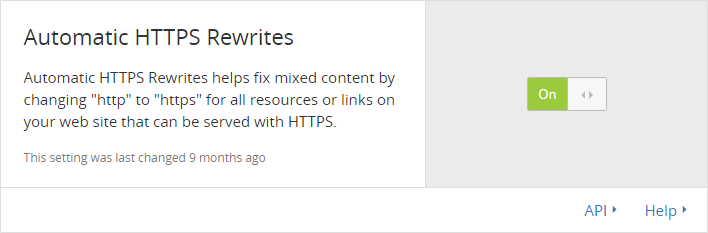

But this is where Cloudflare can help again (you seeing a theme here?) and it does so with what they call Automatic HTTPS Rewrites:

The explanation in the image above is pretty self-explanatory and once again, when an intermediary can control the traffic then they can do some pretty awesome things with it. This is more intelligent than simply changing every HTTP source to HTTPS though:

Only URLs that are known to support HTTPS will be rewritten. We use data from EFF’s HTTPS Everywhere and Chrome’s HSTS preload list, among others, to identify which domains support HTTPS.

This is great and it helps enormously, but whether you're manually fixing insecure scheme references yourself or delegating the work to Cloudflare, there's another problem: what happens if another service you're using makes an insecure request? I mean you might embed, say, Disqus securely on your site but what happens if their JavaScript which you're embedding from their site then loads an image over HTTP? There's another easy answer for that one, and it involves another header.

5. Add The upgrade-insecure-requests CSP

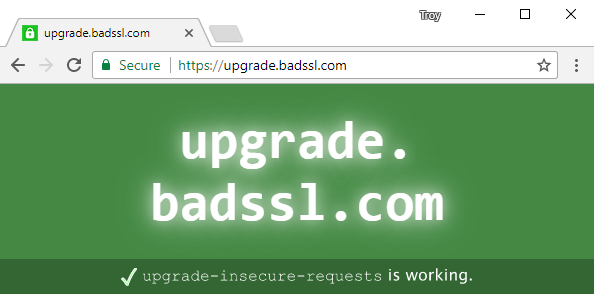

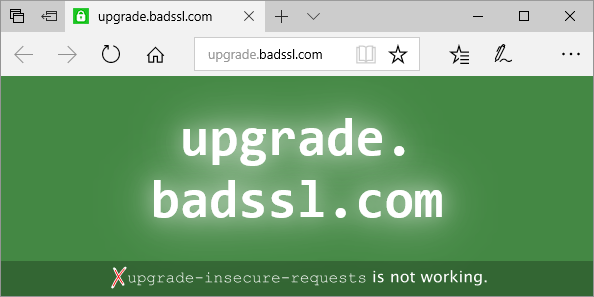

Let's go back to badssl.com for a moment and play with the "upgrade" demo:

It looks normal, right? But check out the HTML source:

<img id="http-vs-https" src="http://upgrade.badssl.com/upgrade-test/upgrade-test.svg" title="This is an image with an HTTP source location specified. If upgrade-insecure-requests is working, the source should be rewritten to HTTPS. The image will vary depending on the outcome.">

I love the title attribute on that image because it saves me the work of explaining it :) This is all achieved by virtue of a Content Security Policy response header, otherwise known as a CSP:

Content-Security-Policy: upgrade-insecure-requests

What makes this awesome is that even if you screw up every single reference for embedded content on your site, this header will automatically fix it for you. Of all the things I'm imparting in this blog post, this is the one that I find is least frequently known and has the greatest impact on how easy it can be to implement HTTPS.
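If you do control your response headers, adding this one is a tiny change. As a hedged sketch for a Python site, here's WSGI middleware that appends the header to every response; `add_upgrade_csp` is a made-up name, and this version assumes the app isn't already sending its own Content-Security-Policy header that you'd want to merge with.

```python
def add_upgrade_csp(app):
    """Wrap a WSGI app so every response carries upgrade-insecure-requests."""
    def middleware(environ, start_response):
        def patched_start_response(status, headers, exc_info=None):
            # Copy the app's headers and append the CSP before passing them on.
            headers = list(headers) + [
                ("Content-Security-Policy", "upgrade-insecure-requests")
            ]
            return start_response(status, headers, exc_info)
        return app(environ, patched_start_response)
    return middleware
```

Because it wraps the whole application, every page gets the policy without touching a single template.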

But as I alluded to at the end of the previous section on updating all your links, the joy of this CSP is that it can also fix downstream dependencies loaded insecurely by other services. Last year I wrote about how Disqus caused browser warnings to be shown on my blog and per the earlier example, it was simply because they screwed up and started embedding content insecurely in a location well beyond my control.

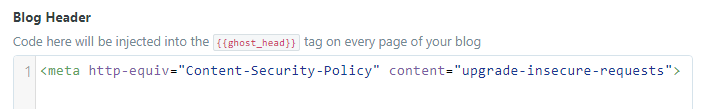

My website actually runs on Ghost Pro which is a hosted SaaS model and that meant I had zero control over headers - I couldn't even add the CSP via Cloudflare. However, you can also embed a CSP as a meta tag and if you view the source of this very page, you'll see the following:

<meta http-equiv="Content-Security-Policy" content="upgrade-insecure-requests">

I actually use their Code Injection feature to add this in so I didn't even need to change my template:

And just to add another Cloudflare angle to this, it's not yet available at the time of writing, but Cloudflare Workers should make this a breeze:

Playing with @Cloudflare workers - how about adding a CSP dynamically on the edge with no code on the origin: https://t.co/JIioKpmnIi

— Troy Hunt (@troyhunt) September 30, 2017

I'll certainly write more about this feature once it lands because it's going to open up a world of opportunities and make features like a CSP in response headers dead simple to implement.

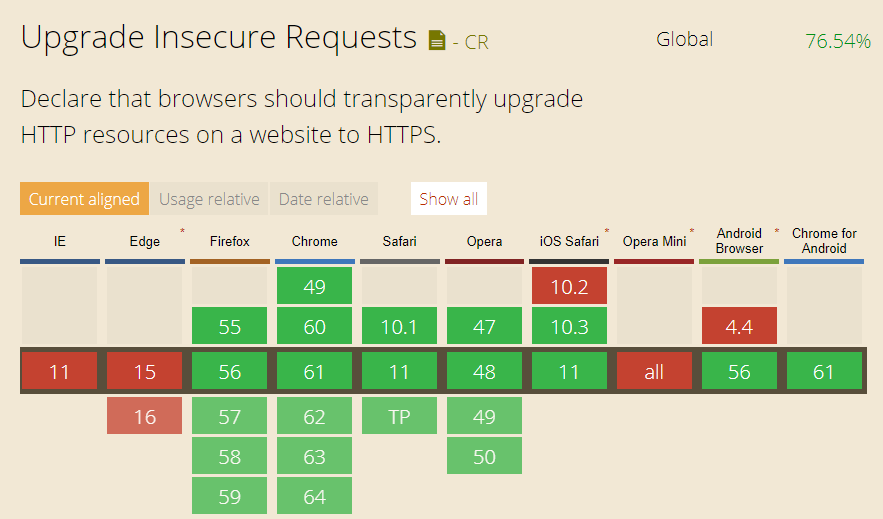

So, upgrade-insecure-requests is an awesome approach - what's not to love?! Well...

This is frustrating, particularly for someone as Microsoft aligned as myself, and believe me when I say I continually raise this issue at every opportunity. People using Microsoft's browsers simply won't get the benefit of upgrade-insecure-requests so when we load up that test site in Edge, we see this:

Edge is actually missing the padlock which is usually present on an HTTPS page which loads all child content securely:

This is one of the key reasons why it's still so important to get embedded content referenced over the secure scheme. However, there's another angle to all this which can help you complete that happy path and yes, it even makes for a happy experience on Edge!

6. Monitor CSP Reports

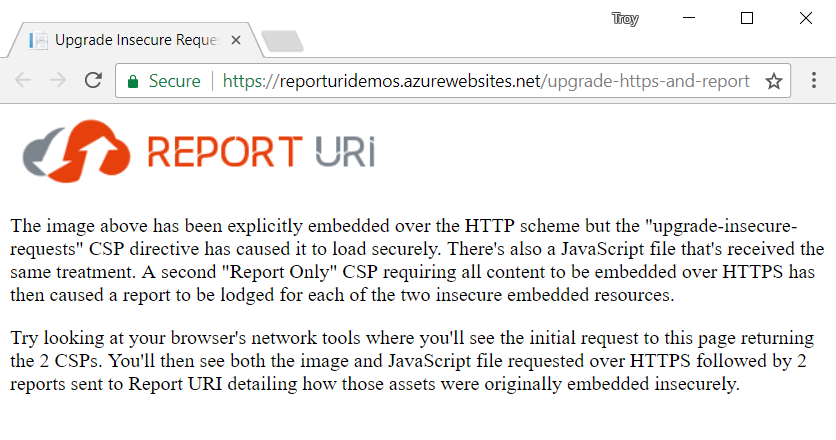

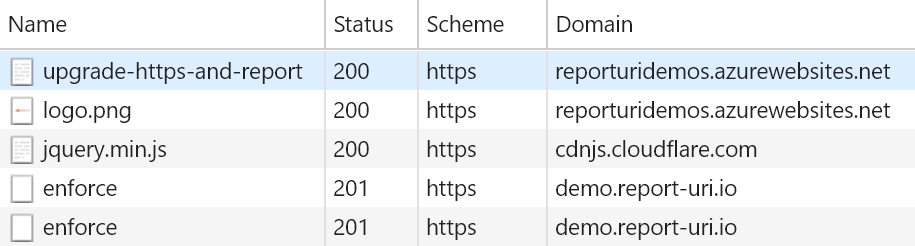

One of the really neat features of content security policies is that you can configure them to report violations back to a URI of your choosing. This is (currently) achieved by using the report-uri directive and it means that when a CSP is violated, you can get a neat report of what went wrong submitted directly by the user's browser. You can see precisely what this looks like via this little demo I set up:

Let's break down what's happening here: the page is obviously requested over HTTPS but the image and JavaScript are both embedded insecurely like this:

<img src="http://reporturidemos.azurewebsites.net/images/logo.png" />

<script src="http://cdnjs.cloudflare.com/ajax/libs/jquery/3.2.1/jquery.min.js"></script>

Yet we see green bits and a padlock in the address bar which means that everything is secure. It's secure because of the upgrade-insecure-requests CSP discussed above:

Content-Security-Policy: upgrade-insecure-requests

So far that's nothing new, but now check out the other header I've added:

Content-Security-Policy-Report-Only: default-src https:;report-uri https://demo.report-uri.io/r/default/csp/enforce

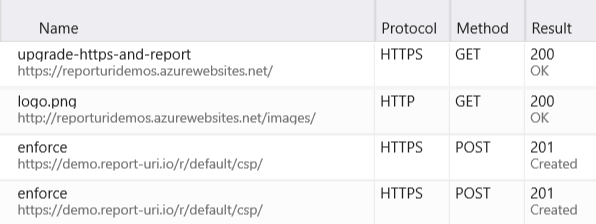

This is a "report only" CSP which means that it doesn't enforce the policy, it merely reports on violations. The content is then set to declare that default-src (so basically the default policy for everything) requires that content be loaded over HTTPS. When an asset is found not loaded over HTTPS, a report is issued to the address after the report-uri directive (I'll come back to the significance of the demo.report-uri.io host name a bit later). By using a CSP in conjunction with a CSPRO (report only), we can get the benefit of both the insecure requests being upgraded and then reported. What that means is that you see the following network requests when loading the page:

You'll see the initial request for the page itself followed by a request for the image which was embedded over HTTP yet had the request upgraded to HTTPS and then one for jQuery, again embedded over HTTP which was then upgraded. Then there's the two "enforce" requests which we're seeing because that word is the last part of the path in the report-uri directive earlier on. There's 2 requests because every single CSP violation causes 1 to be fired. These are POST requests and they contain a JSON payload explaining precisely what went wrong:

{

"csp-report":{

"document-uri":"https://reporturidemos.azurewebsites.net/upgrade-https-and-report",

"referrer":"https://www.troyhunt.com/the-6-step-happy-path-to-https",

"violated-directive":"img-src",

"effective-directive":"img-src",

"original-policy":"default-src https:;report-uri https://demo.report-uri.io/r/default/csp/enforce",

"disposition":"report",

"blocked-uri":"http://reporturidemos.azurewebsites.net/images/logo.png",

"line-number":9,

"source-file":"https://reporturidemos.azurewebsites.net/upgrade-https-and-report",

"status-code":0,

"script-sample":""

}

}

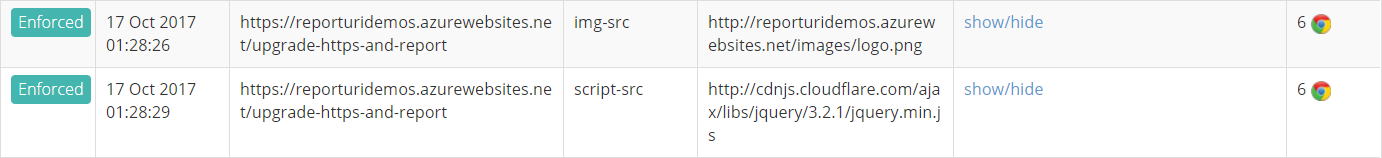

This is the one for the image and we can see from the JSON above that the blocked-uri was an HTTP request made to the image and that the document-uri (the page that caused the violation) was our test page. I also clicked through to that page from this blog post so you see the referrer being the URL of this page too. This has everything you need to now track down the root cause of the mixed content and fix it at the source. And here's another cool thing - the reporting works in Edge too:

Even though neither of Microsoft's browsers understands upgrade-insecure-requests, Edge implements most of the CSP level 2 spec which means it's able to understand (and report on) the CSPRO which demanded HTTPS. No, this won't cause the content to be loaded securely (the image still loaded over HTTP and the script didn't load at all because it's "active content"), but now that you're getting reports you can actually do something about it. Which brings us to the final part of the "Happy Path" - Report URI.

The thing with CSP violation reports is that they can get seriously voluminous. Think about it - you have a site template with a few assets embedded insecurely and each page inherits from that template. Then you have thousands or tens of thousands or however many people coming by the site each day. It's a lot of requests. Furthermore, they're all just the JSON you saw earlier on, you still need to do something useful with that in terms of parsing and reporting on it.
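To give a sense of what "doing something useful" with that JSON involves, here's a minimal Python sketch that boils a pile of raw report bodies down to a count per page, directive and blocked URI. `summarise_reports` is a hypothetical helper and this is nowhere near what a real reporting service does (no storage, no de-duplication by browser, no filtering of noise); it just shows the shape of the problem.

```python
import json
from collections import Counter


def summarise_reports(raw_reports):
    """Count CSP reports by (document-uri, directive, blocked-uri)."""
    counts = Counter()
    for raw in raw_reports:
        try:
            report = json.loads(raw)["csp-report"]
        except (ValueError, KeyError, TypeError):
            continue  # not JSON, or not shaped like a CSP report
        if not isinstance(report, dict):
            continue
        directive = report.get("effective-directive") or report.get("violated-directive")
        counts[(report.get("document-uri"), directive, report.get("blocked-uri"))] += 1
    return counts


sample = json.dumps({"csp-report": {
    "document-uri": "https://reporturidemos.azurewebsites.net/upgrade-https-and-report",
    "violated-directive": "img-src",
    "effective-directive": "img-src",
    "blocked-uri": "http://reporturidemos.azurewebsites.net/images/logo.png",
}})

# Two visitors hit the same problem, plus one junk POST body.
for key, hits in summarise_reports([sample, sample, "not json"]).most_common():
    print(hits, key)
```

Thousands of individual reports collapse into a short list of distinct problems, which is what you actually want to read as a site owner.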

This is where the Report URI service comes in. This is a project stood up by my good friend Scott Helme a few years back (Scott is behind the Alexa Top 1 Million reports I referenced earlier). He makes this service available to anyone who wants to lodge their reports there and you can get into it for free. There's commercial plans available if you want to help Scott support what he does, but the free tier is enough to start working out what's going on in terms of mixed content.

For example, here's a sample report for that demo site:

So now for me as a site owner, I can review the entries on Report URI and see precisely where I've got mixed content on my site. It's all aggregated into the one place which makes it dead simple to review before heading into the code of the site and fixing any broken references. But of course, with upgrade-insecure-requests, things would only break in the Microsoft browsers anyway so the vast majority of people have already had a seamless experience, but their browsers have kindly done the work of letting me know there's a problem anyway.

I love the way this rounds out the "Happy Path": get a free cert, implement the 301 redirect, add HSTS, change your insecure references, use the CSP to fix any of the ones you've missed in non-MS browsers then finally, sit back and watch for any violations by reporting to the free Report URI service. HTTPS doesn't have to be hard, you just have to follow the happy path.

Gotchas and Other Considerations

This can be a super-easy process and for the vast majority of sites out there, you can knock this off in an afternoon if not within an hour. But I don't want to overly trivialise it either and there are certainly various gotchas along the way.

For example, if you run a website like Stack Overflow which is the 55th most traffic'd in the world, things are somewhat trickier. Complex applications running at scale introduce all sorts of other issues.

Even for smaller apps, putting them behind Cloudflare can throw some curve balls. For example, when I moved HIBP behind them I needed to update code that records the client's IP address because the nature of a reverse proxy means I was seeing the address of their edge node. Instead, I needed to look for the CF-Connecting-IP request header they add to the inbound traffic and refer to that instead.
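In Python WSGI terms, that change looks something like the sketch below. `client_ip` is a made-up helper name, and it assumes the origin can only be reached via Cloudflare; if requests can hit the origin directly, anyone can send their own CF-Connecting-IP header and this value shouldn't be trusted.

```python
def client_ip(environ):
    """Prefer the address Cloudflare reports, falling back to the socket peer."""
    # WSGI exposes the CF-Connecting-IP request header under this key.
    return environ.get("HTTP_CF_CONNECTING_IP") or environ.get("REMOTE_ADDR", "")


# Addresses below are from the reserved documentation ranges.
print(client_ip({"REMOTE_ADDR": "198.51.100.20", "HTTP_CF_CONNECTING_IP": "203.0.113.7"}))
# prints: 203.0.113.7
print(client_ip({"REMOTE_ADDR": "198.51.100.20"}))
# prints: 198.51.100.20
```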

As for HSTS, it's awesome but it's also a bit of a one-way street, especially once using preload. You should use HSTS and you should preload, but I wouldn't begrudge anyone wanting to give it a few weeks in between going HTTPS, adding HSTS with a short max age then eventually preloading.

There are other aspects of implementing HTTPS comprehensively I haven't touched on here either. For example, flagging cookies as "secure" so they can't be passed over an insecure connection and also updating other references to the site to use HTTPS. Think about things like social media channels and email footers - you really want them referencing the secure scheme as well. Mind you, once HSTS is rolled out with preload that matters a lot less, but you still need browser vendors to bake in that preload list, roll out updates and customers to actually take them.
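On the cookie point, most frameworks have a flag for this. As a framework-free illustration, here's a short Python sketch using the standard library's `http.cookies`; the cookie name and value are invented, and `secure_cookie_header` is a hypothetical helper.

```python
from http.cookies import SimpleCookie


def secure_cookie_header(name, value):
    """Build a Set-Cookie value flagged so browsers only send it over HTTPS."""
    cookie = SimpleCookie()
    cookie[name] = value
    cookie[name]["secure"] = True  # never sent over an insecure connection
    return cookie[name].OutputString()


print(secure_cookie_header("session", "abc123"))
```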

A classic argument against HTTPS is that every external service you embed must also support the secure scheme. It's extremely rare that this is a problem in this day and age, but there was a time when the likes of Google Adwords didn't and obviously that would present all sorts of dramas. Do check those dependencies if you're at all uncertain they can be embedded securely.

Cyclical redirects is another one I've seen a few times and some odd things can happen there. I've seen cases where someone is redirecting from HTTP to HTTPS but the HTTPS call then redirects back to another path on HTTP. It's a screwy scenario usually solved pretty quickly, but especially once combined with things like a canonical URL redirect (i.e. troyhunt.com redirects to www.troyhunt.com), then it can catch people out.
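One way to reason about these is to write down which URL redirects where and walk the chain. The Python sketch below does exactly that over an in-memory map rather than real HTTP requests; `follow_redirects` is a made-up name and the example.com URLs are placeholders.

```python
def follow_redirects(start, redirects):
    """Walk a {url: location} map; return (chain, True) if it loops."""
    chain = [start]
    url = start
    while url in redirects:
        url = redirects[url]
        if url in chain:
            # We've been here before, so the browser would go round in circles.
            return chain + [url], True
        chain.append(url)
    return chain, False


# Healthy: HTTP -> HTTPS -> canonical www host, then it stops.
healthy = {
    "http://troyhunt.com/": "https://troyhunt.com/",
    "https://troyhunt.com/": "https://www.troyhunt.com/",
}
print(follow_redirects("http://troyhunt.com/", healthy))

# Broken: the HTTPS response sends the browser straight back to HTTP.
broken = {
    "http://example.com/": "https://example.com/",
    "https://example.com/": "http://example.com/",
}
print(follow_redirects("http://example.com/", broken))
```

The healthy chain ends after two hops with no loop flagged, whereas the broken one comes back to its starting URL and is reported as cyclical.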

And finally, if you're going down that Cloudflare route as I've so emphatically suggested, I'd begin by making sure that everything plays nice over HTTP first. Give the site a comprehensive test and look for those little gotchas like the client IP I mentioned earlier first. It's a lot easier to iron those out first and then do the HTTPS thing than it is to try and troubleshoot everything at once.

Further Resources

Even with insurmountable volumes of evidence speaking to why HTTPS is important, there remain naysayers. Some of the comments on the blog posts I've linked to still astound me with some folks even suggesting it's a Google conspiracy theory. Frankly, I don't care because this isn't a negotiation; you either get HTTPS or your users get told the site is insecure. Don't bother arguing that you don't need HTTPS, invest that energy reading doesmysiteneedhttps.com instead.

A couple of additional favourite resources for those naysayers are istlsfastyet.com and HTTP vs HTTPS. The latter in particular is a favourite as it totally turns conventional wisdom about the speed cost of HTTPS on its head. No, it's not fair and no, I don't care because as I've said before, I just wanna go fast.

And finally, I'll leave you with a resource of my own which has proven pretty popular:

So here it is - my latest @pluralsight course - and why every web developer needs to understand HTTPS https://t.co/7gn4GIhv1b

— Troy Hunt (@troyhunt) April 13, 2017

There's 3 and a half hours of HTTPS training on Pluralsight and this course rocketed to as high as the 8th most popular out of a library of more than 6,000 recently. It's still rating 4.9 stars out of 5 too so if you really want to get into detail, check that out too. It turns out that people can actually find HTTPS pretty interesting.