I love a good set of automatically generated code metrics. There’s something about just pointing a tool at the code base and saying “Over there – go and do your thing” which really appeals to the part of me that wants to quantify and measure.

I think part of the appeal is the objectivity of automated code analysis. Manual code reviews are great, but quite apart from the manual labour involved, there’s always a degree of subjectivity the human reviewer brings with them. Of course code reviews are still important, but generated findings and metrics are a nice complement and, because they’re automated, you can run them as frequently as you like.

One thing I’ve found about code reviews in the past – either manual or auto-generated – is that if you do them at the end of a project, it’s too late! You always end up between a rock and a hard place where on the one hand, you feel you’ve got a steaming heap of garbage, but on the other hand you’ve got deadlines and anxious customers.

What you really want to do is to bring your code quality metrics bang into the development process so they’re visible to everyone every single day. You kick them off on day one when there’s an empty project and you don’t stop until the whole thing is delivered. This is where the build server comes into play.

About NDepend

I wrote about NDepend back in April where I was very enthusiastic about its ability to generate some pretty powerful metrics on the codebase. It’s a fantastic tool for getting some very detailed static analysis metrics on your code. Even if not everyone is familiar with metrics such as efferent coupling and cyclomatic complexity, at the very least people should be able to make good sense of things like complex methods and types with too many responsibilities.

About TeamCity

I’ve only just wrapped up the five-part series “You’re deploying it wrong”, which was all about using TeamCity for continuous builds and deployments against Microsoft Web Deploy. I go through the reasons I’m keen on TeamCity in that series but in short, it’s pretty much the front-runner for .NET continuous builds without going down the Team Foundation Server path. It’s also super easy to integrate with code quality tools such as NDepend.

Configuring the build

I’m going to start with the assumption that there’s already an NDepend project in the solution and a TeamCity project configured on the CI server. See the previous posts mentioned above if you’re yet to get to this point.

In Part 4: Continuous builds with TeamCity, I created a build triggered by every VCS commit to ensure the entire solution built successfully. I’d like to use the output of that build, namely the compiled DLLs, so that NDepend can analyse these rather than rebuilding the solution again.

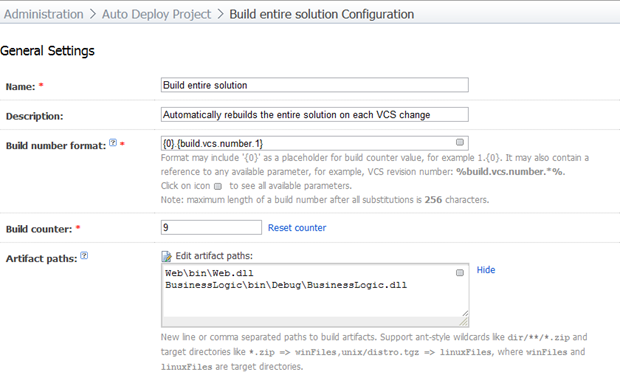

Here’s what the general settings now look like with a couple of artifact paths added:

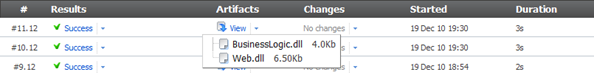

Obviously this solution has both a web project and a business logic project, hence the two assemblies in the artifact paths. What this means is that previous builds will produce some artifacts that look like this:

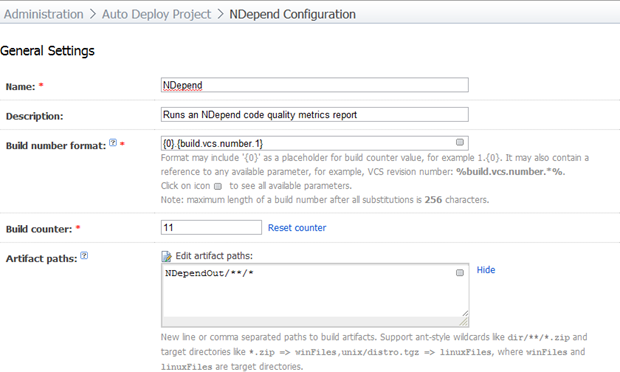

Now let’s go and create a brand new build. Here’s how I’ve configured mine:

The artifact path is important as this needs to pick up the output of the NDepend analysis. I’ll skip any screen grabs of the VCS settings because they’re identical to what I’ve described in previous posts (don’t forget the checkout rule if you have a trunk). Even though we’re going to run against the artifacts from the previous build, we still need the NDepend project file and I’d also like to include the VCS revision number in the build number formatting.

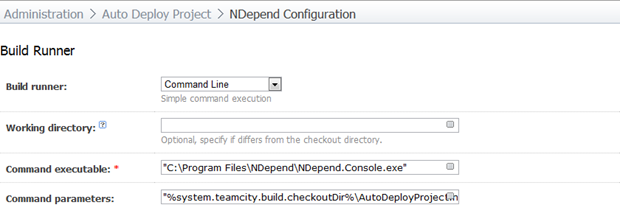

The important bit is the build runner. What we’re going to do is use a command line runner to execute the NDepend console. You can always create a custom build file and run that as an MSBuild task (in fact this is the guidance given on the NDepend website); I just find the command line runner simpler and sufficient for my purposes here, particularly given we’ve already got an existing build to run against.

This is what my configuration looks like:

The executable path is where I’ve manually copied the files, because there’s no installer for NDepend. The command parameters look like this:

"%system.teamcity.build.checkoutDir%\AutoDeployProject.ndproj"

/OutDir "%system.teamcity.build.checkoutDir%\NDependOut"

/InDirs

"%system.teamcity.build.checkoutDir%\Assemblies"

"C:\Windows\Microsoft.NET\Framework\v4.0.30319"

"C:\Windows\Microsoft.NET\Framework\v4.0.30319\WPF"

Note that there are a lot of references to the “%system.teamcity.build.checkoutDir%” variable. The NDepend console runner expects all the paths to be passed in as absolute so we need to get TeamCity to tell us what these are. Note also the first input directory ends in “Assemblies”. This is where we’ll put the artifacts from the first build. More on that shortly.
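To make the absolute path requirement a bit more concrete, here’s a minimal Python sketch of how those parameters hang together once the checkout directory is known. The /OutDir and /InDirs switches, the project file name and the framework directories are the ones from the parameters above; the function name `build_ndepend_args` and the example checkout directory are hypothetical, made up purely for illustration. TeamCity does the variable substitution itself, so the build doesn’t need any of this.

```python
import ntpath  # Windows path rules, so the sketch behaves the same on any OS

# Framework directories copied from the command parameters in this post.
FRAMEWORK_DIRS = [
    r"C:\Windows\Microsoft.NET\Framework\v4.0.30319",
    r"C:\Windows\Microsoft.NET\Framework\v4.0.30319\WPF",
]


def build_ndepend_args(checkout_dir, project_file="AutoDeployProject.ndproj"):
    """Return the console arguments as a list, with every path made absolute.

    build_ndepend_args is a hypothetical helper, not part of NDepend or TeamCity.
    """
    if not ntpath.isabs(checkout_dir):
        raise ValueError("checkout_dir must be absolute: %r" % checkout_dir)
    return [
        ntpath.join(checkout_dir, project_file),             # the NDepend project file
        "/OutDir", ntpath.join(checkout_dir, "NDependOut"),  # where the report lands
        "/InDirs",
        ntpath.join(checkout_dir, "Assemblies"),             # artifacts from the first build
        *FRAMEWORK_DIRS,
    ]


# Hypothetical checkout directory, standing in for %system.teamcity.build.checkoutDir%.
args = build_ndepend_args(r"C:\BuildAgent\work\abc123")
```

Keeping the arguments as a list rather than one long string means each path stays a single argument even if it contains spaces, which is handy if you ever want to run the console from a script rather than from the TeamCity runner.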

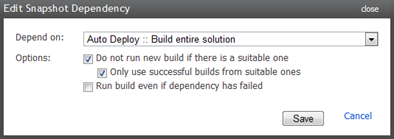

Next up we’ll move on to the dependencies section and add a snapshot dependency on the first build. This will ensure this build only ever runs against sources the other build has already run against:

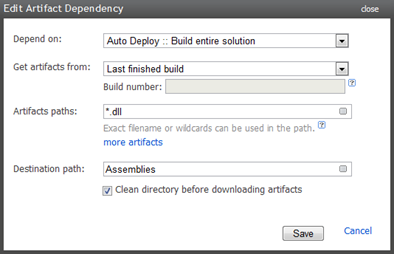

To use the artifacts from the previous build, we’ll just add an artifact dependency that requests all the .dll files and drops them into an “Assemblies” path which this build can now refer to:
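If it helps to picture what that artifact dependency does, here’s a rough Python equivalent: find every .dll in the previous build’s output and copy it into an “Assemblies” folder under the checkout directory. This is only a sketch of the effect, not how TeamCity implements it, and `collect_assemblies` and its parameters are hypothetical names.

```python
import shutil
from pathlib import Path


def collect_assemblies(artifact_dir, checkout_dir):
    """Copy every .dll under artifact_dir into <checkout_dir>/Assemblies.

    Returns the copied file names. Hypothetical helper for illustration only;
    TeamCity's artifact dependency does this job for you.
    """
    target = Path(checkout_dir) / "Assemblies"
    target.mkdir(parents=True, exist_ok=True)
    copied = []
    for dll in sorted(Path(artifact_dir).rglob("*.dll")):
        shutil.copy2(dll, target / dll.name)  # flatten into one folder
        copied.append(dll.name)
    return copied
```

The sketch flattens everything into one folder, which suits NDepend here because the “Assemblies” path is passed as a single input directory.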

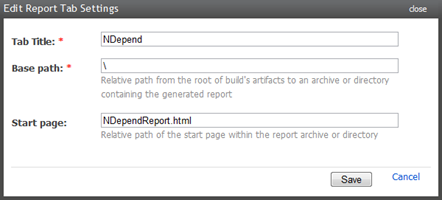

The last thing to do is to ensure the NDepend report is easily accessible via a report tab. Let’s head on over to Administration –> Server Configuration –> Reports tabs and create a new entry like so:

All that’s left to do is run it. We’ll do this on demand for the moment, but a nightly schedule makes sense once the project kicks off.

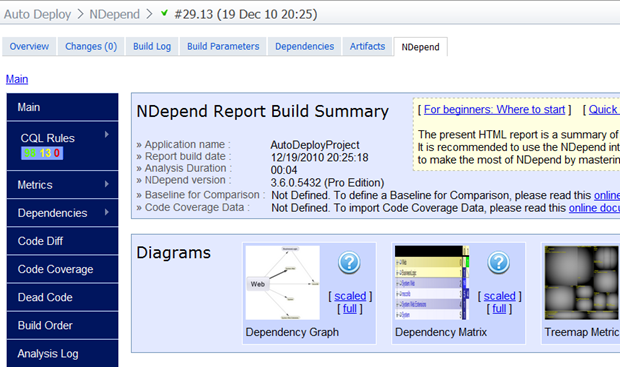

Browsing back over to the homepage of the new NDepend build, the latest build should now display an NDepend tab which will bring the report right into the build page:

Summary

Reports like those from NDepend are great to generate via a build server on a nightly basis so they’re sitting there first thing each morning. I don’t necessarily believe that every recommendation should be strictly adhered to, but I do believe they should be read on a regular basis and, where necessary, consciously not adhered to.

The only other thing that would be really nice to see is an indication of how many new findings there were since the last run. The TeamCity duplicates finder and FxCop builds do this and I find it extremely useful. Obviously NDepend would need a little more awareness of the previous builds to do this, but it seems like the sort of thing there should be an extensible model for from JetBrains.
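The underlying idea is just a set difference between two runs. Here’s a minimal Python sketch of it, assuming each finding can be boiled down to a (rule, target) pair. That representation, the rule names and the type names are all hypothetical; this says nothing about how NDepend or TeamCity actually store their results.

```python
def new_findings(previous, current):
    """Return findings in the current run that were absent from the previous one."""
    return sorted(set(current) - set(previous))


# Hypothetical findings from two consecutive runs, as (rule, target) pairs.
previous_run = [("MethodsTooComplex", "OrderService.Process")]
current_run = [
    ("MethodsTooComplex", "OrderService.Process"),
    ("TypesTooBig", "CustomerRepository"),
]

added = new_findings(previous_run, current_run)
```

In this made-up example only the second finding is new, so that’s the one you’d want flagged on the build page.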

I’m going to write a little more about automated quality metrics from TeamCity shortly. Stay tuned for more quantitative goodness!